Shedding Light on the Future: How Optical Computing Could Revolutionize AI Efficiency

By Amos Z

Chips Powered by Light May Quench AI's Insatiable Energy Demand

This story was first published in Quanta Magazine.

Moore's law, which suggests that the number of transistors on microchips doubles approximately every two years, enhancing their speed and efficiency significantly, is already considered rapid. However, the requirements for computing power in the age of deep learning are escalating at an even quicker rate, one that is likely unsustainable. The International Energy Agency forecasts a tenfold increase in the power consumption of artificial intelligence by 2026 compared to 2023, with data centers expected to consume as much energy as the entire country of Japan in the same year. Nick Harris, the founder and CEO of Lightmatter, a computing-hardware firm, stated, "The computational power demand for AI is doubling every three months," which outpaces the growth rate predicted by Moore's law. He warned, "This could potentially bankrupt companies and strain economies."
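To see how far apart those two growth rates are, the arithmetic can be sketched in a few lines. This is a minimal illustration that takes the two doubling periods quoted above at face value (two years for Moore's law, three months for AI compute demand, per Harris); the function name and the two-year horizon are choices made for this example only.

```python
def growth_factor(months: float, doubling_period_months: float) -> float:
    """Factor by which a quantity grows after `months` if it doubles every period."""
    return 2.0 ** (months / doubling_period_months)


HORIZON_MONTHS = 24  # illustrative horizon: one Moore's-law doubling period

# Transistor counts double roughly every 24 months (Moore's law).
moore = growth_factor(HORIZON_MONTHS, 24)
# AI compute demand doubles every 3 months, according to Harris's quoted figure.
ai_demand = growth_factor(HORIZON_MONTHS, 3)

print(f"Moore's law over two years: {moore:.0f}x")
print(f"AI demand over two years:   {ai_demand:.0f}x")
```

Over the same two years, one doubling stands against eight doublings in a row, which is the gap Harris is pointing to.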

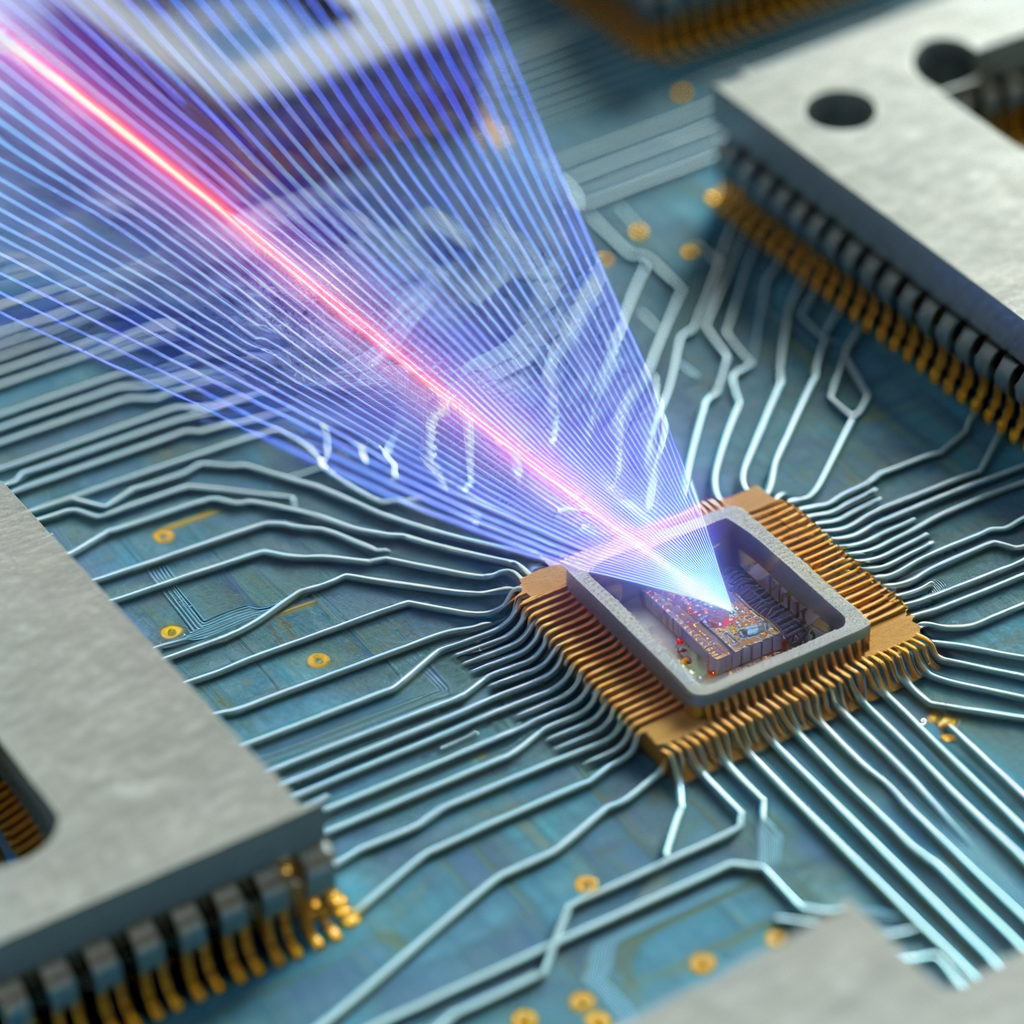

A promising advancement in technology involves a shift from relying on electrons, the backbone of computing for the last half-century, to utilizing photons, tiny units of light, for information processing. Recent findings indicate that when it comes to key computational operations essential to contemporary artificial intelligence, computers that operate on light, known as "optical computers," could present a significant benefit.

The advancement in optical computing is "creating opportunities for significant progress in areas requiring fast and efficient processing, like artificial intelligence," stated Natalia Berloff, a physicist at the University of Cambridge.

Optimal Optical

Theoretically, light offers intriguing advantages. Firstly, optical signals have the capacity to transport a greater volume of information compared to electrical signals due to their higher bandwidth. Additionally, because optical frequencies surpass those of electrical signals, optical setups are capable of executing more computational operations in a shorter duration and with reduced delay.

Then there is the question of efficiency. Beyond the environmental and financial costs of relatively wasteful electronic chips, they run so hot that only a small fraction of their transistors, the tiny switches at the heart of all computing devices, can be active at any moment. Optical computers could, in theory, run more operations at the same time, processing more data while using less energy. "Harnessing these benefits," remarked Gordon Wetzstein, an electrical engineer at Stanford University, "could unlock numerous new opportunities."

Nick Harris established a business that develops chips utilizing photons rather than electrons.

Recognizing these potential benefits, researchers have long tried to harness light for artificial intelligence, a field with heavy computational demands. In the 1980s and 1990s, for example, researchers used optical systems to build some of the earliest neural networks. Demetri Psaltis and two colleagues at the California Institute of Technology created an inventive facial recognition system using one of these early optical neural networks (ONNs). They stored images of a subject (one of the researchers, in fact) as holograms in a photorefractive crystal. The holograms were then used to train the ONN, which could afterward recognize new images of the researcher and tell him apart from his colleagues.

However, light is not without its limitations. A key issue is that photons typically do not interact with one another, making it challenging for one signal to influence or regulate another, a fundamental function of standard transistors. Additionally, transistors have proven to be extremely effective. They are now produced in the billions on small, coin-sized chips, a testament to the gradual enhancements made over many years.

In recent years, however, a breakthrough application for optical computing has emerged: multiplying matrices.

A Glimpse into Basic Arithmetic

Multiplying matrices, or arrays of numbers, underlies much of heavy-duty computing. In neural networks specifically, matrix multiplication is a fundamental step both in training networks on existing data and in processing new data with trained networks. And light may be a better medium for matrix multiplication than electricity.
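For readers who want to see what that operation looks like, here is a minimal sketch of the matrix-vector product at the core of one neural-network layer. The weights and inputs are made-up numbers chosen for this illustration, and the helper name `matvec` is hypothetical; real networks do the same thing with far larger matrices.

```python
def matvec(weights, inputs):
    """Multiply a matrix (list of rows) by a vector: one weighted sum per row."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


# Hypothetical layer: 3 input values feeding 2 output neurons.
weights = [
    [0.5, -1.0, 2.0],   # connection strengths into output neuron 0
    [-1.5, 0.0, -0.5],  # connection strengths into output neuron 1
]
inputs = [2.0, 1.0, 0.5]

outputs = matvec(weights, inputs)

# Each output costs one multiply-and-add per input value.
multiply_adds = len(weights) * len(inputs)

print("outputs:", outputs)
print("multiply-add operations:", multiply_adds)
```

The number of multiply-add steps grows with the product of the matrix dimensions, which is why this one operation dominates the computing bill for large networks.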

In 2017, this approach to AI computation took a significant leap when a group led by Dirk Englund and Marin Soljačić of the Massachusetts Institute of Technology described how to build an optical neural network on a silicon chip. The team encoded the values they wanted to multiply onto beams of light, then sent the beams through a series of components that altered each beam's phase (the way its light waves oscillate), with each phase change representing a step in the multiplication. By repeatedly splitting the beams, changing their phases, and recombining them, the researchers made the light carry out matrix multiplication. Photodetectors at the end of the chip measured the beams and read out the result.
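The split, phase-shift, recombine sequence can be illustrated with a textbook model of a single interferometer. The sketch below is an idealized, lossless two-port device, not the MIT chip's actual design: the 50:50 beam-splitter matrix, the helper names, and the single phase shifter are all assumptions made for this example. It shows how one phase setting acts like a tunable multiplier on the light reaching each detector.

```python
import cmath
import math


def matmul2(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def apply(m, v):
    """Apply a 2x2 matrix to a pair of complex beam amplitudes."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


S = 1 / math.sqrt(2)
# Idealized 50:50 beam splitter (assumed textbook form).
BEAM_SPLITTER = [[S, 1j * S], [1j * S, S]]


def phase_shifter(theta):
    """Delay the light in the upper arm by phase `theta`; lower arm untouched."""
    return [[cmath.exp(1j * theta), 0], [0, 1]]


def interferometer(theta):
    """Split the beams, shift one arm's phase, then recombine."""
    return matmul2(BEAM_SPLITTER, matmul2(phase_shifter(theta), BEAM_SPLITTER))


def detected_power(theta, amplitudes):
    """Power a photodetector would read at each output port."""
    return [abs(a) ** 2 for a in apply(interferometer(theta), amplitudes)]


# Send unit-power light into port 0 and sweep the phase setting.
for theta in (0.0, math.pi / 2, math.pi):
    p0, p1 = detected_power(theta, [1.0, 0.0])
    print(f"theta={theta:.3f}  port0={p0:.3f}  port1={p1:.3f}")
```

In this model the fraction of power arriving at port 0 is sin²(θ/2), so choosing the phase sets a weight between 0 and 1. Larger matrices would, under the same assumptions, be built by chaining many such two-port stages.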

Lightmatter is set to launch its Passage chip in 2026, which will integrate electronic components with optical interconnections.

The researchers trained their experimental device to recognize spoken vowels, a common benchmark task for neural networks. With the advantages of light, it could do so faster and more efficiently than an electronic device. Other researchers had already recognized that optics could be well suited to matrix multiplication; the 2017 paper showed how to put the idea into practice.

The research "sparked a significant resurgence in the focus on Optical Neural Networks (ONNs)," according to Peter McMahon, a specialist in photonics from Cornell University. "That particular study has had a profound impact."

Innovative Developments

Since that 2017 paper, the field has seen steady improvement as various researchers have proposed new kinds of optical computers. Englund and several collaborators recently unveiled a new optical network they call HITOP, which combines multiple advances. Most importantly, it aims to scale up computation across time, space, and wavelength. Zaijun Chen, a former MIT postdoc now at the University of Southern California, said this helps HITOP overcome one of the main drawbacks of optical neural networks: It takes significant energy to convert data between electronic and optical form. By packing information into three dimensions of light, Chen said, HITOP pushes more data through the ONN faster and spreads the conversion energy over many calculations, which lowers the energy cost per calculation. The researchers report that HITOP can run machine-learning models up to 25,000 times larger than earlier chip-based ONNs could handle.
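The amortization argument can be made concrete with a small calculation. The sketch below uses a deliberately simple cost model and invented numbers in arbitrary energy units; none of the figures are HITOP measurements, and the function name is hypothetical. It only illustrates why spreading one electronic-to-optical conversion over more calculations lowers the cost of each one.

```python
def energy_per_operation(conversion_energy: float,
                         optical_energy_per_op: float,
                         ops_per_conversion: int) -> float:
    """Average energy per operation when one conversion is shared by many operations.

    Simplified model (an assumption for illustration):
        total = conversion_energy + ops_per_conversion * optical_energy_per_op
    """
    return conversion_energy / ops_per_conversion + optical_energy_per_op


# Illustrative values only, in arbitrary units.
CONVERSION_ENERGY = 1000.0  # cost of moving data between electronic and optical form
OPTICAL_OP_ENERGY = 1.0     # cost of one operation once the data is encoded in light

for ops in (1, 1_000, 1_000_000):
    cost = energy_per_operation(CONVERSION_ENERGY, OPTICAL_OP_ENERGY, ops)
    print(f"{ops:>9} operations per conversion -> {cost:.3f} units per operation")
```

Under this model the per-operation cost approaches the optical cost alone as the number of operations sharing each conversion grows, which is the effect Chen describes.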

It's important to note that the system hasn't yet reached the level of its digital counterparts; HITOP is capable of executing around 1 trillion operations per second, but advanced Nvidia processors can handle 300 times that amount of data, according to Chen, who aims to enhance the technology to boost its performance. However, the optical chip's energy efficiency is noteworthy. "The key advantage we've achieved is reducing the energy consumption by a factor of 1,000," Chen stated.

Other groups have built optical computers with different advantages. Recently, a team at the University of Pennsylvania described a new kind of ONN that is unusually flexible. It works by shining a laser onto specific regions of the chip's semiconductor, which changes the optical properties of those regions. In this way the laser sets the route the optical signal takes and therefore the computation it performs. That lets the researchers reconfigure what the system does with ease, in sharp contrast to most other chip-based systems, optical and electronic alike, where the routes are fixed during fabrication and are hard to change.

Bhavin Shastri played a key role in creating an optical neural network designed to eliminate the disruption caused by overlapping wireless signals.

Tianwei Wu, who spearheaded the research, remarked, "We've developed something remarkably straightforward. The laser patterns can be swiftly adjusted according to our needs." The team used the system to build a neural network capable of distinguishing between vowel sounds. Most photonic systems have to be trained before they are built, because training requires reconfiguring connections. Because this system is so easy to reconfigure, the researchers could instead train the model after it was installed on the semiconductor. They now plan to enlarge the chip and encode more information in different wavelengths of light, which should increase the amount of data it can handle.

The advancements made have even astonished Psaltis, the architect of the facial recognition technology from the 1990s. "What we considered our most ambitious hopes four decades ago pale in comparison to the reality we've witnessed."

Dawning Light

The field of optical computing has seen rapid progress in recent years, yet it hasn't come close to replacing the electronic chips that power neural networks outside research environments. Although there have been announcements of photonic systems surpassing their electronic counterparts, these claims often involve running outdated network designs on small-scale models and tasks. Moreover, Bhavin Shastri from Queen’s University in Ontario points out that many claims of superiority in photonics don't provide a complete picture. He explains that comparisons between photonic and electronic systems are challenging. For example, discussions about the use of lasers in photonic systems frequently overlook the energy required to operate those lasers.

Laboratory systems must be scaled up before they can show a competitive advantage. "What size does it need to reach to achieve superiority?" McMahon asked. The answer: very large. That is why no one can yet match Nvidia's chips, which power many of the most advanced AI systems in use today. On the way to that scale, a long list of difficult engineering problems must be solved, problems the electronics industry has worked through over decades. "Electronics begins with a considerable lead," McMahon noted.

Some experts believe that AI systems powered by Optical Neural Networks (ONN) will initially make a mark in niche areas where they can leverage their distinct benefits. According to Shastri, one area where they show great promise is in mitigating the interference among various wireless signals, such as those from 5G networks and the radar altimeters crucial for aircraft navigation. Earlier this year, Shastri, along with a team of researchers, developed an ONN capable of isolating and identifying specific transmissions in real-time. Impressively, this ONN can process signals in less than 15 picoseconds (a picosecond is one trillionth of a second)—a speed over a thousand times faster than its electronic counterparts, while consuming less than 1/70th of the energy.

McMahon emphasized that the ultimate goal, an optical neural network that outperforms electronic systems in general use, is still worth pursuing. Last year his team ran simulations suggesting that, within a decade, a full-scale optical system could make certain AI models more than a thousand times as efficient as future electronic systems. "Numerous firms are currently aiming for a modest 1.5-times improvement. Achieving a thousand-fold increase would be extraordinary," he remarked. "This could be a decade-long endeavor, should it prove successful."

This article is republished with consent from Quanta Magazine, a standalone publication supported by the Simons Foundation. Its goal is to boost public comprehension of science through reporting on new findings and movements in the fields of mathematics, physics, and the biological sciences.
