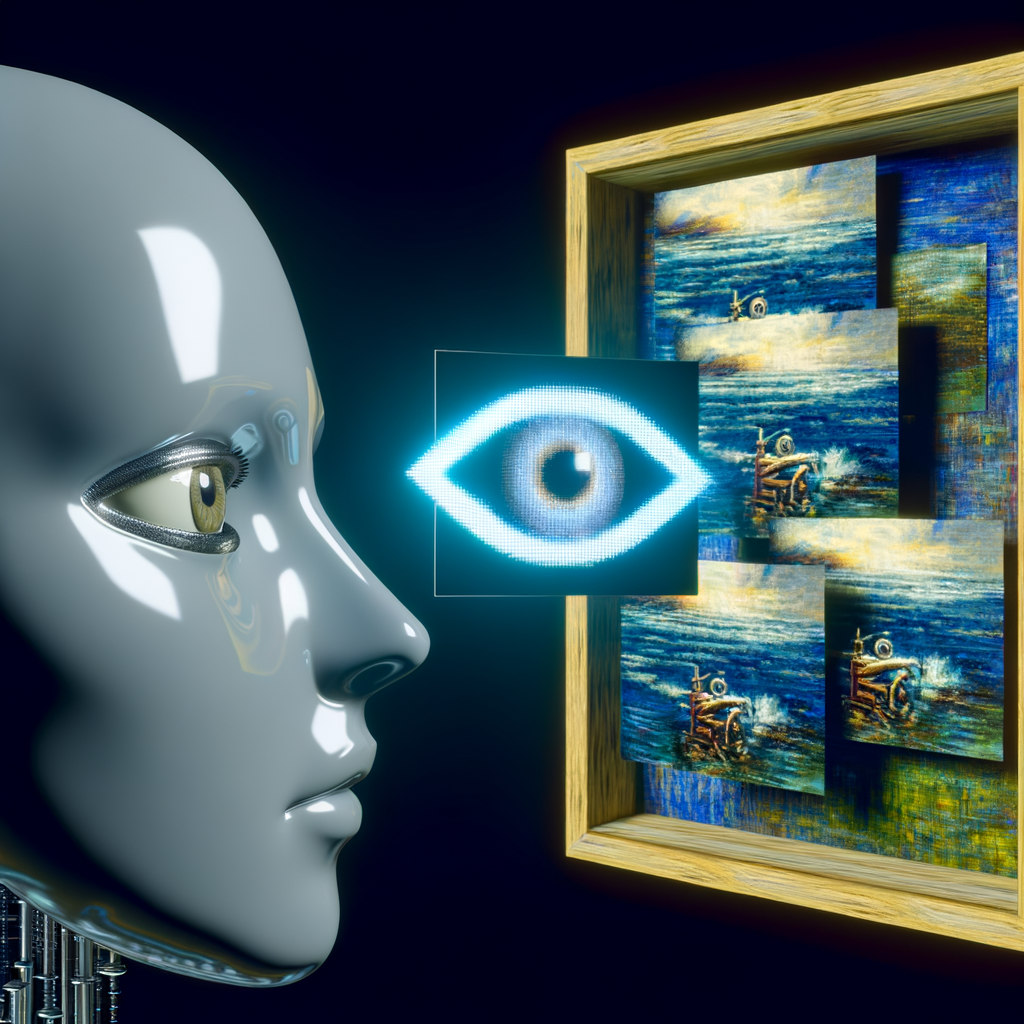

Adobe’s AI Training Controversy: Artists Skeptical Despite Clarifications on Content Use

Tiffany Ng

Adobe Announces It Will Not Use Artists' Work for AI Training, Skepticism Remains Among Creatives

The creative community was in turmoil after discovering Adobe had discreetly altered its terms of service in February. The company indicated it might utilize users' content via automated and manual processes, employing machine learning to enhance its services and software. This was widely interpreted by users as Adobe demanding unrestricted use of their creations to train its generative AI technology, Firefly.

On Tuesday evening, Adobe released a statement to clear things up: In a revised terms of service, it committed not to train AI on its users' content, whether stored on their devices or in the cloud, and to let users opt out of content analysis.

The vaguely worded update, which arrived amid ongoing legal battles over intellectual property, exposed a growing distrust among creators, many of whom depend heavily on Adobe for their work. “Our trust has been shattered,” says Jon Lam, a leading storyboard artist at Riot Games, pointing to an incident in which renowned artist Brian Kesinger found images imitating his art style being sold on Adobe's stock image platform without his permission. And just this month, the estate of the late photographer Ansel Adams publicly criticized Adobe for allegedly selling AI-generated imitations of his photographs.

Scott Belsky, Adobe's chief strategy officer, tried to ease concerns as artists began speaking out, explaining that the terms' reference to machine learning pertains to the company's non-generative AI tools. One example he cited was Photoshop’s “Content Aware Fill” feature, which uses machine learning to let users remove objects from a photo. Adobe insists that the updated terms do not give it ownership of user content and has promised not to use that content to train Firefly, but the clarification failed to quell fears. The episode set off a broader debate about Adobe's market dominance and the risk such policy changes pose to creatives' livelihoods. Lam is among the artists who remain skeptical, fearing that, whatever the company says, Adobe might still use content created on its platforms to train Firefly without the creators' permission.

Concern that generative AI tools use and profit from copyrighted material without authorization is not new. Early last year, artist Karla Ortiz discovered that simply entering her name into various AI platforms could generate images of her art. The discovery led to a class action lawsuit against Midjourney, DeviantArt, and Stability AI. Ortiz was not alone: Greg Rutkowski, a Polish artist known for his fantasy artwork, found that his name was among the most frequently used prompts in Stable Diffusion, a generative AI tool, when it debuted in 2022.

Adobe, the maker of Photoshop and inventor of the PDF, has been the industry standard in the creative sector for more than three decades, serving a vast number of creative professionals. Its bid to acquire the product design firm Figma was blocked and ultimately abandoned in 2023 over regulatory concerns about its market dominance.

Adobe claims that its Firefly technology has been developed in an ethical manner, drawing on images from Adobe Stock for its training. However, veteran stock image contributor Eric Urquhart challenges this assertion, arguing that Adobe's training methods for Firefly were far from ethical, given that Adobe does not possess the rights to use images from individual contributors. Urquhart, who initially shared his photographs on Fotolia—a platform later acquired by Adobe in 2015—highlights that the original agreement he entered into did not cover the use of his work for training generative AI technologies. Following the acquisition, Adobe subtly modified its terms of service, thereby granting itself the authority to utilize Urquhart’s images to develop Firefly, without directly obtaining his permission. Urquhart notes, “The wording in the recent terms of service amendment closely resembles what I've encountered in the Adobe Stock terms of service.”

Following the launch of Firefly, numerous creatives have opted to terminate their Adobe subscriptions, turning instead to alternatives such as Affinity and Clip Studio, a move they describe as challenging and strenuous. However, some remain tethered to Adobe out of necessity. “From a professional standpoint, leaving Adobe isn't an option for me,” states Urquhart.

Adobe has previously acknowledged its responsibility to the creative sector. In September 2023, it proposed the Federal Anti-Impersonation Right (FAIR) Act, legislation designed to safeguard artists against unauthorized use of their work. The proposal covers only intentional impersonation for commercial gain, which raises concerns about its effectiveness (it would not cover works unintentionally created in an artist's style) and about privacy (proving intent would involve collecting and scrutinizing user inputs).

Beyond Adobe, various entities are exploring innovative methods to verify ownership of creative works and combat the theft of intellectual property. At the University of Chicago, a group of scholars has introduced Nightshade, a mechanism designed to corrupt training data and disrupt the performance of AI models that generate images. Additionally, they've created Glaze, a technology aimed at allowing creators to conceal their unique styles from AI firms. From a regulatory perspective, the Concept Art Association, which includes Lam as a member, is actively campaigning for the protection of artists through the organization of lobbying efforts funded by public contributions.

© 2024 Condé Nast. All rights reserved.
