Loneliness Unleashed: How the Quest for Connection Fuels a Multimillion-Dollar Romance Scam Crisis

The Crisis of Isolation as a Security Threat

Loneliness has reached unprecedented levels. Beyond its substantial toll on mental health, growing isolation and weakening social connections are creating significant security risks. Particularly alarming is the surge in romance scams, a form of digital deception that preys on people's solitude and funnels hundreds of millions of dollars a year to fraudsters. As scammers streamline their operations and adopt advanced AI tools, these scams are growing dramatically in scope and efficiency.

Romance scams, a type of confidence trick, are highly interactive: perpetrators must build relationships with their victims through online dating platforms and social networks. Although generative AI chatbots are already used in various kinds of fraud to write dialogue and communicate in multiple languages, they cannot yet carry out a romance scam on their own. However, as the number of susceptible people grows, experts believe automation could significantly help these con artists.

"Fangzhou Wang, an assistant professor specializing in cybercrime studies at the University of Texas at Arlington, observes that these fraudulent activities are becoming increasingly structured. According to him, these operations are recruiting people globally, allowing them to reach a diverse range of targets. With the widespread use of dating apps and social media, there are numerous chances for fraudsters to exploit, providing them with a rich environment for their schemes."

Romance scams have become a lucrative venture. A review of ten years of the FBI's annual internet crime reports shows that victims in the United States lost approximately $4.5 billion to romance and confidence scams over that decade. (The latest data runs through the end of 2023.) According to the FBI's records, romance and confidence scams have caused losses averaging about $600 million a year over the last five years, with losses surging to nearly $1 billion in 2021. Some estimates suggest the true financial impact is even greater. Although losses attributed to romance scams have declined slightly in recent years, there has been an uptick in so-called pig butchering scams, which typically involve elements of confidence fraud.

WIRED embarked on a quest to uncover the dynamics of contemporary love, discovering a complex landscape filled with fraudulent schemes, artificial intelligence companions, and exhaustion from endless swiping on Tinder. However, they also found that a future enriched with intelligence, humanity, and greater joy remains within reach.

Romance scams proliferate across the internet: some perpetrators send mass messages to countless people on Facebook, while others swipe right on every profile they come across on dating platforms. The schemes are run by a diverse range of fraudsters, from West African "Yahoo Boys" to large-scale fraud operations in Southeast Asia. Regardless of where the scammers are based, once they make contact with a target, they follow a disturbingly consistent playbook to build an emotional bond with the people they aim to swindle.

"Elisabeth Carter, an associate professor of criminology at Kingston University London, who has conducted in-depth research on these scams and their effects on individuals, states that being a victim of romance fraud is incomparably the most harrowing experience."

Online dating has become a widely accepted way to look for love and companionship. Now that advanced AI chatbots are available on many mobile devices, they have quickly become another way for people to explore romantic and social connections. Although today's technology cannot yet hand off an entire romance scam to a chatbot, there is a clear risk that malicious actors could use AI to write deceptive scripts and generate conversation for many simultaneous interactions, potentially in several languages.

Wang, of UTA, says that although she hasn't studied whether fraudsters are using generative AI to write scripts for romance scams, she has seen signs that it is being used to create content for online dating profiles. "It seems to be a reality already, sadly," she remarks. "At the moment, scammers are simply utilizing profiles generated by AI."

In Southeast Asia, perpetrators are building AI into their fraud operations: a United Nations report from October found that organized crime groups there are creating customized scripts to deceive people during live conversations in numerous languages. Google has reported that businesses are receiving AI-generated scam emails, and the FBI has noted that AI lets offenders communicate with their targets more quickly.

Offenders employ various manipulative strategies to ensnare their targets and cultivate what appears to be genuine romantic bonds. This involves posing personal inquiries that would typically only be exchanged between close friends or partners, such as those regarding past relationships or dating experiences. Perpetrators further deepen this illusion of intimacy by engaging in "love bombing," a method where they shower their targets with affectionate language to foster an accelerated sense of connection and intimacy. As these romance scams develop, it's increasingly common for the perpetrators to refer to their victims as their significant other, using terms like "girlfriend," "boyfriend," or even "husband" or "wife" to denote a false sense of commitment and loyalty.

Carter points out that a fundamental strategy in romance fraud is to portray the fabricated romantic persona as defenseless and in distress. For instance, scammers on dating platforms may even claim to have been scammed themselves and say they are reluctant to trust again. By raising the possibility of deceit up front, they make it seem less likely to the victim that the person they are talking to is, in fact, a fraudster.

This vulnerability plays a pivotal role in enabling perpetrators to extract money from their targets. Carter outlines a common tactic where these individuals initially claim to be experiencing financial difficulties within their business without directly asking for money. They then let the subject drop, only to revisit it a few weeks later. At this juncture, the manipulated individual might feel compelled to help and might even suggest sending money themselves. In some instances, culprits may initially reject the offer of financial help, pretending to dissuade the victim from parting with their money. This strategy is designed to convince the target that it is not only safe but also crucial to support someone they hold dear, further deepening the manipulation.

Carter points out that the request is never framed as the offender wanting money for personal gain. She highlights a significant parallel between the way fraudsters communicate and the language used by domestic abusers and people who exhibit controlling behavior.

Brian Mason, a constable at the Edmonton Police Service in Alberta, Canada, who assists scam victims, notes that individuals grappling with loneliness often fall prey to romance scams. He mentions, "Convincing a victim that their romantic interest doesn't actually harbor feelings of love for them is particularly challenging in cases of romance scams."

Mason recounts a scenario where he dedicated two years to assisting a person who fell prey to a romantic deception. During a progress report, he discovered the victim had resumed communication with the fraudster. "He managed to reel her back into the scheme, convincing her to remit funds once more, all because she yearned for his photographs due to her solitude," Mason elaborates. By the close of 2023, the World Health Organization recognized severe loneliness as a persistent risk to individuals' well-being.

Shame and humiliation often make it hard for victims to acknowledge what is happening to them. Carter, of Kingston, observes that perpetrators exploit this early on, insisting that their conversations remain confidential on the grounds that their bond is unique and would be misunderstood by others. This secrecy, combined with tactics that lead victims to offer money voluntarily rather than being asked for it directly, makes it difficult for even the most vigilant and reflective people to recognize that they are being deceived.

Carter explains that fraudsters effectively mask warning signals and alerts. They manage to deceive individuals in such a way that those targeted not only lose a significant amount of money but are also betrayed by someone they hold in high esteem and trust deeply at that time. The fact that these interactions occur digitally and are entirely fabricated doesn’t diminish the genuine feelings of the victims involved.


© 2025 Condé Nast. All rights reserved.

Choose a global website

AI

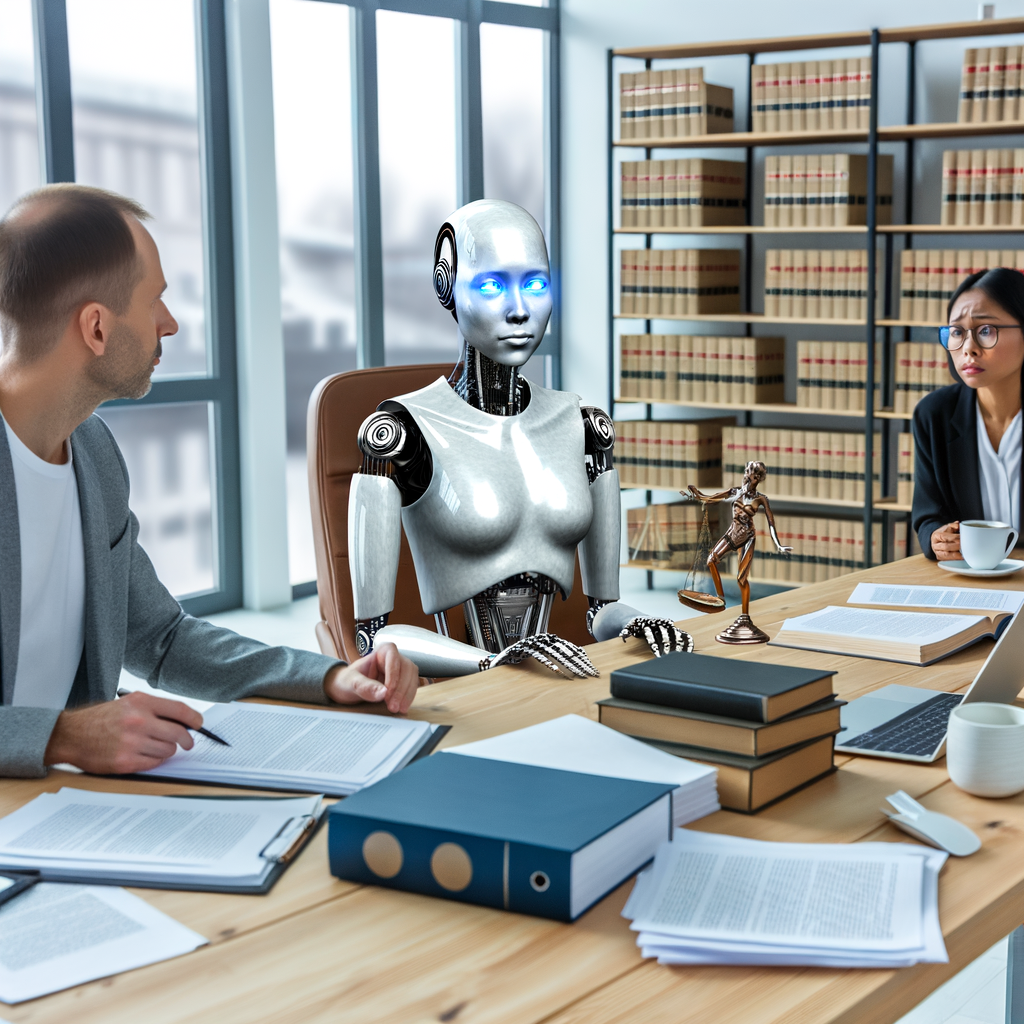

Empowering Justice: How AI Lawyer Transforms Legal Support for Employees, Tenants, and Small Businesses

In an era where technology is revolutionizing nearly every aspect of our lives, the legal field is not left behind. Enter the AI Lawyer—a groundbreaking digital legal assistant designed to empower individuals with instant access to essential legal support. From understanding employment rights after a wrongful termination to navigating the complexities of divorce and tenant disputes, this AI legal tool is redefining the way people approach legal challenges. With its ability to provide free legal advice online, the AI Lawyer ensures that individuals, regardless of their background or income, have the resources they need to advocate for themselves.

This article will explore the myriad ways in which the AI Lawyer serves as a virtual legal assistant, offering quick, understandable answers to pressing legal questions. Whether you’re a freelancer seeking guidance on small business regulations or a tenant facing unfair rent increases, this digital legal platform is always online—ready to provide comprehensive support. We’ll delve into inspiring stories of empowerment, showcasing how this innovative legal chatbot has given a voice to the underdog, transforming daunting legal hurdles into manageable tasks. Join us as we uncover the future of legal assistance, where instant legal support is just a question away.

1. **"Empowering Employees: How AI Lawyer Provides Instant Legal Support for Workplace Rights"**
   - Explore how this AI legal tool helps individuals understand their rights after being fired or unfairly treated, ensuring they have access to free legal advice online.
2. **"Tenant Triumphs: Utilizing the AI Lawyer for Effective Dispute Resolution in Rental Issues"**

1. **"Empowering Employees: How AI Lawyer Provides Instant Legal Support for Workplace Rights"**

In today's fast-paced work environment, employees often find themselves navigating complex employment laws that can feel intimidating and overwhelming, especially after facing termination, layoffs, or unfair treatment. This is where an AI lawyer, designed as a virtual legal assistant, steps in to offer crucial support. By leveraging cutting-edge technology, this digital legal advice tool provides instant legal support for workplace rights, helping individuals understand their entitlements and options without the added stress of traditional legal processes.

With the rise of legal chatbots and AI legal tools, employees can access free legal advice online at any time, making it easier to confront workplace issues head-on. Whether it's understanding the implications of a wrongful termination, deciphering a layoff notice, or addressing claims of harassment, the AI lawyer simplifies complex legal jargon into clear, actionable insights. Users can input their specific situations and receive tailored guidance within seconds, ensuring they are well-informed about their rights and available recourse.

Moreover, the AI lawyer operates as a 24/7 digital legal support system, providing assistance even outside regular business hours. This constant availability is particularly beneficial for those in precarious employment situations who may need immediate answers or support. By empowering employees with easy access to legal knowledge, the AI legal platform helps level the playing field, enabling users to advocate for themselves and seek justice confidently.

As more individuals turn to online legal help, the AI lawyer stands out as a revolutionary solution that democratizes access to legal resources. It transforms the landscape of employment law by not only offering instant legal support but also by fostering a culture of awareness and empowerment among employees. With the help of this innovative technology, employees can navigate their workplace rights with clarity and confidence, ensuring they are never alone in their fight for fairness and justice.

Explore how this AI legal tool helps individuals understand their rights after being fired or unfairly treated, ensuring they have access to free legal advice online.

Navigating the complexities of employment law can be daunting, especially for individuals who have recently been fired or unfairly treated in the workplace. Fortunately, the emergence of AI legal tools is transforming how employees access legal information and support. With the help of an AI lawyer or virtual legal assistant, individuals can now understand their rights in a fraction of the time it would take through traditional means.

When faced with job loss, many employees feel overwhelmed and uncertain about their next steps. This is where an online legal help platform becomes invaluable. By simply typing a question into a legal chatbot, users can receive instant legal support tailored to their specific situation. Whether it's understanding wrongful termination, navigating severance packages, or identifying signs of discrimination, these AI legal tools provide free legal advice online that is both accessible and easy to comprehend.

Moreover, the digital legal advice offered by AI platforms is designed to empower users with knowledge. Employees can explore their rights without the pressure of scheduling consultations or incurring hefty legal fees. This level of accessibility ensures that even those from underserved communities have the opportunity to seek justice and understand their entitlements.

As a result, countless individuals who previously felt powerless now have the resources to challenge unfair treatment. The AI lawyer acts as a bridge, connecting users to the legal information they need to advocate for themselves. This empowerment is crucial, especially in a landscape where many feel they have no recourse. With 24/7 availability, these digital legal assistants are always on hand, ready to provide guidance and reassurance at any hour.

In summary, the integration of AI legal tools into the realm of employment law is a game changer. By providing free, instant legal advice and support, these innovative platforms ensure that everyone, regardless of their background or income, can understand their rights after being fired or unfairly treated. The future of legal assistance is here, and it’s more accessible than ever before.

2. **"Tenant Triumphs: Utilizing the AI Lawyer for Effective Dispute Resolution in Rental Issues"**

In today's rental landscape, tenants often face challenges such as unfair rent increases, unjust eviction notices, and disputes over security deposits. Fortunately, the advent of the AI lawyer has transformed how tenants can address these issues. By utilizing a virtual legal assistant, renters can access instant legal support that was once reserved for those who could afford a traditional attorney.

The AI legal tool provides a user-friendly interface where individuals can input their specific concerns and receive tailored digital legal advice in seconds. For example, if a tenant is faced with a sudden rent hike, they can simply query the legal chatbot about their rights and potential defenses. This immediate access to free legal advice online empowers renters to understand their options and take action before the situation escalates.

Moreover, the AI lawyer offers a wealth of resources, guiding users through the processes of disputing eviction notices or recovering their deposits. With the ability to navigate complex legal language and regulations, this online legal help ensures that tenants are equipped with the knowledge they need to advocate for themselves effectively.

The 24/7 availability of these digital legal platforms means that tenants can seek assistance at any time, alleviating the stress of waiting for office hours to resolve urgent issues. By leveraging the power of AI in the realm of tenant rights, individuals can turn the tide in their favor, transforming potential disputes into triumphs. The stories of those who have successfully utilized this technology highlight how the AI lawyer is not just a tool, but a crucial ally for renters seeking justice in a complicated rental market.

In an era where access to legal support can often seem daunting, the emergence of AI Lawyer as a virtual legal assistant is revolutionizing the way individuals navigate their rights and legal challenges. From empowering employees to understand their workplace rights after being unfairly treated, to assisting tenants in disputing unjust rent increases or eviction notices, this AI legal tool is proving to be an invaluable resource.

Moreover, in the emotionally charged realm of divorce and separation, especially for women seeking clarity on custody and alimony, AI Lawyer stands as a compassionate ally. Small business owners and freelancers, who traditionally might shy away from legal consultations due to cost concerns, can now leverage this digital legal advice platform to receive practical guidance tailored to their needs.

The beauty of AI Lawyer lies in its commitment to providing free legal advice online, ensuring that everyone, regardless of background or income, has access to instant legal support. With its 24/7 availability, this legal chatbot is always on hand to deliver quick, plain-English answers, bridging the gap where traditional law offices fall short.

As we reflect on the stories of those who have found empowerment through this innovative technology, it becomes clear: AI Lawyer is not just a tool but a beacon of hope for the underdog. By democratizing legal support, it is redefining the landscape of justice, allowing individuals to reclaim their power and assert their rights with confidence. In a world where legal complexities can feel overwhelming, the AI legal platform is paving the way for a more equitable future.


Empowering Your Rights: How AI Lawyer Revolutionizes Access to Legal Support for Employees, Tenants, and Individuals in Need

In an era where legal complexities often leave individuals feeling powerless, the advent of AI lawyer technology is revolutionizing access to legal support. This innovative legal AI platform serves as a virtual legal assistant, providing instant legal help to those navigating the turbulent waters of employment disputes, tenant rights, divorce, and small business challenges. Whether you’ve been unfairly treated at work, face unjust rent increases, or are dealing with the emotional fallout of separation, the AI legal tool is here to empower you with clarity and confidence. With the ability to deliver free legal advice online, this digital legal advice resource is transforming how individuals secure their rights—no matter their background or income level. In this article, we’ll explore the various ways AI Lawyer is changing the legal landscape, offering 24/7 support and plain-English answers to legal questions, ensuring that everyone has access to the justice they deserve. Join us as we delve into the stories of empowerment and resilience, showcasing how this legal chatbot is giving a voice to the underdog and redefining the meaning of legal support.

1. **Revolutionizing Rights: How AI Lawyer Provides Instant Legal Support for the Unfairly Treated**
   - Explore how this innovative legal AI platform empowers employees to understand their rights after being fired or laid off.
2. **Navigating Tenant Rights: Using AI Lawyer for Fair Housing and Legal Clarity**

1. **Revolutionizing Rights: How AI Lawyer Provides Instant Legal Support for the Unfairly Treated**

In an era where immediate access to information is a given, the legal industry is experiencing a significant transformation through the introduction of AI lawyers. These virtual legal assistants are revolutionizing the way individuals receive support when facing unfair treatment in the workplace, ensuring that employees who have been fired, laid off, or unjustly treated are not left in the dark about their rights.

AI lawyers serve as powerful legal tools, providing instant legal support that is both accessible and user-friendly. With just a few clicks, individuals can engage with a legal chatbot that offers tailored, plain-English advice on their specific situations. This online legal help eliminates the often intimidating barriers associated with traditional legal consultations, allowing users to gain crucial insights into their rights without the stress of high costs or complex legal jargon.

One of the standout features of an AI legal platform is its ability to offer free legal advice online, making essential information available to everyone, regardless of their background or income level. This democratization of legal support empowers those who may not have previously sought help due to financial constraints or fear of the legal system. With the ability to ask questions and receive legally sound answers in mere seconds, users can quickly understand their options and take informed steps toward resolving their issues.

Moreover, the 24/7 availability of these digital legal services means that individuals can seek assistance at any hour, breaking free from the limitations of traditional law offices that operate on standard business hours. This constant support is particularly beneficial for those who may be navigating emotionally taxing situations, such as employment disputes or unfair treatment.

In summary, AI lawyers are not just a technological advancement; they are a transformative force in the legal landscape. By providing instant legal support and easy access to vital information, these innovative solutions are empowering individuals who feel powerless in the face of unfair treatment, ensuring that everyone has a chance to understand and assert their rights.

Explore how this innovative legal AI platform empowers employees to understand their rights after being fired or laid off.

In today’s rapidly changing job market, employees often face uncertainty regarding their rights after being fired or laid off. Enter the AI lawyer—a groundbreaking virtual legal assistant designed to empower individuals with instant legal support when they need it most. This innovative legal AI platform offers online legal help that demystifies the complexities of employment law, ensuring that employees understand their rights and options.

Many employees may feel overwhelmed and unsure of their next steps after receiving a termination notice. With the AI legal tool, users can access free legal advice online, providing clarity on issues such as wrongful termination, severance pay, and unemployment benefits. The legal chatbot feature allows individuals to ask specific questions and receive legally sound, plain-English answers in seconds—removing the barriers that often inhibit access to legal information.

By utilizing this digital legal advice resource, employees gain the confidence to challenge unfair treatment by their employers. The AI lawyer not only informs users of their rights but also offers guidance on how to take action—be it filing a complaint or negotiating a severance package. This level of support and empowerment is especially crucial for those who may lack the financial means to consult traditional legal counsel.

Moreover, the 24/7 availability of the AI lawyer ensures that employees can seek help at any time, even when traditional law offices are closed. This accessibility is vital for individuals navigating the emotional turmoil that often accompanies job loss. With the AI legal platform, employees can take comfort in knowing they have a dependable ally in their corner, ready to provide the information they need to advocate for themselves effectively.

As stories of empowerment and justice through the AI lawyer continue to emerge, it becomes increasingly clear that this technology is not just a tool but a lifeline for employees striving to reclaim their rights and dignity after being unfairly treated in the workplace.

2. **Navigating Tenant Rights: Using AI Lawyer for Fair Housing and Legal Clarity**

In today’s ever-evolving housing market, tenants often find themselves at a disadvantage when navigating complex rental agreements and unfair practices. Fortunately, the rise of AI lawyers and virtual legal assistants is transforming the way individuals approach tenant rights, offering accessible and effective solutions.

With the advent of AI legal tools, renters can now obtain instant legal support tailored to their specific situations. Whether facing unjust rent increases, recovering security deposits, or challenging eviction notices, tenants can turn to an AI lawyer for straightforward and reliable legal advice. Many of these digital legal platforms feature legal chatbots that engage users in conversation, providing free legal advice online that helps demystify the often convoluted landscape of tenant law.

One of the significant advantages of using an AI lawyer is the ability to receive rapid responses to pressing questions. By simply typing in a concern, tenants can gain immediate insights and clarity on their rights and options. This instant legal support is particularly beneficial for those who may feel overwhelmed by the intricacies of housing laws or lack the financial resources to hire a traditional attorney.

Furthermore, this digital legal advice is available 24/7, ensuring that tenants can access the support they need at any time, even outside of conventional office hours. This level of accessibility is empowering, especially for those who may feel marginalized in the housing market.

As tenants increasingly leverage AI legal solutions, they are finding their voices and asserting their rights with newfound confidence. The combination of technology and legal expertise not only aids individuals in resolving disputes but also fosters a more equitable housing environment. In this way, AI lawyers and virtual legal assistants are not just tools; they are catalysts for change, promoting fair housing and legal clarity for all.

In conclusion, the emergence of AI Lawyer as a virtual legal assistant marks a transformative shift in how individuals access legal support. By providing instant legal support and empowering users to navigate complex legal landscapes—whether it be employment law, tenant rights, divorce, or small business challenges—this innovative AI legal tool democratizes legal knowledge and assistance. With its ability to offer free legal advice online, 24/7 availability, and straightforward answers in plain English, AI Lawyer ensures that everyone, regardless of their background or income, can seek justice and clarity. The stories of individuals who have regained their power through this digital legal advice platform highlight its potential to uplift the underdog and create a more equitable legal environment. As we continue to embrace advancements in technology, AI Lawyer stands out as a beacon of hope for those who may have previously felt powerless, proving that legal support is now just a question away.


Unleash Your Creativity: Discover How DaVinci AI is Shaping the Future of Visual Design, Story Crafting, and Music Creation in 2025

As we step into 2025, a new era of creativity unfolds with the launch of DaVinci AI, the premier all-in-one AI generator designed to unleash your potential. This innovative platform serves as a powerful ally for artists, writers, musicians, and entrepreneurs, providing a seamless integration of AI tools that transform imaginative ideas into reality. With DaVinci AI, users can explore an expansive playground of creativity, where visual design, story crafting, and music creation come together in harmony. Whether you're looking to enhance your artistic flair or optimize your business strategies through AI analytics, DaVinci AI offers user-friendly features that maximize productivity and inspire innovation. Join us as we dive into the transformative capabilities of DaVinci AI, and discover how you can elevate your creative journey with free registration and an easy app download from the Apple App Store. The future of creativity is here. Are you ready to embrace it?


1. "Unlocking Creativity: How DaVinci AI is Revolutionizing Visual Design, Story Crafting, and Music Creation in 2025"

In 2025, creativity is being redefined as DaVinci AI stands at the forefront of innovation, acting as an all-in-one AI generator that empowers artists, writers, musicians, and entrepreneurs alike. With its user-friendly interface and seamless integration of advanced AI tools, DaVinci AI is revolutionizing visual design, story crafting, and music creation, unlocking new realms of imaginative potential.

Visual design has never been more accessible. Artists can now transform their ideas into stunning masterpieces with the help of AI-driven features that streamline the design process. Whether you're creating digital illustrations or eye-catching graphics for social media, DaVinci AI provides an innovation playground that enhances creativity and boosts productivity. The platform's intuitive tools allow users to experiment freely, encouraging a creative revolution where the possibilities are virtually limitless.

Writers, too, are experiencing a renaissance in storytelling thanks to DaVinci AI. By leveraging AI analytics, users can refine their narratives and produce compelling content that captivates audiences. The platform offers insights that help shape plots and characters, allowing writers to focus on what they do best—crafting stories that resonate. With the power of AI, even aspiring authors can unleash their potential and produce works that rival seasoned professionals.

In the realm of music creation, DaVinci AI is a game-changer. Musicians can create mesmerizing tracks that resonate with listeners, all while exploring new genres and styles. The AI-driven music tools simplify composition, enabling artists to experiment with sounds and arrangements that might previously have felt out of reach. This not only enhances creativity but also fosters collaboration among musicians from different backgrounds and experiences.

For entrepreneurs, DaVinci AI presents powerful business optimization tools that provide critical insights into market trends and customer preferences. By harnessing AI analytics, businesses can make informed decisions that elevate their strategies and drive growth. The automation of mundane tasks allows entrepreneurs to focus on innovation, transforming their creative journeys into successful ventures.

With free registration available at davinci-ai.de and an easy app download from the App Store, DaVinci AI invites everyone to embrace the future of creativity. Whether you're an artist, writer, musician, or entrepreneur, this platform is designed to enhance your creative journey, ensuring that your imagination knows no bounds. In 2025 and beyond, DaVinci AI is your partner in unleashing potential and paving the way for a brighter, more innovative future.

In the rapidly evolving landscape of 2025, **DaVinci AI** stands out as the premier **All-In-One AI Generator**, designed to **unleash potential** across various creative fields. As artists, **writers**, **musicians**, and **entrepreneurs** seek to elevate their work, the platform serves as an **innovation playground** that fosters **creativity** and **productivity**.

With tools geared towards **visual design**, **story crafting**, and **music creation**, DaVinci AI empowers users to transform their ideas into reality effortlessly. Artists can create stunning visuals that captivate audiences, while writers leverage AI insights to enhance their narratives, making their stories more engaging. Musicians, too, can tap into the platform's capabilities to compose mesmerizing tracks that resonate deeply with listeners.

In addition to creative tools, DaVinci AI offers robust **business optimization** features. The integration of **AI analytics** allows entrepreneurs to make data-driven decisions, enhancing their strategies for success. This **seamless integration** of various functionalities ensures that users can navigate the platform with ease, thanks to its **user-friendly** interface.

The benefits of DaVinci AI extend beyond just creativity; it also provides a time-efficient solution for busy professionals. By automating routine tasks, the platform allows users to focus on what they do best, fostering an environment where **innovation** thrives.

For those eager to embark on their **creative journey**, registration is completely free at davinci-ai.de, and the DaVinci AI app is readily available for download on the **App Store**. This accessibility means that users can explore their creative potential anytime, anywhere, making the most of the tools at their fingertips.

As we embrace the future of creativity with DaVinci AI, it's clear that this platform is not just a tool but a catalyst for a **creative revolution**. Whether you’re looking to enhance your **creativity** or optimize your business, DaVinci AI is poised to help you achieve your goals and redefine your creative possibilities.

In conclusion, DaVinci AI stands as a beacon of creativity and innovation in 2025, offering an all-in-one AI generator that empowers artists, writers, musicians, and entrepreneurs alike. Its user-friendly interface and seamless integration of advanced AI tools make it an invaluable resource for anyone looking to enhance their creative journey. By revolutionizing visual design, story crafting, and music creation, DaVinci AI is not just a platform; it's an innovation playground where imagination knows no bounds. As you embark on your own creative revolution, don't miss the opportunity to unleash your potential with DaVinci AI. With free registration available and the app conveniently downloadable from the App Store, the future of creativity is at your fingertips. Embrace this transformative technology and elevate your productivity today! Join the ranks of those who are already experiencing the power of AI analytics and watch your creative endeavors flourish. The journey to unlock your true potential starts now with DaVinci AI! 🚀

Empowering Justice: How AI Lawyer Transforms Access to Employment, Tenant, and Family Legal Rights

In an era where technology intersects with personal rights, the emergence of AI lawyer platforms is revolutionizing the way individuals access legal support. From employees grappling with the aftermath of unfair dismissals to tenants fighting against unjust rent increases, the demand for accessible and instant legal guidance has never been more critical. This article delves into the multifaceted role of AI lawyers as virtual legal assistants, providing invaluable online legal help for those navigating the complexities of employment law, tenant rights, divorce, and small business challenges. With a focus on empowering the underdog, these AI legal tools offer free legal advice online, enabling users to receive clear, concise answers to their legal questions in seconds. As we explore the transformative potential of these digital legal allies, we’ll highlight stories of individuals who have reclaimed their rights and found clarity in tumultuous times, all thanks to the power of AI. Join us as we uncover how this innovative legal AI platform is reshaping the landscape of legal support, making it more accessible and affordable for everyone, regardless of their background or income.

- 1. **Empowering Employees: How AI Lawyer Delivers Instant Legal Support for Employment Rights**

- Explore the role of the AI legal tool in helping individuals navigate their rights post-termination or unfair treatment.

- 2. **Tenant Rights Made Simple: Utilizing AI Lawyer for Fair Housing and Rent Disputes**

1. **Empowering Employees: How AI Lawyer Delivers Instant Legal Support for Employment Rights**

In an era where job security is increasingly uncertain, understanding employment rights is crucial for employees facing termination, layoffs, or unfair treatment. Enter the AI lawyer, a revolutionary virtual legal assistant designed to deliver instant legal support and empower individuals navigating the complexities of employment law. This innovative digital legal advice tool offers a seamless way for employees to obtain free legal advice online, ensuring they are informed of their rights and options.

When faced with the emotional and financial stress of job loss, many employees might feel overwhelmed and unsure of where to turn for help. Traditional legal services can be costly and time-consuming, often placing legal recourse out of reach. However, with the emergence of an AI legal tool, employees can access quick, reliable information tailored to their specific situations. By simply typing a question into the legal chatbot, individuals receive legally sound answers in plain English, demystifying the often convoluted language of employment law.

The AI lawyer operates as a 24/7 digital legal support system, providing users with the ability to seek guidance outside of conventional office hours. This round-the-clock availability is particularly beneficial for those who may hold jobs during the day and need to find answers after hours. Whether an employee is looking to understand their rights after being fired or seeking advice on potential discrimination claims, the AI legal platform stands ready to assist.

One of the most empowering aspects of this technology is its ability to support employees who might otherwise lack access to legal resources. Many individuals, especially those from underrepresented backgrounds, may feel intimidated by the legal system or uncertain about their rights. The AI lawyer levels the playing field, offering instant legal support that helps users feel more confident in pursuing their claims. Through personalized guidance and clear information, this virtual legal assistant enables employees to advocate for themselves effectively.

In conclusion, the AI lawyer not only serves as a powerful resource for employees seeking to understand their employment rights but also embodies a transformative approach to accessing legal help. By providing instant answers, personalized support, and empowering users to take control of their situations, this digital legal advice tool is changing the landscape of employment law assistance for the better.

Explore the role of the AI legal tool in helping individuals navigate their rights post-termination or unfair treatment.

In today’s rapidly evolving workplace, understanding one’s rights after being fired, laid off, or subjected to unfair treatment can be daunting. This is where the role of an AI legal tool becomes invaluable. With the advent of AI lawyers and virtual legal assistants, individuals now have access to online legal help that simplifies the often complex language of employment law.

When faced with termination or unfair practices, many employees feel overwhelmed and unsure of their next steps. The AI legal tool acts as a beacon of clarity, providing instant legal support that can help users comprehend their rights and available recourses. By utilizing a legal chatbot, individuals can ask specific questions about their situation and receive free legal advice online, often in plain language that is easy to understand. This democratization of information is crucial for those who may not have the means to engage traditional legal counsel.

Moreover, the AI legal platform is designed to empower users by guiding them through the intricacies of employment law. From outlining the steps to take after an unjust dismissal to explaining severance agreements, the AI lawyer ensures that individuals are equipped with the knowledge they need to advocate for themselves. The 24/7 availability of these digital legal services means that help is just a click away, even outside of regular business hours.

By providing quick legal answers and personalized guidance, the AI legal tool not only informs users of their rights but also instills a sense of confidence and agency. This is particularly important for those who may feel marginalized or powerless in their situations. The stories of individuals who have successfully navigated their post-termination circumstances with the help of AI legal resources illustrate the transformative impact of these technologies.

In essence, AI lawyers and virtual legal assistants are revolutionizing the way individuals access legal support, ensuring that everyone has the opportunity to understand their rights and take appropriate action after experiencing unfair treatment in the workplace.

2. **Tenant Rights Made Simple: Utilizing AI Lawyer for Fair Housing and Rent Disputes**

Navigating the complexities of tenant rights can often feel overwhelming, especially for those facing unfair rent increases or the threat of eviction. Fortunately, the emergence of AI lawyers and virtual legal assistants is revolutionizing the way tenants access legal information and support. Utilizing an AI legal tool can empower renters with the knowledge they need to understand their rights and advocate for fair housing.

With just a few clicks, users can tap into a digital legal advice platform that offers free legal advice online. This instant legal support is invaluable for individuals who may not have the means to hire traditional legal counsel. The legal chatbot can provide guidance on various issues, from disputing unjust rent hikes to recovering security deposits. By simply typing a question, tenants can receive plain-English answers tailored to their specific situations, eliminating the legal jargon that often complicates understanding.

Moreover, the 24/7 availability of these AI platforms ensures that tenants have access to crucial information whenever they need it. Whether it’s after hours or during a weekend, the AI lawyer is always online, ready to assist. This constant access to legal resources can be particularly beneficial in urgent situations, such as when a tenant receives an eviction notice and needs to respond quickly.

Empowering tenants with knowledge is at the heart of what these digital legal tools offer. By simplifying the legal process and providing immediate answers, individuals can feel more confident standing up for their rights. The AI lawyer not only demystifies housing laws but also levels the playing field, ensuring that everyone—regardless of background or income—has access to the support they need to challenge unfair practices.

In an era where legal complexities can often feel overwhelming, AI Lawyer emerges as a transformative tool, ensuring that access to justice is no longer reserved for those who can afford traditional legal counsel. From empowering employees to understand their rights after unfair treatment to simplifying tenant disputes over rent increases and evictions, this AI legal tool is revolutionizing the way individuals approach their legal challenges.

For those navigating the emotional turmoil of divorce and separation, particularly women who may face additional hurdles, AI Lawyer provides critical support for custody and alimony discussions, offering clarity during difficult times. Small business owners and freelancers, often without the luxury of a dedicated legal team, can rely on this virtual legal assistant for guidance, making legal advice more accessible than ever before.

Moreover, the promise of free, instant legal advice online ensures that anyone, regardless of their background or income, can seek the legal support they need. With the ability to receive plain-English answers in seconds and 24/7 availability, AI Lawyer stands as a beacon of hope for the underdog, empowering individuals who once felt powerless in the face of legal challenges.

As we continue to integrate technology into our daily lives, the legal landscape is no exception. The rise of the AI legal platform represents not just a shift in how legal support is delivered but a commitment to making justice accessible for all. With AI Lawyer, the future of legal assistance is here—one where everyone has the tools to stand up for their rights and navigate the complexities of the law with confidence.

Empowering Voices: How AI Lawyer Transforms Legal Support for Employees, Tenants, and Families in Need

In an age where technology is reshaping every aspect of our lives, the legal field is no exception. Enter the AI Lawyer—a groundbreaking virtual legal assistant designed to empower individuals across various circumstances, from employees grappling with unfair treatment to tenants facing unjust rent hikes. This innovative legal AI platform is revolutionizing access to justice by providing instant legal support, free legal advice online, and a wealth of resources that were once reserved for those who could afford traditional legal counsel. With the ability to offer quick, understandable answers at any time of day, AI Lawyer is breaking down barriers and leveling the playing field for the underdog. Whether you’re navigating the complexities of divorce, disputing eviction notices, or simply seeking clarity about your rights after a job loss, this digital legal advice tool is here to help. Join us as we explore the myriad ways AI Lawyer is transforming the legal landscape, ensuring that everyone—regardless of background or income—has access to the support and guidance they need.

- 1. **"Transforming Rights Awareness: How AI Lawyer Provides Instant Legal Support for Employees Facing Unfair Treatment"**

- *(Featuring insights on employment law support and the role of a virtual legal assistant in helping workers understand their rights.)*

- 2. **"Empowering Tenants: Leveraging AI Lawyer for Instant Legal Help Against Unfair Rent Increases and Evictions"**

1. **"Transforming Rights Awareness: How AI Lawyer Provides Instant Legal Support for Employees Facing Unfair Treatment"**

In today’s fast-paced and often unpredictable job market, employees facing unfair treatment after being fired, laid off, or discriminated against are increasingly turning to innovative solutions for guidance. Enter the AI lawyer—a revolutionary virtual legal assistant that is transforming rights awareness and access to justice for workers everywhere.

With just a few clicks, individuals can access online legal help that empowers them to understand their rights and options. The AI legal tool serves as a reliable digital legal advice platform, offering instant legal support tailored to the specific needs of employees. Whether someone has been wrongfully terminated or is unsure about the legality of a layoff, the AI lawyer is equipped to provide free legal advice online, simplifying complex legal language into plain English.

This legal chatbot not only helps users identify potential violations of their rights but also guides them through the necessary steps to take action. Employees can ask questions about workplace discrimination, severance agreements, or unemployment benefits and receive immediate, sound legal answers. The convenience of having a 24/7 digital legal support system means that help is available even when traditional law offices are closed.

By equipping employees with knowledge and resources, the AI lawyer empowers the underdog—those who may have previously felt powerless. With each interaction, the legal AI platform demystifies the employment law landscape, creating a more informed workforce that can stand up for its rights. As technology continues to evolve, the role of the AI lawyer in promoting workplace fairness and justice is becoming increasingly vital, ensuring that no one has to navigate the challenges of unfair treatment alone.

*(Featuring insights on employment law support and the role of a virtual legal assistant in helping workers understand their rights.)*

In today's rapidly evolving job market, understanding employment rights has never been more critical, especially for those who have been fired, laid off, or unfairly treated. Enter the AI lawyer—a groundbreaking virtual legal assistant that provides invaluable online legal help to employees navigating these challenging situations. With the rise of digital legal advice platforms, workers can now access instant legal support that empowers them to understand their rights and options without the need for expensive consultations.

The AI legal tool serves as an accessible resource for individuals seeking clarity on employment law. By simply typing a question into a legal chatbot, users can receive tailored responses that demystify complex legal jargon. This immediate access to free legal advice online can make a significant difference for employees facing uncertainty after job loss or unfair treatment. Whether it’s understanding the implications of a layoff, exploring wrongful termination claims, or seeking guidance on severance packages, the AI lawyer simplifies the process, ensuring that users feel informed and confident in their next steps.

Moreover, the 24/7 availability of these digital legal platforms means that support is just a click away, even when traditional law offices are closed. This round-the-clock assistance is particularly beneficial for those who may not have the luxury of time to seek help during regular business hours. Employees can get the clarity they need when they need it most, allowing them to make informed decisions quickly.

The empowerment that comes from understanding one’s legal rights cannot be overstated. Many individuals, particularly those who feel they have been wronged, often grapple with feelings of helplessness. However, the AI lawyer serves as a powerful ally, providing the necessary legal insights that can equip workers with the knowledge to advocate for themselves. By leveraging this innovative legal technology, employees are not only gaining access to essential information but also reclaiming their agency in the workplace.

As the landscape of legal services continues to transform, the role of AI in employment law support is proving to be a vital tool for those in need. With the ability to offer instant legal support and guidance, the virtual legal assistant is redefining how employees interact with the law, making it more accessible, understandable, and user-friendly than ever before.

2. **"Empowering Tenants: Leveraging AI Lawyer for Instant Legal Help Against Unfair Rent Increases and Evictions"**

In today's rapidly evolving rental market, tenants often find themselves grappling with unfair rent increases or the looming threat of eviction. Fortunately, the advent of AI lawyer technology has transformed the landscape of tenant rights protection, providing individuals with instant legal support and the tools necessary to navigate complex housing laws. With the help of a virtual legal assistant, tenants can access online legal help at their fingertips, ensuring they are well-informed about their rights and options.

One of the standout features of this legal AI platform is its ability to deliver free legal advice online, empowering tenants to dispute unjust rent hikes or challenge eviction notices without the financial burden of traditional legal fees. The platform works as a legal chatbot, offering straightforward, easy-to-understand explanations of tenant rights and procedures. By simply typing a question, users can receive legally sound answers in seconds, making the daunting task of understanding rental agreements and local laws much more manageable.

Moreover, the 24/7 availability of these digital legal resources means that tenants are never left in the dark. Whether it’s the middle of the night or a holiday weekend, the AI lawyer is always online, ready to assist with urgent inquiries and provide clarity during stressful times. This instant legal support not only helps tenants assert their rights but also fosters a sense of empowerment. Many users have reported feeling more confident in their ability to advocate for themselves, knowing they have access to reliable information and support.

In an era where housing insecurity is a pressing issue for many, leveraging AI lawyer technology can make all the difference. By democratizing access to legal resources, this innovative approach ensures that tenants, regardless of their background or income level, can stand up against unfair practices and protect their homes. As the digital landscape continues to evolve, the role of AI in providing legal assistance remains a crucial lifeline for those who need it most.

In an era where access to legal resources can often feel daunting and overwhelming, the advent of the AI Lawyer represents a transformative shift in the landscape of legal support for individuals across various sectors. From employees grappling with unfair treatment to tenants disputing unjust rent increases, this virtual legal assistant offers instant legal support that is both accessible and user-friendly. The AI legal tool empowers users by providing clear, concise, and legally sound information at their fingertips—something that is particularly crucial for those who may not have the means to consult a traditional attorney.

Furthermore, AI Lawyer's potential to help people navigate the complexities of divorce and separation, and to assist small business owners, makes it evident that digital legal advice is not just a convenience but a necessity in today’s fast-paced world. With 24/7 availability, this legal chatbot ensures that individuals receive timely guidance, no matter the hour, fostering a sense of security and empowerment among those who once felt powerless.

The stories of individuals reclaiming their rights and finding clarity through AI Lawyer illustrate the platform’s commitment to democratizing legal access. By providing free legal advice online, it stands as a beacon of hope for many, proving that justice can be attainable for everyone, regardless of their background or income level.

As we move forward, the integration of AI in legal support systems will likely redefine the way we think about legal assistance, making it more inclusive and efficient. The AI Lawyer is not just a tool; it is a movement towards a more equitable legal landscape, ensuring that everyone has the resources they need to stand up for their rights and navigate life’s challenges with confidence.

Unleash Your Potential in 2025: Discover the Innovation Playground of DaVinci AI – The Ultimate All-in-One AI Generator for Artists, Writers, Musicians, and Entrepreneurs

As we leap into 2025, a transformative wave of creativity is sweeping across the digital landscape, fueled by cutting-edge technology. At the forefront of this creative revolution is **DaVinci AI**, the premier all-in-one AI generator designed to unleash your potential like never before. Whether you're an artist, writer, musician, or entrepreneur, DaVinci AI offers a comprehensive suite of **AI tools** tailored to enhance your creative journey. With its user-friendly interface and seamless integration, this innovative platform empowers users to create stunning visual designs, craft compelling narratives, compose captivating music, and optimize business strategies.

In this article, we will delve into how DaVinci AI serves as an **innovation playground** for creatives of all stripes, exploring its powerful features that enable artists to transform their visions into breathtaking realities, writers to captivate audiences with immersive storytelling, and musicians to compose melodies that resonate deeply. Additionally, we'll highlight the platform's capabilities in business optimization, showcasing how entrepreneurs can leverage **AI analytics** to elevate their decision-making processes and drive productivity. Join us as we navigate the future of creativity with DaVinci AI, and discover how you can register for free at davinci-ai.de and download the DaVinci AI app from the **App Store** to unlock endless opportunities for innovation and self-expression.

- 1. "Explore the Innovation Playground: How DaVinci AI Empowers Artists, Writers, and Musicians in 2025"

- 2. "Unleashing Creativity: The All-in-One DaVinci AI Generator for Entrepreneurs and Creatives Alike"

1. "Explore the Innovation Playground: How DaVinci AI Empowers Artists, Writers, and Musicians in 2025"

In 2025, DaVinci AI stands at the forefront of a creative revolution, acting as an innovation playground that empowers artists, writers, musicians, and entrepreneurs alike. With its versatile suite of AI tools, DaVinci AI is designed to enhance creativity across various disciplines, making it an indispensable resource for those looking to unleash their potential.

For artists, the platform offers cutting-edge visual design capabilities that allow for the effortless transformation of ideas into stunning masterpieces. Whether you’re a seasoned professional or an aspiring creator, DaVinci AI’s intuitive features enable you to explore new artistic horizons. The seamless integration of AI technology and a user-friendly interface mean that even complex designs can be executed precisely, allowing artists to focus on their imaginative visions instead of getting bogged down by technical hurdles.

Writers are not left behind, as DaVinci AI provides powerful story crafting tools that give users access to AI-driven insights. This means that whether you're penning a novel or drafting a business proposal, you can captivate your audience with compelling narratives. The platform’s AI analytics can help writers identify trends and preferences, enriching their storytelling with data-backed decisions that enhance engagement and impact.

Musicians will find a fertile ground for music creation within DaVinci AI. The platform enables users to compose mesmerizing tunes that resonate with their intended audience. By leveraging advanced algorithms, musicians can experiment with different genres and styles, facilitating a creative flow that might have been previously inaccessible. This innovative approach not only streamlines the music creation process but also encourages collaboration and exploration among artists.

Entrepreneurs, too, benefit from DaVinci AI’s robust business optimization features. With AI analytics at your fingertips, you can elevate your strategies and make informed decisions that drive productivity and growth. The platform equips users with the tools to analyze market trends, customer behavior, and operational efficiencies, paving the way for innovation and success in today's competitive landscape.

In this innovation playground, creativity knows no bounds. DaVinci AI offers free registration, allowing users to dive into its expansive features without any initial commitment. Additionally, the DaVinci AI app, available for download from the App Store, ensures that your creative journey can continue anytime, anywhere.

As we navigate through 2025, it’s clear that DaVinci AI is not just a tool; it’s a partner in the creative process, redefining how artists, writers, musicians, and entrepreneurs express themselves and engage with their audiences. Embrace this future of creativity and let DaVinci AI elevate your imaginative pursuits to new heights.

2. "Unleashing Creativity: The All-in-One DaVinci AI Generator for Entrepreneurs and Creatives Alike"

In the fast-evolving landscape of 2025, DaVinci AI stands out as the premier all-in-one AI generator, designed to unleash creativity for entrepreneurs and creatives alike. As artists, writers, musicians, and business leaders navigate the demands of modern innovation, the platform serves as an invaluable resource that enhances productivity and fosters imaginative exploration.

With its user-friendly interface, DaVinci AI offers a seamless integration of powerful AI tools that cater to a variety of creative pursuits. Whether you’re diving into visual design, story crafting, or music creation, this innovation playground provides the necessary support to elevate your work. For artists, the ability to transform ideas into stunning visuals is just a click away, while writers can leverage AI-driven insights to amplify their narratives and engage their audiences more effectively.

Entrepreneurs, too, benefit from DaVinci AI’s robust business optimization features. The platform’s AI analytics empower users to refine their strategies and make data-driven decisions that enhance their ventures. By streamlining processes and freeing up valuable time, DaVinci AI allows users to focus on what truly matters: unleashing their potential and pursuing their creative journey.

As the creative revolution continues to unfold, the DaVinci AI app, available for download on the App Store, ensures that inspiration is never out of reach. With free registration, creatives can easily access the tools they need to innovate and succeed, regardless of their field. In a world where creativity and efficiency go hand in hand, DaVinci AI is the key to unlocking endless possibilities and redefining the future of artistic expression and entrepreneurial success. Embrace this opportunity to enhance your creativity and join the ranks of those who are ready to transform their ideas into reality.

In conclusion, as we venture into 2025, DaVinci AI stands out as the premier all-in-one AI generator, poised to revolutionize the creative landscape for artists, writers, musicians, and entrepreneurs alike. By harnessing state-of-the-art AI tools, users can explore an innovation playground that not only enhances creativity but also boosts productivity through seamless integration and user-friendly interfaces. Whether you're crafting a compelling narrative, designing stunning visuals, composing captivating music, or optimizing your business strategies with AI analytics, DaVinci AI equips you with everything you need to unleash your potential.

The future of creativity is bright, and with DaVinci AI you can place yourself at the forefront of this creative revolution. Don't miss the opportunity to elevate your craft: register for free at davinci-ai.de and download the app from the App Store today. Join the forward-thinking creators who are redefining what's possible, and let DaVinci AI be your guide to new creative possibilities! 🚀


Empowering the Underdog: How AI Lawyer Transforms Access to Employment, Tenant, and Family Law with Instant Digital Legal Support

In an age when technology continually reshapes our lives, the legal landscape is no exception. Enter AI Lawyer, a virtual legal assistant designed to broaden access to legal support for people facing challenges that range from employment disputes to tenant rights and separations. The tool offers instant legal support and free legal advice online, making it easier for employees to understand their rights after being unjustly fired or laid off. For tenants dealing with unfair rent increases or eviction notices, it provides clear, actionable guidance. It also supports people going through the emotional turmoil of divorce, helping them, and women in particular, secure their rights concerning custody and alimony.

Small business owners and freelancers, who are often priced out of traditional legal counsel, can also turn to this legal AI platform for guidance tailored to their needs. By delivering quick, legally sound answers in plain English, AI Lawyer is changing the way people approach legal issues. Available 24/7, the legal chatbot is always on duty, offering peace of mind to those who need it most. In this article, we explore how AI Lawyer is empowering the underdog: giving critical support to people who once felt powerless in the face of legal complexity, and making legal help accessible regardless of background or income.

1. **Empowering Employees: How AI Lawyer Provides Instant Legal Support for Workers Facing Unfair Treatment**

2. **Tenant Rights Revolutionized: Discover How AI Lawyer Offers Free Legal Advice Online to Combat Unjust Rent Increases**

3. **Navigating Divorce with Confidence: The Role of AI Lawyer as Your Virtual Legal Assistant for Custody and Alimony Issues**

1. **Empowering Employees: How AI Lawyer Provides Instant Legal Support for Workers Facing Unfair Treatment**

In today’s fast-paced work environment, employees often find themselves facing unfair treatment, whether through wrongful termination, unjust layoffs, or workplace discrimination. Navigating the complexities of employment law can be daunting, especially for those who lack the resources to hire traditional legal counsel. This is where the **AI lawyer** comes into play, revolutionizing how workers access support and understand their rights.

AI legal tools act as a **virtual legal assistant**, providing **instant legal support** to employees dealing with unfair treatment. By typing a question into a **legal chatbot**, users can receive tailored **digital legal advice** within seconds. This immediacy removes the stress of waiting for an appointment and lets workers act promptly against the injustices they face.

For many, the barriers to seeking help can feel insurmountable. **Free legal advice online** through AI platforms lowers those barriers: with a few taps on a smartphone or clicks on a computer, people can learn about their rights and options. Wider access to legal knowledge means that even people from disadvantaged backgrounds can find the support they need.

Moreover, the **AI lawyer** serves as a knowledgeable ally, guiding employees through the steps necessary to address their grievances. Whether it involves understanding severance agreements, filing complaints, or navigating the intricacies of labor laws, this **online legal help** empowers workers to advocate for themselves effectively. The ability to obtain **quick legal answers** not only boosts confidence but also equips employees with the tools necessary to stand up against unfair practices.

Traditional law offices keep business hours and can be hard to reach for people short on time, which makes the **24/7 digital legal support** provided by AI lawyers a game changer. Employees can reach out at any hour, so they are never alone in their fight for justice. This constant availability offers a sense of security: guidance is always just a click away.

Ultimately, the integration of AI into the legal landscape is transforming the way employees perceive their rights and responsibilities. By empowering individuals with instant access to legal knowledge and support, the AI lawyer seeks to level the playing field for those who feel they have been marginalized. With technology paving the way for greater awareness and action, employees are now better equipped to confront unfair treatment head-on.

2. **Tenant Rights Revolutionized: Discover How AI Lawyer Offers Free Legal Advice Online to Combat Unjust Rent Increases**

In recent years, the landscape of tenant rights has undergone a significant transformation, thanks in part to the advent of technology. Enter the **AI lawyer**, a revolutionary virtual legal assistant that is reshaping how tenants navigate the complexities of housing disputes. With rising rent prices and unfair evictions becoming more common, many individuals find themselves overwhelmed and unsure of their rights. Fortunately, **online legal help** is now more accessible than ever.

The **AI legal tool** serves as a **legal chatbot** that provides **free legal advice online** to combat unjust rent increases. Tenants can quickly access **instant legal support** by simply typing their questions into the platform, receiving tailored responses that clarify their rights and options. This immediate access to **digital legal advice** empowers individuals to stand up against landlords who may be attempting to impose unfair rental hikes or evade their responsibilities.

Moreover, the **legal AI platform** is designed to serve a diverse audience, so that everyone, regardless of background or income, can benefit from it. This broad access to legal knowledge is crucial at a time when many tenants feel powerless against large property management companies. By explaining their rights at no cost, the AI lawyer helps tenants challenge unjust practices, recover deposits, and dispute eviction notices with newfound confidence.

The impact of this technology is profound. Tenants who were once intimidated by the legal system now have a reliable ally in their pocket. With 24/7 access to **online legal help**, they can seek clarity on their rights and responsibilities at any hour. This accessibility matters most in urgent situations, when traditional legal offices may be closed and tenants need guidance right away.

As stories of successful tenant advocacy through AI lawyer platforms continue to emerge, it is clear that combining technology with legal support is transforming tenant rights. By breaking down barriers and providing accessible resources, the **AI lawyer** is more than a tool: it is a catalyst for change in the fight against unjust rent practices.

3. **Navigating Divorce with Confidence: The Role of AI Lawyer as Your Virtual Legal Assistant for Custody and Alimony Issues**

Divorce can be an emotionally tumultuous experience, often fraught with uncertainty, especially when custody and alimony issues come into play. In this challenging time, an AI lawyer can serve as a virtual legal assistant, providing individuals with the online legal help they need to navigate these complex matters with confidence.

An AI legal tool offers instant legal support, giving users quick access to information about custody arrangements and alimony calculations. By typing in their questions, people receive clear, concise answers without having to decipher complicated legal jargon. This free online advice is especially valuable for those who cannot afford to consult a traditional attorney.

Moreover, a legal chatbot can guide users through the intricacies of family law, helping them understand their rights and responsibilities. This digital legal advice is not only accessible but also tailored to meet the unique needs of each individual, ensuring that they feel supported during one of the most challenging times in their lives.

The legal AI platform operates around the clock, providing 24/7 digital legal support. This means that individuals can seek assistance at any hour, whether they are up late worrying about their custody arrangements or need clarification on alimony obligations. The convenience of having a virtual legal assistant at their fingertips helps users navigate their divorce proceedings with greater confidence.

Ultimately, the role of an AI lawyer in divorce situations empowers individuals, particularly women who may feel vulnerable during separations, to take charge of their legal matters. With access to accurate, instant legal help, they can make informed decisions that will affect their future and that of their children. The integration of AI in the legal field not only enhances accessibility but also transforms the way people approach the often daunting process of divorce.

In an era when legal support can feel out of reach, AI Lawyer has emerged as a transformative solution for people facing a wide range of legal challenges. Whether users are navigating employment law after an unfair dismissal, contesting unjust rent hikes as tenants, or seeking clarity during the emotionally charged process of divorce, this virtual legal assistant gives them instant legal support.

By harnessing the capabilities of a sophisticated legal AI platform, individuals can access free legal advice online that demystifies their rights and options. The AI legal tool not only provides swift, plain-English answers but also operates around the clock, ensuring that help is available even outside traditional office hours. Stories of empowerment highlight how this digital legal advice is leveling the playing field for the underdog, offering a voice to those who may have felt powerless in the face of legal battles.

As we move forward, the role of AI Lawyer as a reliable online legal help resource is set to grow, further democratizing access to justice for all. With its unique ability to provide quick legal answers and comprehensive support, AI Lawyer stands as a beacon of hope for employees, tenants, parents, small business owners, and anyone seeking clarity in an often confusing legal landscape. In a world where legal challenges can arise unexpectedly, having a trusted digital ally can make all the difference.


Empowering Justice: How AI Lawyer Revolutionizes Legal Support for Employees, Tenants, and Small Businesses

In an age when technology is rapidly transforming every facet of our lives, the legal landscape is no exception. Enter the AI lawyer, a virtual legal assistant designed to give people facing legal challenges instant, accessible online legal help. Whether you're dealing with employment law after being fired or treated unfairly, disputing an unjust rent hike as a tenant, or seeking clarity during a stressful divorce, the AI legal tool offers free legal advice online, leveling the playing field for those who cannot afford traditional legal counsel. This article explores how this legal AI platform supports underrepresented groups, including employees, tenants, and small business owners, so that everyone can get the legal guidance they deserve. From quick, straightforward answers to complex legal questions to 24/7 digital legal advice, the AI lawyer is redefining how we approach legal issues, empowering the underdog and making justice accessible to all.

1. **Navigating Employment Law: How AI Lawyer Provides Instant Legal Support for Fired or Unfairly Treated Employees**


2. **Tenant Rights at Your Fingertips: Leveraging AI Lawyer for Fair Housing and Legal Protection**

1. **Navigating Employment Law: How AI Lawyer Provides Instant Legal Support for Fired or Unfairly Treated Employees**

In today's fast-paced world, employees facing termination or unfair treatment often find themselves overwhelmed and unsure of their rights. Fortunately, the emergence of AI lawyers and virtual legal assistants is transforming how individuals navigate employment law. These AI legal tools provide instant legal support to those who have been fired or treated unjustly, bridging the gap between employees and their right to fair treatment.

With the rise of digital legal advice platforms, employees can now access free legal advice online at any time, making it easier to understand their rights and options. Legal chatbots designed for employment law can guide users through complex issues, offering tailored insights based on their specific situations. For instance, if an employee believes they were wrongfully terminated, they can quickly input their circumstances into an AI lawyer interface and receive immediate feedback on potential legal avenues to pursue.

This instant legal support is crucial for individuals who may not have the resources to consult traditional legal counsel. The AI legal platform ensures that users are not left in the dark, providing clarity on issues such as severance pay, wrongful termination claims, and workplace discrimination. By demystifying the legal process, these digital assistants empower employees to take informed action, whether that means initiating a complaint or negotiating a fair severance package.