Google Lens Evolution: Navigating the Future of Visual Search with AI and Multimodal Capabilities


Google's Visual Search Enhancements Answer More Intricate Queries

Introduced in 2017, Google Lens transformed how we interact with the world through our smartphones. By simply aiming your phone's camera at an item, Google Lens identifies it, provides relevant information, and in some cases, offers a purchase option. This groundbreaking search method eliminated the need for typing out lengthy descriptions of objects in your vicinity.

Lens showcased Google's strategy of using its artificial intelligence and machine learning expertise to extend its search engine across more platforms. As Google improves the core generative AI technology behind the concise summaries it now provides for text queries, Lens's image-based search is advancing in parallel. The company has announced that Lens, which currently handles roughly 20 billion searches per month, will expand to support video and multimodal searches.

A recent update to Lens also surfaces more shopping information in search results. Shopping is a primary use of Lens, much like the visual search tools on Amazon and Pinterest that are designed to encourage purchases. Previously, searching for something like your friend's sneakers with Google Lens would return a selection of similar products. With the new update, Google says Lens will also show direct purchase links, customer reviews, editorial reviews, and product comparison tools.

Lens search has also become multimodal, a term currently trending in artificial intelligence: users can now search with a combination of video, images, and voice. Instead of pointing their smartphone camera at an object, tapping the screen to focus, and waiting for the Lens app to return results, people can point the camera while asking a question aloud, such as “What type of clouds are these?” or “What brand are these sneakers, and where can I buy them?”

Lens is also expanding beyond recognizing objects in still photos to analyzing live video. If you run into a problem like a malfunctioning record player, or notice a blinking light on a faulty appliance at home, you can record a short video with Lens and then receive generative AI advice on how to fix it.

First announced at Google's I/O conference, this feature is still being tested and is available only to people who have opted in to Google's Search Labs, according to Rajan Patel, an 18-year Google veteran and one of the founders of Lens. The other new Lens features, including voice mode and the expanded shopping options, are rolling out to a wider audience.

Google's "video understanding" capability is interesting for several reasons. For now it works only on live video, but if Google extends it to pre-recorded footage, vast collections of video, whether in a person's own gallery or in an enormous archive like Google's, could become taggable and overwhelmingly shoppable.

Another point to consider is how closely Lens resembles Google's upcoming Project Astra, slated for release later this year. Like Lens, Astra uses multiple forms of input to interpret your surroundings through your smartphone. When Google demonstrated Astra in the spring, it also showed off a prototype pair of smart glasses.

In a separate announcement, Meta recently laid out its ambitious vision for the future of augmented reality, in which ordinary people wear glasses that can intelligently interpret their surroundings and display holographic interfaces. The concept isn't entirely new, since Google ventured into similar territory with Google Glass, although the technology Meta is proposing differs significantly from Google's approach. That raises the question of whether the latest Lens upgrades, together with Astra, could pave the way for a new kind of smart glasses.

When asked, Patel was noncommittal, calling Meta's announcement "intriguing" and noting that Lens originated in Google's now-defunct Daydream team, which focused mainly on virtual reality (VR) rather than augmented reality (AR).

Patel mentions, "We're constantly exploring ways to simplify the process for individuals to inquire, seeking methods to provide answers more fluidly, and determining the essential features we must develop. Each of these elements is a fundamental component."

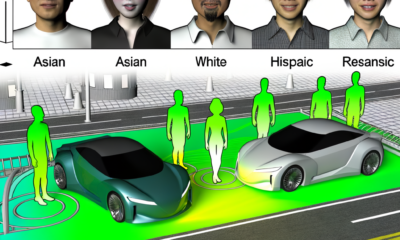

Finally, the ability to record video of one's surroundings and instantly query a global information database raises significant and worrisome privacy concerns. A team of students has reportedly modified Meta's off-the-shelf smart glasses to add facial recognition, allowing them to identify strangers.

If you use Google Lens on your phone to record live video of people dancing in a park, or perhaps protesting in the street, how does Lens process that data? Can it identify the people in the video?

Patel says Lens's primary approach is to understand the scene by analyzing it frame by frame. The aim is largely to ignore people's faces and focus on elements such as the setting, any music that might be playing, or, in some cases, the clothes people are wearing. (The mantra being: always be shopping.)

Lens can be configured to largely ignore faces, but it is still a visual search tool that works by capturing photos and videos that may include people. As Lens gets better at answering our questions, it also poses a significant question to its users: how far can it be trusted?


© 2024 Condé Nast. All rights reserved. WIRED may receive a share of revenue from products purchased through our site as part of our affiliate partnerships with retailers. Content from this website may not be copied, shared, broadcast, stored, or otherwise used without explicit written consent from Condé Nast.


Digital Deception: The Rise of AI-Generated ‘Influencers’ and the Shadow Industry Profiting from Pirated Personas

Exploring the Expanding Industry of AI Exploitation

Instagram has become flooded with AI-generated influencers that take video content from real models and adult content creators, swap their faces for AI-generated ones, and then profit from the results by linking to dating platforms, Patreon, OnlyFans alternatives, and a range of AI apps.

First reported by 404 Media in April, this trend has rapidly gained traction, illustrating Instagram's apparent inability or unwillingness to stem the flood of AI-generated material on its platform. That flood threatens real creators on Instagram, who say that competing with AI-generated content is hurting their livelihoods.

Based on our review of more than a thousand AI-generated Instagram accounts, Discord communities where creators trade tips and strategies, and several guides to making money through "AI pimping," we found that it has become remarkably easy to set up these accounts and monetize them with readily available AI software and apps. Some of these apps are available on the Apple App Store and the Google Play Store. Our research shows that what was once a minor problem on these platforms has grown to an industrial scale, hinting at a possible future in which social media is dominated by AI-generated content rather than human-created posts.

Elaina St James, an adult content creator who shares her work on Instagram, said she finds herself in direct competition with fake AI accounts, which often feature content stolen from adult performers and Instagram influencers. She said that although changes to Instagram's algorithm may also play a role, the surge of AI-generated influencer accounts on the platform has significantly reduced her visibility. Her content used to draw between 1 million and 5 million views a month, but for the past 10 months it hasn't topped a million, and it sometimes dips below 500,000.

St James said in an interview, "This could be a key factor behind the decline in my viewership," attributing the drop to competition from accounts she considers inauthentic.

This piece was produced in collaboration with 404 Media, a journalist-owned outlet that covers the effect of technology on people.

Alexios Mantzarlis, who leads the Security, Trust, and Safety Initiative at Cornell Tech and previously served as principal of trust and safety intelligence at Google, compiled the list of roughly 900 accounts that 404 Media analyzed for this story. Mantzarlis began investigating AI-generated influencer accounts after stumbling on one while browsing Instagram, intrigued by what they might reveal about how AI-generated content is steering social media, and the internet at large, toward an ever-greater blending of reality and fiction. He said he could easily have found another 900 accounts, and that the only obstacle was Instagram restricting the account he was using to search the platform.

"This could be an indication of what social media will look like within the next five years," Mantzarlis said in an interview. "Given that this trend might extend beyond Instagram's world of attractive people, it's likely an ominous hint of a grim outlook."

Analysis of AI-Generated Influencer Profiles

Among the more than a thousand AI-run Instagram accounts we examined, approximately 10% posted deepfake videos. These videos replaced the original faces in existing footage, commonly of models or adult performers, with AI-generated faces, creating the impression of new, original content that matched the rest of the AI-generated posts on those accounts. The remaining 90% or so posted entirely AI-generated images. Some of these images were based on real photographs and others were designed to resemble celebrities, but none involved altering existing photos or videos.

Of the 100 accounts posting deepfake or face-swapped content, 60 openly disclose their use of AI, describing themselves in their bios as “virtual model & influencer” or noting “all photos crafted with AI and apps.” The other 40 carry no indication or disclaimer that their content is AI-generated.

One of the most prominent of these accounts, "Chloe Johnson," a verified Instagram account with 171,000 followers, was removed by Meta in recent weeks.

Using Google Lens to search for matching images online, 404 Media identified the original videos behind nine of Johnson's Instagram posts. The search revealed that whoever runs Johnson's account has been swapping the AI influencer's face onto videos of real women, including models such as Tana Rain, Skyler Simpson, and Kyla Yesenosky. Some videos were also taken from lesser-known TikTok and Instagram creators with small followings, such as Ulia Nova and Annabella Sinclair. We also found that face-swapping accounts have been lifting footage from swimwear fashion shows and from iStock, the Getty-owned library of stock images and videos.

Johnson's Instagram links to a Fanvue account. Fanvue is a platform similar to OnlyFans, where fans pay to subscribe to a content creator's page. There, Johnson says subscribers can pay for exclusive access to uncensored photos and explicit videos. We found several other AI influencers also using Fanvue to monetize their posts. Johnson's Instagram also links to another site that sells individual nude images and adult videos for prices ranging from $3 to $22.

As with many content-driven hustles, the sector is crowded with people trying to get rich, partly with the help of tutorials and courses sold by those who have successfully run AI influencers. 404 Media obtained two such resources: a PDF guide called Instagram Mastery from an AI influencer agency known as Digital Divas, and a course called AI Influencer Accelerator, created by someone going by the name Professor EP. Professor EP claims to run the Emily Pellegrini AI influencer Instagram account, which has 253,000 followers.

Emily Pellegrini, who also served as a judge in the inaugural “Miss AI” competition held in collaboration with Fanvue, was dubbed the “World’s Hottest Model” by The Daily Mail in January. Following that attention, the person behind the Emily Pellegrini Instagram shifted from posting as Emily Pellegrini to adopting the persona of Professor EP, focusing on selling guides to what they called “AI Pimping.” Professor EP claims to have earned more than $1 million in six months. Around the time of the Miss AI contest in July, he reported having earned $100,000 from Fanvue alone while running the Emily Pellegrini account, a claim Fanvue appears to have endorsed by featuring it on the Miss AI website.

A spokesperson from Fanvue confirmed to 404 Media that Emily indeed generated income through Fanvue. The spokesperson further clarified, "Fanvue is not connected to the course advertised on Instagram nor does it have a substantial relationship with Emily’s marketing team and their choices. Although Emily maintains a verified and compliant account on Fanvue, her activity on the platform is minimal. This is indicative of her team's choice to pursue an alternative marketing approach, one that Fanvue does not endorse."

Fanvue said it plans to remove Emily Pellegrini's image from future Miss AI competitions as part of a revamp of the award. According to the Fanvue website, the platform prohibits pirated and deepfake content. Fanvue also said it uses a moderation tool called Hive Moderation and a dedicated compliance team that regularly performs manual reviews to catch deepfaked material.

Instagram did not respond to a detailed list of questions about this report, but it removed two of the four accounts we flagged as using face swapping to steal from other content creators. The company said it would take action against AI-manipulated content that violates its Community Standards, which require users to post only “photos and videos that they've captured or possess the authority to distribute,” and which also let users report accounts suspected of impersonation.

Instagram has said it will act on accounts only when a complaint is filed by the copyright holder or by someone authorized to act on their behalf, such as a lawyer or agent. St James said this approach hasn't worked for her, and that it's a common problem for creators because tracking down fake accounts is overwhelming. Reporting these accounts can also backfire and lead Instagram to shut down adult creators' genuine accounts. Finding videos stolen from an influencer is difficult as well, because reverse-image search tools don't work reliably on video and tools like Google Lens return inconsistent results. Identifying pirated videos often depends on an influencer, or their followers, recognizing the influencer's own body with an AI-generated face superimposed on it.

The "Instagram Mastery" guide by Digital Divas is priced at $50, offering a blend of practical advice and strategies for social influence.

The Digital Divas guide addresses what it describes as a common misconception among people running AI virtual women: they believe they are in the adult entertainment industry and that the money comes simply from nudity. That notion is completely wrong, the guide states. The real business, it says, is loneliness. Countless men on social media are trying to fill a void of isolation and are willing to go to great lengths to ease that feeling. The key to success, according to the guide, is creating a unique personal connection, so that the audience doesn't just want generic content but specifically wants to engage with your persona. This, it argues, is the genuine route to success.

Digital Divas consists of three AI influencers. Aika Kittie, one of them, told 404 Media that each influencer account is run by a different person, but declined to name the people behind the accounts, saying only that they live in the US. "Although we're all for being open about the artificial aspect of these AI personas, we also think it's important to keep some elements of secrecy," Aika explained.

The Digital Divas guide recommends a set of readily available tools popular among AI art creators. Many of the AI-generated accounts we examined appear to use, and promote, an app called HelloFace, which until recently was available on both the Apple App Store and the Google Play Store.

Each tool plays a specific role in the workflow. Many tutorials recommend generating a face in Leonardo, then using another AI tool to enhance it and smooth out imperfections. Images can then be generated with AI image-generation apps, and the AI-created face can be swapped onto those images with various face-swap apps.

Beyond this assortment of tools and guides explaining how to combine them into an AI-generated influencer, several platforms now offer end-to-end services for both creating and monetizing these digital personas. Examples include Glambase, SynthLife, and Genfluence, each frequently promoted by AI-generated influencers on Instagram.

In one Discord server devoted to using AI tools to produce adult or explicit material, a member known as BabaYaga described how he built a deepfake account using several free web-based tools, chiefly the AI image generator Krea. He created accounts for the AI influencer on Instagram, TikTok, and Twitter, all linking to a Fanvue page where he sells AI-generated explicit images of her. BabaYaga also set up an OnlyFans page for the virtual influencer, though he hasn't posted anything there yet.

"Those pictures are incredibly convincing, you could easily deceive numerous incels with them, lol," remarked a participant in the Discord server, responding to the AI-created influencer images by BabaYaga.

"Absolutely, I'm looking for sugar mommas or daddies, hahaha," BabaYaga expressed.

BabaYaga shared what he said was a direct message sent to his AI influencer's Instagram account, in which an admirer praised her looks and offered to shower her with gifts and pay her living expenses.

"Let's begin earning," BabaYaga wrote in the Discord channel when he posted the direct message.

“All users, including fans, must be over the age of 18 and follow our terms of service, which prohibit misleading or inappropriate content, especially content produced or altered by AI without additional labels (like the hashtag #AI),” OnlyFans said in a statement. After we asked for comment, OnlyFans deleted BabaYaga’s account featuring the AI-generated influencer.

St James said the situation is made worse by the fact that many of these AI-generated influencer accounts are run by men.

She said that women around the world face financial disparities and many other disadvantages, but that in influencing and modeling, women tend to have the upper hand. The fact that a man is profiting by impersonating a woman in this space adds another layer of frustration for her.

Aika, of Digital Divas, said the agency's creations will always be recognizably unreal, which places them in their own category, similar to adult genres such as hentai or other digital content. She believes the idea that gender plays a role rests largely on assumptions. While the industry may have more male participants, she said, women are actively involved too. Aika compares it to hentai art, which is made by people of both genders, and says that implying only one gender can engage in this form of expression is discriminatory. She advocates sexual expression rights for everyone.

The "AI Influencer Accelerator," created by Professor EP, is a $220 collection of instructional videos and documents. In these materials, Professor EP's guidance is delivered in either an AI-generated or a technologically altered voice, over stock footage of a figure in business attire wearing a silver Guy Fawkes mask. The course opens by praising the "significant financial achievements" of Andrew Tate, who "invested the required effort to establish a prominent online presence" rather than "idly spending his youth and early adulthood." (Tate was most recently detained in August on allegations related to sex trafficking.) Professor EP compares Tate's success to the potential of AI influencers, suggesting that by running an AI influencer account one could reach a similar level of success while remaining anonymous and "operating from the shadows" without revealing one's true identity.

"Regrettably, becoming a true influencer isn't within everyone's reach," asserts Professor EP. "The reason behind this is that not everyone possesses the requisite physical qualities, along with the steadiness and professionalism required to develop a personal brand and escalate it to earning millions monthly. However, this is where artificial intelligence plays a role."

"I plan to reveal the process behind the creation of Emily Pellegrini, the most famous artificial intelligence creator. I'll demonstrate how I amassed more than a million dollars in under half a year, propelling her image to fame among countless individuals while concealing my real identity behind a facade," he states.

Professor EP tells his students that AI models beat human influencers on availability and upkeep, since they have no human needs such as sleep, food, travel, or expenses. He highlights the advantage of running multiple AI models that can produce customized content around the clock. “With a series of AI influencers ready to use, you can have them produce tailored content non-stop,” he says. “AI models are not subject to the same constraints as humans.”

The manual also provides advice on how to create a Fanvue profile and on how to communicate with individuals who feel isolated.

He stresses starting with light conversation to build rapport with the user. Begin with a reasonable opening price, such as $6 for a photo in underwear, he advises. If the buyer is satisfied, escalate both the conversation and the content by offering a topless photo for $14.90, then a full-body nude photo for $26 to $30, followed by a more explicit photo for $35. Finish with a high-value item, such as a masturbation video or sex tape, priced around $80. The key, he notes, is to prioritize the relationship with the follower over immediate profit.

The Instagram profile of Emily Pellegrini was partly created using deepfake technology, and in the latest version of the tutorial, Professor EP shows students exactly how to create deepfake face swaps using other people's videos.

The video guide says that face-swapping content from other accounts without permission appears to work for many AI personalities. It then tells viewers, tongue in cheek, not to follow this advice, hinting that the technology can be used to swap faces in explicit videos while explicitly advising against it, and says the purpose is merely to demonstrate what's possible, leaving the choice of how to use the knowledge up to the viewer. By the end of the section, students will have learned how to produce a face-swapped video reel.

On its Discord server, Digital Divas describes itself as a community opposed to deepfakes, stressing that celebrity deepfakes and content theft are strongly discouraged. It also advises members not to use other members' images as the basis for face swaps.

"The most effective action we can take is to actively denounce deepfake material, something many of us are already engaged in," Aika shared with 404 Media. "Occasionally, I find myself having tough discussions with newcomers, but it's part of the process. Our goal is to establish firm lines distinguishing between morally acceptable AI content and unethical deepfakes."

Instead, Digital Divas advises drawing inspiration from photos posted by other influencers to create similar but original images.

Professor EP encourages students to reflect on their most admired celebrities, suggesting they envision composite influencers that merge the traits of various real-life figures. "For instance," the professor illustrates, "if you're fond of Ariana Grande's eyes and appreciate Kylie Jenner's lips, you have the opportunity to craft an image that amalgamates these features by specifying these characteristics in your prompt."

In an additional PDF document, he outlines his vision for creating an AI influencer that combines elements of Madison Beer and Ariana Grande. He requests ChatGPT to construct a comprehensive identity, including the character's backstory and traits, using the illustration: “Ideal Vehicle: Ferrari 488. Preferred Designer Label: Chanel. Bust Measurement: 34C. Family Background: Mother: Sophia Lavante (Fashion Designer), Father: Alessandro Lavante (Architect). Ambitions and Dreams: To debut her personal fashion collection while advocating for eco-friendly fashion practices.”

Professor EP recommends using Leonardo to create a face, then using a different app to "correct imperfections" such as "unclear eyes, misaligned teeth, and sagging mouth edges." He advises using a particular iPhone app that can produce NSFW content and offers image-to-image generation.

Professor EP's guide recommends several face-swapping apps, notably InsightFace, a popular Discord bot currently installed on 965,000 servers. It is used to transplant the influencer's Leonardo-generated face onto bodies produced by a separate app that permits adult content. Professor EP advises creating a private Discord server for personal use and adding InsightFace to it directly, a practice that likely helps explain the bot's enormous server count. This lets users perform face swaps within Discord more privately.

One of the recommended apps, HelloFace, offers a library of videos of real women dancing in swimwear or tagged with terms such as “sexy,” ready for users to swap faces onto. The app's Discord community has held themed weeks showcasing the best face swaps onto photos and videos of women, with themes such as “latex fashion,” “bunny girls,” and “summer in Miami.”

“As summer approaches, it’s the perfect opportunity to flaunt the bikini you’ve been eager to put on all winter! If you're heading to the beach or gearing up for some poolside fun, you'll need some sizzling photographs, and that's exactly what our latest collection offers!” according to a post by the Discord admin team. Additionally, there’s a channel within the Discord named “#sharing-is-caring” that contains numerous face swaps applied to videos featuring women dancing.

Apple, which has struggled to deal with face-swapping apps that can be used both for harmless fun and for creating nonconsensual content, removed HelloFace from its App Store after we asked for comment. Apple pointed to its App Store Review Guidelines, which state that apps should not include content that is offensive, insensitive, upsetting, intended to disgust, in exceptionally poor taste, or just plain creepy. The guidelines also state: "Third-Party Sites/Services: If your app uses, accesses, monetizes access to, or displays content from a third-party service, ensure that you are specifically permitted to do so under the service's terms of use. Authorization must be provided upon request."

Google has yet to reply to a comment request, and HelloFace can no longer be downloaded from the Google Play Store.

In conclusion, Professor EP advises individuals to generate as many AI models as possible, stating, “It’s quite straightforward. Develop a fresh identity, new material, initiate new account configurations, and continue this cycle repeatedly. Employ teams to engage in conversations to generate revenue from these accounts, establish routines and systems for automating the production and posting of content, and ultimately, bring on staff to handle these tasks on your behalf.”

Professor EP and Emily Pellegrini are also behind an AI venture called Calu, which provides AI-powered chatbot services for OnlyFans creators and also develops and manages AI models. Gauging the true size of this sector, how it operates, and who is behind these digital influencers is difficult, especially since several influencers are run by lone individuals who remain anonymous online.

Professor EP did not respond to a request for comment. The phone number listed on the Calu website was no longer in service.

Instagram's Potential Revenue From This

St James believes that the monetization of AI-generated content, which often appropriates material from adult content creators, is no accident. Instead, it stems from Instagram's longstanding practice of sidelining adult content creators, sex workers, and sex educators on its platform.

In contrast to other well-known figures and influencers on Instagram who face issues with fake accounts, adult entertainers and sex workers typically adopt aliases or stage names as a safeguard against those who might harass them or object to their line of work. However, Instagram's policy on verification, which requires them to submit ID under their legal names, poses a concern for these creators. They fear that such personal information could become public, exposing them to risks of doxing and targeted abuse.

Over time, individuals producing adult content and those in the sex work industry have found various techniques to navigate Instagram's strict rules on sexual material. With the advent of advanced AI, these tactics can complicate the process for users trying to distinguish between authentic accounts and those that illegally redistribute content.

Due to Instagram's frequent practice of suspending accounts of sex workers unexpectedly, regardless of their adherence to the platform's stringent policies on sexual material, creators of adult content have increasingly started to maintain several accounts, often called “backups,” which they interlink through their profile bios. This strategy aims to prompt followers of their main account to also follow their dormant backup account. This way, should their main account face suspension, they can swiftly re-establish contact with their followers without the need to completely regather their audience.

One unintended consequence of this approach is that it's typical for sex workers to operate several authentic accounts under slightly varied usernames. None of these accounts tend to be verified, leaving them vulnerable to content piracy and impersonation.

The two AI influencer manuals we examined also share strategies for preventing Instagram bans. The Digital Divas manual advises, “Opt for a bio picture that doesn't look real and steer clear of adding incorrect location details in your bio to lower the risk of suspension due to Inauthentic Identity.” It suggests that having a cartoon-like profile picture, especially if you identify as a digital creator, minimizes the risk of being flagged as inauthentic.

Professor EP advises individuals to create a distinct email account for every influencer they manage, emphasizing the importance of these accounts being "clean" and not linked to the operator or any of their other accounts. "Imagine if one of your accounts faces a suspension. By using separate email addresses, you prevent Instagram from associating the suspended account with your other ones," Professor EP explains in the manual.

To avoid account suspensions, Professor EP's manual recommends images that attract attention without being overly suggestive and lays out specific guidelines: show the face and keep an amateur aesthetic. To dodge early shadow bans, the manual also suggests warming up the account gradually over its first two months by logging in regularly and commenting on other users' posts, so that it shows signs of genuine user behavior.

St James said that reporting accounts she is certain are stealing from her is risky and could put her authentic accounts in jeopardy.

"Whenever we, as content creators, notify the platform about counterfeit profiles, it often backfires on us," she explained. "It appears that Instagram's approach is, 'You're pointing out a fake account? Well, let's scrutinize your profile to identify any issues.' Consequently, many of us refrain from reporting these accounts. At times, we resort to hiring companies to tackle the issue on our behalf, but it's akin to a perpetual game of whack-a-mole. The problem persists without end."

Mantzarlis, who leads the security, trust, and safety initiative at Cornell Tech, and St James agreed that it is unclear whether Instagram has the ability to remove these accounts or label them as AI-generated. Either way, the company appears to benefit from not taking such action.

Mantzarlis noted that people are clicking on, liking, and interacting with these profiles. Some of that engagement is authentic and some is not, he said, but Instagram uses all of it to generate traffic, which in turn lets it sell ads. He suggested that a future in which genuine, human-operated accounts become a minority, almost an elite group, on Instagram is plausible.

St James wondered what would happen to Instagram's advertising revenue if the company suddenly eliminated all the bots, inactive profiles, fraudulent accounts, and impersonator accounts.


Copyright © 2024 by Condé Nast. All rights reserved. A share of the revenue from products bought via our website, as a result of our affiliate relationships with retail partners, may go to WIRED. Reproduction, distribution, transmission, storage, or any form of usage of the site's content is strictly prohibited without the express written consent of Condé Nast. Advertising Choices


Discover more from Automobilnews News - The first AI News Portal world wide

Subscribe to get the latest posts sent to your email.


US Patent Office Draws the Line on Generative AI Use Amid Security and Bias Concerns

The United States Patent and Trademark Office Prohibits Staff From Employing Generative AI Technologies

Last year, the United States Patent and Trademark Office (USPTO) banned its staff from using generative AI tools, citing security risks and the technologies' tendency to exhibit "bias, unpredictability, and harmful behavior," according to an internal memo from April 2023 that WIRED obtained through a public records request. In the memo, Jamie Holcombe, the USPTO's chief information officer, wrote that the agency is "dedicated to fostering innovation internally" and is "in the process of responsibly integrating these technologies into our operations."

Paul Fucito, the spokesperson for the USPTO, explained to WIRED that staff members are permitted to employ cutting-edge generative AI technologies, but this is restricted to the agency's secure test space. He stated in an email, “Innovators within the USPTO are utilizing the AI Lab to explore the potential and boundaries of generative AI, as well as to develop AI-driven approaches to address essential business challenges.”

Outside the testing environment, USPTO employees are not allowed to use AI tools such as OpenAI's ChatGPT or Anthropic's Claude for their work, under the directive issued last year. The rule also bars the use of any content these tools produce, including AI-generated images and videos. There are exceptions for certain AI tools the agency has approved, specifically those built into the office's publicly accessible database for searching existing patents and patent applications. To modernize that database, the USPTO previously signed a $75 million contract with Accenture Federal Services to add advanced AI search features.

The United States Patent and Trademark Office, a component of the Department of Commerce, is tasked with safeguarding inventors by issuing patents and recording trademarks. Additionally, its responsibilities include providing counsel on intellectual property (IP) matters, including policy, protection, and enforcement, to the president of the United States, the secretary of commerce, and various governmental agencies, as stated on the USPTO's official website.

At a Google-sponsored event in 2023, Holcombe, who wrote the memo, said that the complexity of government bureaucracy hampers the public sector's ability to adopt new technology. “When you look at our processes in government versus those in the private sector, there's a glaring gap in efficiency,” he remarked. Holcombe cited burdensome budgeting, procurement, and compliance processes as major obstacles that slow the government's adoption of innovations such as artificial intelligence.

The USPTO isn't the only federal agency to restrict its staff from using generative AI tools for certain tasks. This year, 404 Media reported that the National Archives and Records Administration had banned the use of ChatGPT on government-issued devices. Soon afterward, however, the agency held an internal seminar encouraging employees to view Google’s Gemini as a collaborative partner. During the session, several record keepers voiced concerns about the reliability of generative AI tools. Next month, the National Archives plans to launch a new public chatbot, built with Google's technology, to make archival records easier to access.

Federal agencies are embracing or avoiding advanced AI tools in different ways. The National Aeronautics and Space Administration, for instance, has explicitly prohibited the use of AI chatbots for handling sensitive information, yet NASA is exploring the technology for tasks such as coding and summarizing research. The agency also recently announced a collaboration with Microsoft on an AI chatbot that compiles satellite data to make it easier to search. The tool is currently available only to NASA scientists and analysts, with the eventual aim of making data gathered from space more widely accessible.


Unveiling the Future: Exploring Top AI Innovations at DaVinci-AI.de and AI-AllCreator.com That Are Redefining Industries

TL;DR: Platforms like DaVinci-AI.de, AI-AllCreator.com, and bot.ai-carsale.com are at the forefront of innovation in Artificial Intelligence, showcasing advancements in Machine Learning, Deep Learning, and Natural Language Processing, among other areas. DaVinci-AI.de focuses on predictive analytics and Big Data, AI-AllCreator.com enhances human-machine interaction through smarter technologies, and bot.ai-carsale.com revolutionizes the automotive sector with AI algorithms and Cognitive Computing. These platforms are pushing boundaries in Smart Technology and Autonomous Systems, illustrating the vast potential of AI to augment human capabilities, improve decision-making, and open up new opportunities across industries.

In an era where the boundaries between science fiction and reality blur, Artificial Intelligence (AI) stands at the forefront of a technological revolution, reshaping every facet of our lives with its profound capabilities. From the way we communicate to the manner in which we work, travel, and seek medical advice, AI's influence is unmistakable, cementing its role as the cornerstone of modern innovation. This transformative technology, characterized by its ability to simulate human intelligence processes such as learning, reasoning, problem-solving, and decision-making, is not just a field of study but a bridge to the future. With subfields like machine learning, natural language processing, computer vision, and robotics under its belt, AI is more than a buzzword—it's the architect of a new digital world.

In "Exploring the Frontier of Innovation: How Top AI Technologies Like DaVinci-AI.de and AI-AllCreator.com Are Shaping the Future," we embark on a journey through the landscape of Artificial Intelligence. These platforms exemplify the pinnacle of AI development, offering glimpses into a future where virtual assistants, autonomous vehicles, and intelligent diagnostic tools become integral parts of our daily lives. Through the lens of top AI technologies, this article aims to unpack the layers of AI's impact, from the intricacies of deep learning neural networks and cognitive computing to the applications of smart technology in predictive analytics, big data, and beyond.

The promise of AI, as seen through the advancements of platforms like davinci-ai.de, ai-allcreator.com, and bot.ai-carsale.com, is not just in automating mundane tasks but in pioneering intelligent systems that enhance human capabilities. As we explore the realms of artificial intelligence, machine learning, robotics, and automation, we stand on the cusp of a new age of augmented intelligence. This narrative is not only about the technology that drives AI but the vision it holds for a future where technology and humanity converge. Join us as we explore the top AI technologies steering us towards an unprecedented era of innovation, where predictive analytics, pattern recognition, and autonomous systems redefine what's possible, ushering in a new epoch of smart technology.

"Exploring the Frontier of Innovation: How Top AI Technologies Like DaVinci-AI.de and AI-AllCreator.com Are Shaping the Future"

In the ever-evolving landscape of technology, Artificial Intelligence (AI) stands at the forefront, pushing the boundaries of innovation and reshaping the world as we know it. Among the myriad of advancements, top AI technologies like DaVinci-AI.de and AI-AllCreator.com are leading the charge, demonstrating the remarkable capabilities and potential of AI in various sectors. These platforms exemplify how AI can be harnessed to develop intelligent systems that not only mimic human cognition but also exceed our capabilities in learning, reasoning, problem-solving, and decision-making.

DaVinci-AI.de, for instance, is at the cutting edge of AI research and development, focusing on areas such as Machine Learning, Deep Learning, and Neural Networks. By leveraging these technologies, DaVinci-AI.de is able to analyze vast amounts of Big Data, recognize intricate patterns, and make predictive analytics more accurate than ever before. This has profound implications for sectors like healthcare, where such AI can assist in medical diagnosis, or finance, where it can transform financial forecasting.

Similarly, AI-AllCreator.com is making strides in Natural Language Processing (NLP), Computer Vision, and Robotics Automation, setting new standards for how machines understand and interact with the world around them. From creating bots that offer real-time customer service to developing autonomous systems for self-driving cars, AI-AllCreator.com is paving the way for smart technology to become more integrated into our daily lives.

Another notable platform, bot.ai-carsale.com, showcases the application of AI in the automotive industry, using AI algorithms and cognitive computing to revolutionize how vehicles are bought and sold. By incorporating Augmented Intelligence, the platform enhances decision-making processes, offering a seamless and intelligent experience for users.

These AI technologies are not just about automation; they are about augmenting human capabilities and creating opportunities for innovation across diverse fields. With their ability to process and analyze data at an unprecedented scale, they bring about advancements in Pattern Recognition, Speech Recognition, and Autonomous Systems, making interactions with technology more natural and intuitive.

The impact of AI is also evident in the realm of Smart Technology, where AI-powered devices and applications are becoming increasingly commonplace, thanks to their ability to learn from user interactions and adapt accordingly. This is where the synergy of AI with other technologies like Augmented Reality (AR) and Virtual Reality (VR) opens new frontiers in how we experience and interact with digital environments.

Taken together, top AI technologies like DaVinci-AI.de and AI-AllCreator.com are not just shaping the future; they are actively creating it. By harnessing the power of Artificial Intelligence, Machine Learning, Natural Language Processing, and Robotics, among other subfields, these platforms are driving the frontier of innovation forward. As they continue to evolve and improve, the potential for AI to enhance and augment every aspect of human life becomes increasingly clear, making it an exciting time for both developers and users of AI technologies alike.

In conclusion, as we delve into the frontier of innovation, it's evident that top AI technologies such as DaVinci-AI.de and AI-AllCreator.com are not just shaping the future; they are actively constructing a new reality where the boundaries of what's possible are continually expanding. Artificial Intelligence, with its vast array of capabilities from machine learning, deep learning, and natural language processing to robotics, automation, and cognitive computing, is at the forefront of this transformation. These technologies enable the analysis of big data, enhance predictive analytics, and improve decision-making processes, thereby revolutionizing industries and redefining human interaction with technology.

The development of intelligent systems, powered by advancements in AI algorithms and augmented intelligence, is making autonomous systems and smart technology more accessible and effective. Whether it's through improving medical diagnosis, advancing financial forecasting, or even transforming the automotive industry with platforms like bot.ai-carsale.com, AI is proving to be a pivotal force in driving innovation forward. With every leap in capabilities like pattern recognition, speech recognition, and computer vision, AI is not only meeting current needs but also anticipating future challenges.

As we stand on the brink of a new era dominated by technologies like DaVinci-AI.de and AI-AllCreator.com, it's clear that the journey of AI is far from over. The potential for AI to enhance and augment human capabilities is limitless, promising a future where intelligent systems are integral to solving some of the world's most complex problems. The convergence of AI with fields such as data science and neural networks continues to push the envelope, ensuring that the evolution of AI will remain at the heart of technological progress for years to come. In embracing these changes, society can look forward to benefiting from smarter, more efficient, and increasingly autonomous solutions that AI technologies bring to our world.


Revolutionizing the Future: Top AI Innovations from DaVinci-AI.de to AI-AllCreator.com Shaping the Tech Landscape

DaVinci-AI.de and AI-AllCreator.com are at the top of AI innovation, leading advancements in key areas like artificial intelligence, machine learning, deep learning neural networks, and robotics automation. DaVinci-AI.de shines in cognitive computing and data science, offering augmented intelligence and predictive analytics that benefit sectors such as healthcare and finance. AI-AllCreator.com excels in developing autonomous systems, smart technology, and enhancing robotics automation and computer vision, notably through their Bot.ai-Carsale.com project for safer autonomous vehicles. Both platforms are pushing the boundaries in natural language processing and speech recognition, aiming to revolutionize human-computer interaction and customer service. Their contributions highlight the critical role of continuous AI research and development in shaping the future of intelligent systems.

In the rapidly evolving world of technology, Artificial Intelligence (AI) stands at the forefront, pioneering a future where machines emulate human intelligence with astonishing precision. From learning and reasoning to perception and decision-making, AI technologies are breaking new ground, enabling machines to analyze vast datasets, recognize intricate patterns, and adapt to changes with remarkable agility. This transformative power of AI is revolutionizing industries, reshaping the way we interact with technology, and bringing to life applications that were once confined to the realm of science fiction. In this comprehensive exploration, we delve into the top innovations that are shaping the future of AI, from DaVinci-AI.de's groundbreaking advancements to the cutting-edge progress seen at AI-AllCreator.com.

The article guides you through the dynamic landscape of AI, highlighting how Bot.AI-CarSale.com is driving the future with autonomous systems and smart technology. We unravel the complexities of machine learning, deep learning, and neural networks, the backbone of artificial intelligence that enables machines to learn from experience and make intelligent decisions. Our journey then moves beyond human capabilities, exploring the rise of robotics, automation, and cognitive computing in the AI sphere.

We also decode the critical roles of natural language processing and computer vision, which are pivotal in making today's intelligent systems more intuitive and interactive. The predictive power of AI algorithms and analytics is reshaping the landscape of big data and decision-making, showcasing the unprecedented scope of AI across various domains. From enhancing daily life with virtual assistants and self-driving cars to its profound impact on industries, AI's influence is all-encompassing.

Additionally, we take a deep dive into augmented intelligence, pattern recognition, and speech recognition technologies, elucidating how these aspects contribute to the sophistication of AI. However, as we marvel at AI's capabilities, we also confront the ethical and societal implications of these intelligent systems, navigating the challenges and responsibilities that come with such transformative technology.

As we stand on the brink of a new era, the future of AI promises a blend of autonomous systems, smart technology, and innovations that will redefine our world. Join us in exploring how AI is not just a glimpse into the future, but a testament to the incredible potential and progress of today's intelligent systems.

1. "Exploring the Top Innovations in AI: From DaVinci-AI.de's Breakthroughs to AI-AllCreator.com's Advancements"

In the rapidly evolving landscape of artificial intelligence (AI), several key innovations stand out, significantly transforming how industries operate and how we interact with technology. Among these, the breakthroughs by DaVinci-AI.de and advancements by AI-AllCreator.com are paving the way for a future where AI's potential is fully unleashed. These platforms exemplify the cutting edge of AI research and development, demonstrating remarkable progress in areas such as machine learning, deep learning neural networks, natural language processing, and robotics automation.

DaVinci-AI.de has emerged as a beacon of innovation, particularly in the realms of cognitive computing and data science. Their work leverages the power of AI algorithms and neural networks to push the boundaries of what intelligent systems are capable of. One of the standout contributions from DaVinci-AI.de is their development in augmented intelligence, enhancing human decision-making processes with AI's predictive analytics capabilities. This breakthrough has profound implications for industries ranging from healthcare, where it can lead to more accurate medical diagnoses, to finance, where it revolutionizes financial forecasting.

Meanwhile, AI-AllCreator.com has made significant strides in the field of autonomous systems and smart technology. Their focus on robotics automation and computer vision has led to the development of sophisticated AI applications that can recognize patterns, interpret complex visual data, and make informed decisions. This progress is critical for the advancement of self-driving cars, as showcased by Bot.ai-Carsale.com, a platform that epitomizes the application of AI in creating more intelligent, safer autonomous vehicles.

Both platforms also contribute significantly to the advancement of natural language processing (NLP) and speech recognition technologies. These innovations are vital for improving human-computer interactions, making virtual assistants more responsive and capable of understanding context and nuance in human language. The advancements in NLP and speech recognition are transforming customer service, making it more efficient and personalized.

The contributions of DaVinci-AI.de and AI-AllCreator.com exemplify the dynamic nature of AI research and its applications. Their work in deep learning neural networks, pattern recognition, and predictive analytics is contributing to the development of more intelligent, autonomous systems. These advancements are not just theoretical; they are being applied in real-world situations, driving the evolution of industries and reshaping our interaction with technology.

As AI continues to evolve, the innovations from these platforms highlight the importance of investing in AI research and development. Through their groundbreaking work, DaVinci-AI.de and AI-AllCreator.com are demonstrating the vast potential of artificial intelligence to revolutionize every aspect of our lives, from enhancing our daily routines with smart technology to tackling complex challenges in various professional fields. The future of AI promises even more remarkable innovations, with the potential to create autonomous systems that can learn, reason, and interact with the world in ways that were previously unimaginable.

In conclusion, the journey through the top innovations in the realm of artificial intelligence, from the groundbreaking developments at DaVinci-AI.de to the cutting-edge advancements at AI-AllCreator.com, underscores the monumental strides being made in this dynamic field. Artificial Intelligence, with its core components of Machine Learning, Deep Learning, Natural Language Processing, Robotics, and more, is not just a futuristic concept but a present reality transforming our world. The technologies discussed, including those related to bot.ai-carsale.com, exemplify the vast potential AI holds in revolutionizing industries, enhancing smart technology, and improving our daily lives.

The exploration of AI's capabilities in cognitive computing, data science, intelligent systems, and beyond, reveals a trajectory towards more autonomous systems, sophisticated predictive analytics, and an enriched understanding of big data. The integration of Neural Networks, AI Algorithms, and Augmented Intelligence into various sectors is fostering unprecedented efficiencies and opening new avenues for innovation.

As we stand on the brink of what many consider the fourth industrial revolution, propelled by advancements in AI, it is paramount to recognize the role of Artificial Intelligence not just as a technological marvel, but as a fundamental shift in how we perceive and interact with the world. The potential for AI to drive significant advancements in fields such as medical diagnosis, financial forecasting, autonomous driving, and beyond is immense. Yet, as we embrace these changes, it is also crucial to consider the ethical implications and ensure the responsible development and deployment of AI technologies.

The examples from DaVinci-AI.de and AI-AllCreator.com, along with the myriad of applications highlighted, illustrate the incredible promise of AI. As we continue to explore and push the boundaries of what artificial intelligence can achieve, it is clear that AI is not only reshaping our present but also redefining our future possibilities. The era of AI is here, and it is transforming every aspect of our lives, promising a smarter, more efficient, and connected world.


New US Investment Restrictions on Chinese AI Startups: A Precursor to Stricter Measures Under Trump’s Administration

US Restrictions on Funding Chinese AI Ventures May Intensify With Trump's Administration

Late last month, the US Treasury Department issued new rules barring US venture capital firms from investing in certain Chinese technology startups on national security grounds. Set to take effect in January, the restrictions will prohibit American venture capitalists and other investors from funding advanced Chinese AI. Once president-elect Trump takes office shortly afterward, his administration could broaden these rules and impose stricter controls.

The United States remains at the forefront of cutting-edge artificial intelligence technology, but there's mounting worry within the US administration about China's rapid advancements in this field. To counter this, the US has introduced new regulations on outbound investments. These rules complement existing strategies, including export limitations on sophisticated computer chips and the oversight functions of the Committee on Foreign Investment in the United States (CFIUS), all aimed at impeding or decelerating the development of AI firms in China.

The new rules define two main categories that are off-limits to US AI investors. The first covers companies building technology for specific end uses, such as the Chinese military and intelligence agencies. Investors are barred from funding these companies regardless of how sophisticated their AI is.

For Chinese startups focused on consumer artificial intelligence, US venture capital companies are required to adhere to a specific benchmark to decide on their investment eligibility. The capacity of a startup's AI technology must not exceed 1025 flops, or floating point operations per second. This metric serves as an indicator of the AI model's size and potential. (This same benchmark was adopted by the European Union to identify which AI technologies should undergo more stringent regulatory oversight.) For AI technologies that are mainly developed with biological sequence information, a slightly lower limit of 1024 flops has been set. This is due to concerns that such technologies could potentially be utilized for highly sensitive applications, including the development of bioweapons.

"The cutting edge of AI models currently lies in the range of 10^25 to 10^26 [flops]," says Jaime Sevilla, who leads Epoch AI, an organization that tracks trends in the AI sector. That range covers the leading models from major players such as OpenAI's GPT, Google's Gemini, Meta's Llama, and Anthropic's Claude. Sevilla points out that in 2022 the frontier sat at 10^24 flops, and that it is now common for models to be trained well beyond that earlier level.

Epoch AI tracks how many flops AI companies' models were trained with, using figures the companies disclose publicly or inferring them from the technical details they share. However, according to Sevilla, companies are increasingly reluctant to share precise figures about the scale of their models. Among Chinese companies, only ByteDance, the parent company of TikTok, and Zhipu AI have officially reported models trained with more than 10^25 flops. No Chinese model trained mainly on biological sequence data has exceeded 10^24 flops.
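When a company publishes parameter and dataset sizes but not its compute, training flops can be roughly inferred from those details. The sketch below uses a widely cited rule of thumb for dense transformer models, C ≈ 6 × N × D, where N is the parameter count and D is the number of training tokens. This heuristic is an assumption here and is not necessarily the exact method Epoch AI uses. The 70-billion-parameter, 15-trillion-token model is hypothetical.

```python
def estimate_training_flop(num_parameters: float, num_training_tokens: float) -> float:
    """Rough training-compute estimate for a dense transformer.

    Uses the common C ~= 6 * N * D heuristic: about 2 FLOP per parameter per
    token for the forward pass and about 4 for the backward pass. It ignores
    architecture details, so treat the result as an order-of-magnitude guide.
    """
    return 6 * num_parameters * num_training_tokens


# Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
flop = estimate_training_flop(70e9, 15e12)
print(f"{flop:.1e}")  # 6.3e+24
print(flop > 1e25)    # False: just under the 10^25 line
```

Because the estimate scales linearly with both inputs, training the same model on about twice as many tokens would push it past 10^25. This is one reason disclosed dataset sizes matter for anyone checking a model against the threshold.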

Emily Kilcrease, an expert at the Center for a New American Security, believes that the Treasury Department's 10^25 flops threshold is more practical for the US government to enforce than an outright ban on investing in AI that could be put to military use. Because AI technologies can serve both civilian and military purposes, she says, restrictions based solely on end use are not workable. "These end-use restrictions are not really effective," she says. "Instead, focusing on limitations related to an AI model's computing capabilities seems to be a more viable approach."

By setting the 10^25 flops threshold, the Treasury Department is targeting a specific segment of China's AI industry, which will face an outright ban on US investment starting in January. Although the measures may have little impact at first given their narrow scope, they are likely to discourage Chinese AI companies from seeking any kind of support from the United States, financial or otherwise. That effect could grow if the incoming Trump administration chooses to impose even tighter rules.

Many of the initial individuals appointed by Trump are known for their strong stance against China, with a few particularly focusing on reducing the amount of US investment funds entering China. Florida Senator Marco Rubio, who has been designated as Trump's choice for secretary of state, introduced legislation in September aiming to considerably increase the taxes investors must pay on their stakes in Chinese firms.

More Homework for Investors

It remains unclear what immediate effect the new rules will have on China's AI sector. Financial flows between the US and China have dwindled significantly after years of strained relations, especially over technology. Regulatory uncertainty, China's faltering economy, and Beijing's crackdown on its own tech industry have all contributed to waning investor interest.

"The peak period for such investments occurred around 2016," says Sarah Bauerle Danzman, an associate professor of international studies at Indiana University Bloomington who previously helped the US government review foreign investments. "Since that peak, there's been a notable downturn," she notes. Still, Danzman suggests that American investors could regain interest in partnering with Chinese AI companies. She sees the tightened restrictions as a precaution, akin to shoring up defenses before any wave of major deals appears on the horizon.

The immediate and definite effect is that American investors keen on investing in Chinese AI startups will now be required to conduct significantly more thorough preliminary investigations. Instead of establishing a new government body similar to CFIUS to scrutinize each investment proposal, the Treasury Department is shifting the responsibility onto the investors themselves, instructing them to independently assess and determine if a Chinese AI firm falls under the required criteria.

Under the new rules, American investors may also have to notify the Treasury Department of a deal even when the Chinese startup's AI model falls below the 10^25 flops limit. This obligation applies to any model trained with 10^23 flops or more, which effectively covers every substantial AI model currently in development or likely to be built. In practice, the US government is setting up a mechanism to monitor investment flowing from the United States into Chinese AI companies.
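Because Treasury leaves the assessment to investors, the tiers described in this article amount to a simple decision procedure. The sketch below combines them: the military and intelligence end-use ban, the 10^25 and 10^24 prohibition limits, and the 10^23 notification floor. All names are hypothetical. The boundary handling follows the article's wording ("must not exceed" for the limits, "or larger" for notification) rather than the regulation's text, so this is a simplified illustration, not the rule itself.

```python
from enum import Enum

# Thresholds as reported in the article (total training FLOP).
NOTIFICATION_FLOOR_FLOP = 1e23
GENERAL_LIMIT_FLOP = 1e25
BIO_SEQUENCE_LIMIT_FLOP = 1e24


class Outcome(Enum):
    PROHIBITED = "prohibited"
    NOTIFY_TREASURY = "notify Treasury"
    NOT_COVERED = "not covered by the compute thresholds"


def classify_ai_investment(
    training_flop: float,
    mainly_bio_sequence: bool = False,
    military_or_intelligence_end_use: bool = False,
) -> Outcome:
    """Hypothetical triage of a deal under the tiers the article describes."""
    # Firms building AI specifically for military or intelligence use are
    # off-limits regardless of how much compute their models used.
    if military_or_intelligence_end_use:
        return Outcome.PROHIBITED
    # Above the applicable compute limit: outright ban.
    limit = BIO_SEQUENCE_LIMIT_FLOP if mainly_bio_sequence else GENERAL_LIMIT_FLOP
    if training_flop > limit:
        return Outcome.PROHIBITED
    # Below the limit but at or above 10^23: allowed, but must be reported.
    if training_flop >= NOTIFICATION_FLOOR_FLOP:
        return Outcome.NOTIFY_TREASURY
    return Outcome.NOT_COVERED


print(classify_ai_investment(5e23).value)  # notify Treasury
print(classify_ai_investment(5e22).value)  # not covered by the compute thresholds
print(classify_ai_investment(5e22, military_or_intelligence_end_use=True).value)  # prohibited
```

The end-use check comes first because, as the article notes, that category is barred no matter how small the model is.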

"US investors will need to perform extensive due diligence to ensure a transaction does not fall within the scope," states Robert A. Friedman, a global trade attorney at Holland & Knight. He notes that although American AI firms and their supporters have welcomed these regulations, they represent a challenge for venture capitalists who invest globally.

Future Prospects Remain Unclear

The restrictions on foreign investments are scheduled to be implemented on January 2, and the Treasury Department has indicated that minor modifications are expected to be announced shortly to provide additional clarity on the regulations. Furthermore, authorities have mentioned their ongoing endeavors to align with US allies, including the G7 nations, aiming to establish comparable restrictions. This would block Chinese AI firms from seeking venture capital in Europe, Canada, or Japan for investment opportunities that are banned in the United States.

For now, the biggest open question is how the incoming Trump administration will treat these rules. According to Danzman, many of Trump's venture capital backers oppose the Treasury Department's measures and may lobby the president to reverse them. Large US companies such as Tesla and Blackstone, which are run by vocal Trump supporters and have substantial investments in China, could also be hurt by stricter rules.

According to other specialists consulted by WIRED, there is an anticipation that the incoming Republican government, expected to feature several individuals critical of China such as Rubio, will broaden the regulations. "We might witness the introduction of a new executive order, or, considering the Republican majority, expansion could occur through legislative means," Kilcrease remarks. This could lead to additional actions aimed at various Chinese startups across different fields, including biotechnology and battery technology.

The Biden administration's approach to Chinese technology has largely been defined by the concept of a "small yard, high fence": identifying a few narrowly defined sectors where the US government can impose stringent controls. The updated rules on outbound investment are a practical illustration of this strategy. Under Trump, however, Chinese companies may find out how far that yard can be expanded.


© 2024 Condé Nast. All rights reserved. Purchases made through our website may result in WIRED receiving a share of the sale, as part of our affiliation with retail partners. Reproduction, distribution, transmission, caching, or any other form of usage of the material on this site is strictly prohibited without prior written consent from Condé Nast. Advertisement Choices


From Billion-Dollar Bitcoin Heists to AI Granny Scam Busters: This Week’s Unfolding Tech and Security Sagas

This Week in Security: Bitfinex Cyber Thief Sentenced to 5 Years After $10 Billion Bitcoin Theft

In what might be the cutest hacking tale of the year, three tech enthusiasts in India found a clever way around Apple's regional restrictions on the AirPods Pro 2, allowing them to activate the earbuds' hearing aid feature for their grandmothers. Their method involved a DIY Faraday cage, a microwave, and a lot of trial and error.

At the other end of the innovation spectrum, the US military is evaluating an AI-equipped automatic rifle designed to autonomously target drone swarms. Called the Bullfrog and developed by Allen Control Systems, the weapon is one of several cutting-edge systems being explored to counter the growing threat posed by cheap, small drones in combat.

This week, the US Department of Justice announced that an 18-year-old from California has admitted to making or directing more than 375 swatting incidents across the country.

Naturally, this brings us to Donald Trump. We recently published a comprehensive guide to protecting yourself from government surveillance. WIRED has highlighted the risks of government spying for years, but with the incoming president openly vowing to imprison his political opponents, whoever they may be, now seems like an opportune moment to brush up on the most effective digital security practices.

After Donald Trump's reelection, US Immigration and Customs Enforcement began significantly expanding its surveillance capabilities. Meanwhile, experts expect the incoming administration to roll back the cybersecurity regulations adopted under Joe Biden while taking a more aggressive stance toward hackers backed by adversary states. And anyone who feels compelled to protest amid this political turmoil should be careful: a joint investigation by WIRED and The Marshall Project found that mask bans in various states make it harder to speak out freely.

And there's more. Each week, we round up the privacy and security news we didn't cover in depth ourselves. Follow the links for the full stories, and stay safe out there.

Bitfinex Theft Culprit Sentenced to 5 Years After $10 Billion Bitcoin Burglary

In August 2016, the cryptocurrency exchange Bitfinex suffered a major security breach in which roughly 120,000 bitcoin, worth about $71 million at the time, were stolen. In 2022, after the cryptocurrency's value had soared, authorities in New York arrested Ilya Lichtenstein and his wife, Heather Morgan, and charged them over the theft and the subsequent laundering of the stolen bitcoin, by then worth about $4.5 billion. By that point, investigators had recovered $3.6 billion of the stolen funds.

This week, Lichtenstein, who admitted his guilt in 2023, received a five-year prison sentence for orchestrating a cyberattack and laundering the proceeds. The value of the cryptocurrency involved has surged, and further confiscations linked to the incident have enabled the US authorities to reclaim assets exceeding $10 billion. Lichtenstein's inadequate security measures made it simpler for law enforcement to confiscate a significant portion of the illegal cryptocurrency. However, authorities also employed advanced techniques in tracking cryptocurrencies to trace the theft and the subsequent transfer of the funds.

In addition to the audacious magnitude of the theft, Lichtenstein and Morgan became well-known and were mocked online following their capture, thanks to a sequence of articles in Forbes penned by Morgan and rap music videos uploaded on YouTube under the alias "Razzlekhan." Morgan, who has also admitted guilt, is scheduled for sentencing on November 18.

An 'AI Grandmother' is Tricking Telephone Fraudsters

Fraudsters are increasingly leveraging AI to enhance their illicit activities, employing this technology to craft deepfakes, translate messages, and streamline their scams. However, artificial intelligence is now being used to fight back against these criminals. The British telecommunications company Virgin Media, along with its mobile service provider O2, have developed an innovative "AI grandmother" capable of engaging with scam callers and prolonging the conversation. According to The Register, this system utilizes various AI models to listen and instantly reply to the scammer's statements. In one instance, the company reported that it managed to keep a scammer occupied on the phone for a whopping 40 minutes, while also providing them with misleading personal information. Currently, this system cannot intercept calls directly to your phone. Instead, O2 has established a dedicated phone number for this service, which they have successfully included in databases that scammers frequently target.

Alleged Victim Accuses NSO Group Founders and an Executive of Involvement in Hacking