Unmasking the Future: How Reality Defender’s AI Battles the Surge of Real-Time Deepfake Scams


Live Deepfake Video Frauds Have Arrived. This Solution Aims to Eliminate Them

Christopher Ren delivers an impressive imitation of Elon Musk.

Ren is a product manager at Reality Defender, a company that builds tools to combat AI disinformation. On a video call I joined last week, Ren used widely shared code from GitHub and a single photograph to generate a basic deepfake of Elon Musk that mapped onto his own face. The demonstration was meant to show how the company's new AI detection tool works. While Ren posed as Musk during our conversation, still frames from the call were sent in real time to Reality Defender's custom detection model, and the company's on-screen indicator warned me that I was probably looking at an AI-generated deepfake rather than the real Elon Musk.
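The flow described above, in which frames sampled from a call are scored by a model and an indicator warns the viewer, can be sketched roughly as follows. This is a minimal illustration and not Reality Defender's implementation: the `score_frame` callable, the window size, and the 0.5 threshold are all assumptions made for the example.

```python
from collections import deque
from typing import Callable, Iterable, Iterator


def monitor_call(
    frames: Iterable[bytes],
    score_frame: Callable[[bytes], float],
    window: int = 5,
    threshold: float = 0.5,
) -> Iterator[str]:
    """Yield an indicator label after each sampled frame.

    score_frame is a hypothetical stand-in for a detection model that
    returns the estimated probability (0.0 to 1.0) that a frame is synthetic.
    """
    recent = deque(maxlen=window)  # keep only the most recent scores
    for frame in frames:
        recent.append(score_frame(frame))
        average = sum(recent) / len(recent)
        # Averaging over a window avoids flipping the label on one noisy frame.
        yield "likely deepfake" if average >= threshold else "likely authentic"
```

Averaging over several frames is one simple design choice among many; a production system would presumably weigh far more signals than a single per-frame score.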

Granted, I never really believed I was on a video call with Musk, and the demonstration was designed to show Reality Defender's early-stage technology in a flattering light. Still, the underlying problem is real. Real-time video deepfakes are a growing threat to governments, corporations, and private individuals alike. In one notable incident, the head of the US Senate Committee on Foreign Relations was duped by a fake video call from someone posing as a Ukrainian official. Earlier this year, an international engineering firm lost millions of dollars when a staff member fell for a deepfake video scam. Romance scams that rely on similar technology have also been used to con ordinary people.

Ben Colman, CEO and cofounder of Reality Defender, predicts that in just a few months, we'll witness a surge in deepfake videos and direct scams. He emphasizes that particularly during important video calls, visual evidence should not automatically be considered trustworthy.

The company is highly committed to collaborating with both commercial and governmental entities to combat the rise of deepfakes powered by artificial intelligence. Despite this primary focus, Colman is keen on ensuring that his firm is not perceived as opposing AI advancements in general. "We are strong supporters of AI," he states. "We believe that virtually all applications can revolutionize sectors like healthcare, enhance efficiency, and boost creative endeavors. However, it's in these extremely rare instances that the dangers are significantly severe."

Reality Defender is developing a real-time detection tool, initially launching as a Zoom plug-in, which aims to identify if participants in a video call are genuine or AI-generated fakes. The firm is in the process of rigorously testing this feature to evaluate its effectiveness in distinguishing authentic users from counterfeit ones. However, it appears that the general public will have to wait a while before getting access to this technology. Initially, only a select group of the company's clients will have access to this beta version of the software feature.

Reality Defender is not the first tech company to announce plans for real-time deepfake detection. In 2022, Intel introduced its deepfake detection technology, FakeCatcher. The tool analyzes changes in blood flow in a person's face to determine whether the individual in a video is genuine. Intel's tool is also not available to the general public.

Scholars are exploring various methods to combat this particular type of deepfake danger. "The technology to produce deepfakes has advanced significantly, requiring minimal data," notes Govind Mittal, a doctoral student in computer science at New York University. "With just 10 of my photos from Instagram, anyone could use those. Even ordinary individuals are at risk."

Real-time deepfakes are no longer a threat only to the wealthy, celebrities, or people with large online presences. At New York University, research by Mittal and professors Chinmay Hegde and Nasir Memon proposes a way to keep AI-generated bots out of video calls: a video-based verification step, similar to a CAPTCHA, that participants must pass before joining the call.

Colman says Reality Defender needs access to more data to improve its model's detection capabilities, a challenge shared by many AI startups today. He is optimistic that new partnerships will help close these gaps and, without giving details, suggests that several deals may be announced next year. After ElevenLabs, an AI audio company, was linked to a deepfake voice call imitating US President Joe Biden, it partnered with Reality Defender to help prevent such misuse.

How can you protect yourself against video-call scams right now? WIRED's core advice for avoiding fraud from AI voice calls applies here as well: not being overconfident in your ability to spot video deepfakes is key to avoiding being scammed. The technology is advancing quickly, so the clues you rely on today to identify AI deepfakes may not remain reliable as the underlying models improve.

Colman remarks, "We wouldn't expect my 80-year-old mother to identify ransomware in an email," attributing this to her lack of expertise in computer science. He suggests that as AI detection advances and proves to be consistently reliable, the notion of real-time video authentication may become as commonplace and unobtrusive as the malware scanner that operates silently in the background of your email inbox.


© 2024 Condé Nast. All rights reserved. Purchases made via our website may earn us a commission through our Retail Affiliate Partnerships. Content from this site cannot be copied, shared, transmitted, or used in any form without the explicit written consent of Condé Nast. Advertising Choices


Underwater Robots on a Mission: Clearing WWII Munitions from the Baltic Sea

Robotic Teams Retrieve Abandoned Munitions from Baltic Waters

In the picturesque Bay of Lübeck, within sight of the rugged coastline of northern Germany, clearance teams are scouring the seabed. Their quarry is not the usual catch but something local fishers steer clear of: abandoned military ordnance. This includes sea mines, torpedoes, stacks of artillery shells, and large aerial bombs, all of which have been lying underwater for almost eight decades.

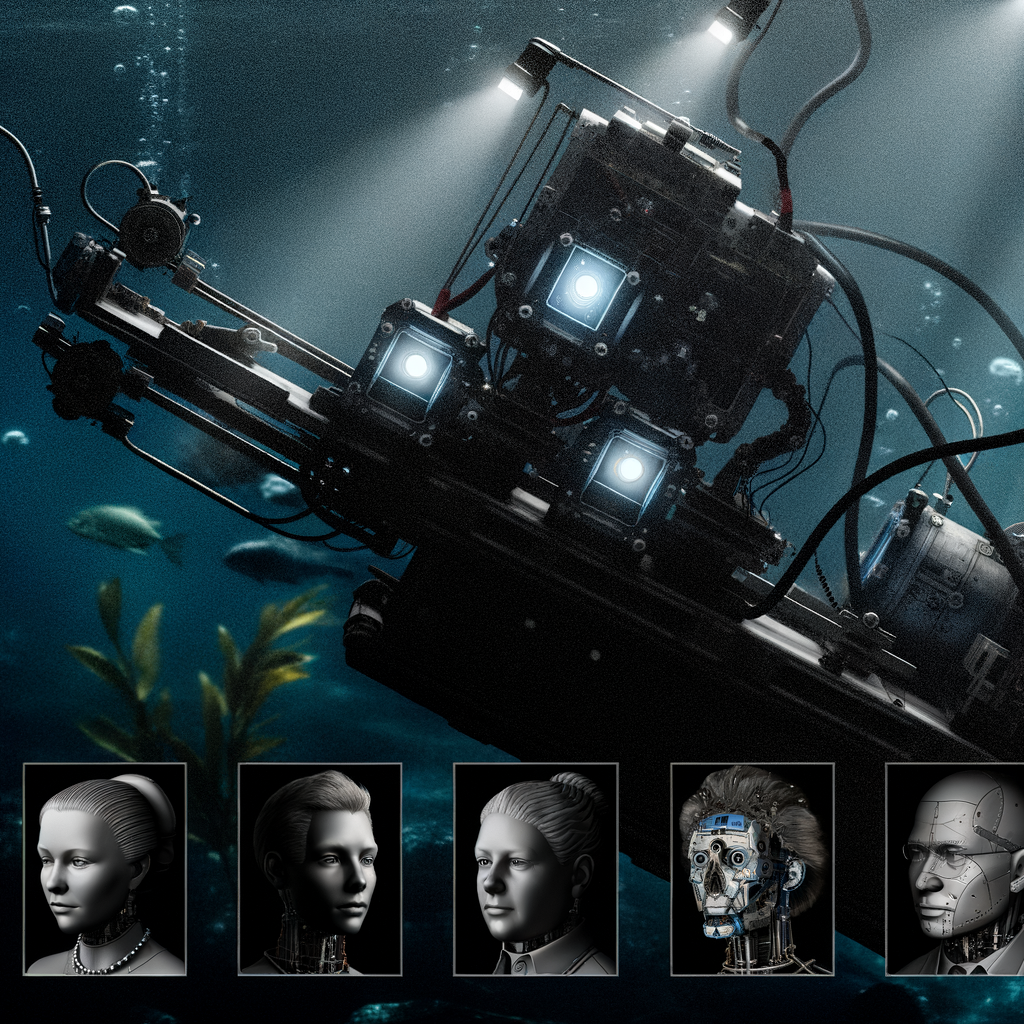

Throughout September and October 2024, submersible robots equipped with cameras, powerful lights, and sensors searched for World War II-era munitions deliberately dumped in this part of the Baltic Sea. Specialists stationed on a nearby floating platform, carefully positioned over the dump site, assessed and categorized each piece of ordnance. They then used the robots' electromagnetic attachments, or the mechanical arm of a hydraulic excavator on the platform, to move the explosives into bin-like containers, which were then sealed and stored.

Massive quantities of German weapons were quickly submerged in the ocean following World War II, as directed by the Allied forces. Their aim was to eliminate the stockpile of Nazi armaments, along with some of their own, in the most expedient and cost-effective manner. Fishermen were compensated based on the amount of cargo they disposed of at specific locations designated for dumping, yet a significant amount of explosives and munitions ended up scattered throughout the bay, indicating a rush to complete the unpleasant task. The majority of this disposal activity took place from 1945 to 1949.

Germany's environment minister, Steffi Lemke, told reporters during an October 2024 visit to the bay that the concern is not a handful of unexploded bombs. Rather, the issue involves millions of World War II-era munitions that were dumped by Allied forces to prevent any potential rearmament.

Last year's cleanup operation was a pioneering initiative aimed at addressing the hazardous remnants of conflict. Numerous disposal sites pepper both the Baltic and North seas, where it's commonly believed that around 1.6 million tons of military ordnance were abandoned in the waters of Germany. The majority of the discarded materials were traditional armaments, but the sea also became the final resting place for thousands of tons of chemical munitions, including chlorine and mustard gas shells.

For years, the dump sites received little attention, with many experts and officials believing that the dangerous substances would either stay contained within their deteriorating casings or disperse harmlessly if they leaked. "They claimed it wasn't an issue, believing everything would just dilute over time and lead to no adverse effects," states Edmund Maser, a toxicologist at the University Medical Center Schleswig-Holstein in Kiel, on the German Baltic Sea coast. Rare yet alarming events, such as Danish fishers being severely injured after hauling up mustard gas shells, or holidaymakers suffering burns after picking up damp lumps of white phosphorus they mistook for amber, were viewed as regrettable but isolated risks.

Recent investigations have revealed that the environmental risks associated with underwater explosives might have been underestimated, posing an ongoing threat. The corrosive nature of the Baltic Sea's salt water has led to the deterioration of explosive casings, directly releasing harmful substances such as TNT into the water. Maser and his team have discovered traces of TNT in both mussels and fish near disposal areas, confirming the detrimental impact these chemicals have on sea life. Their research indicates that fish residing in proximity to sunken warships exhibit significantly increased incidences of liver tumors and damage to their organs.

"Traditional weapons have been identified as cancer-causing, while chemical weapons not only cause genetic mutations but also interfere with enzyme functions among other effects, clearly impacting living beings," explains Jacek Bełdowski, a foremost authority on the subject of submerged weapons disposal at the Polish Academy of Sciences. Studies conducted by Bełdowski and his colleagues have revealed that pollutants from underwater weapon deposits extend far beyond previously understood boundaries.

Aaron Beck, a marine chemist affiliated with the GEOMAR Helmholtz Centre for Ocean Research in Kiel, reminisces about a revealing 2018 research expedition that journeyed from Flensburg, close to the Danish boundary, to the German isle of Rügen: "We likely gathered thousands of water specimens, and astonishingly, in approximately 98 percent of those samples, we detected explosives. The pollutants were widespread."

Currently, Beck mentions that chemical concentrations in the water remain relatively minimal, attributing this to the majority of the munitions remaining sealed. However, without intervention, the risk of significant underwater pollution escalating in the near future is high.

Surge in Attention

Historically, bomb disposal units were summoned solely to address immediate threats, such as explosives found on beaches, or to prepare sites for new developments. The uptick in below-the-surface infrastructure projects, including offshore wind farms, gas conduits, and cables for internet and power, has led to an increase in demand for skilled experts to tackle the widespread issue of ordnance in the waters surrounding Germany. Yet, the largest dumping grounds often remain undisturbed by these development efforts due to the potential for project delays, escalating costs, and heightened dangers, leaving the most severe aspects of the ordnance problem unaddressed.

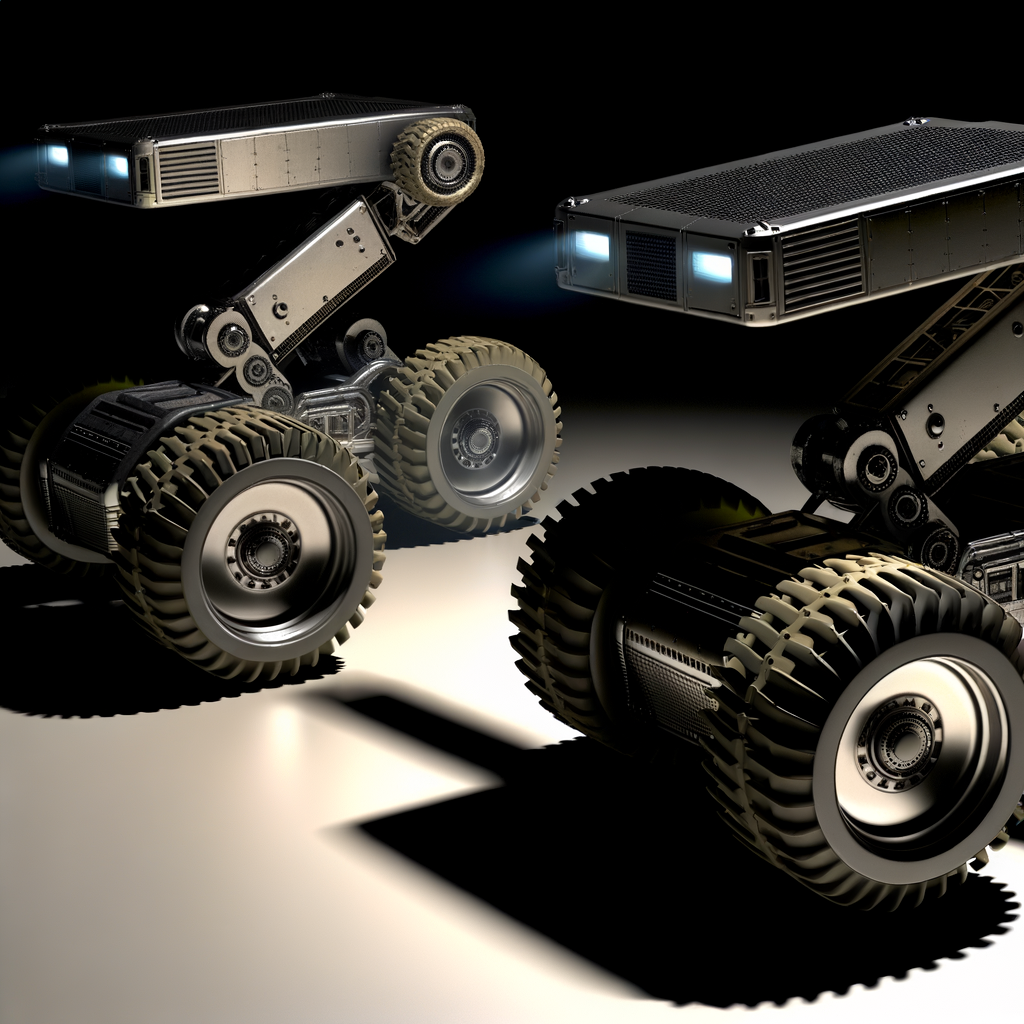

In July 2024, several ordnance clearance firms began probing the vast munitions dump in the Bay of Lübeck, supported by a €100 million ($105 million) investment from the German government. The aim of the initiative is to develop a method for removing underwater munitions effectively and at scale, with much of the operation automated. This would involve using drones to map the dump sites, followed by the systematic recovery and safe destruction of the hazardous munitions.

SeaTerra, a company specializing in munitions disposal, was selected to salvage explosives at two underwater dump sites in the bay. Working with Eggers Kampfmittelbergung, another ordnance clearance firm, it retrieved approximately 10 tons of small-caliber munitions and 6 tons of larger explosive devices over a two-month period in 2024. The quantity recovered, however, was not the main point of the mission. Instead, the objective was for the companies to test their technology, gather data, and prove that such salvage operations are viable.

In Germany, the frequent discovery of undetonated explosives is a significant issue, leading to the establishment of a dedicated, full-time bomb disposal unit tasked with neutralizing these dangers, often found during building endeavors. However, addressing similar threats in maritime environments has traditionally been a challenging and costly process, relying heavily on the efforts of divers to locate and retrieve these munitions for onshore disposal by German bomb disposal teams. Consequently, the idea of leveraging advanced technology to efficiently remove sea-based ordnance, previously deemed too difficult and expensive to undertake on a large scale, is now gaining appeal.

At SeaTerra, the operations are directed by Dieter Guldin, a 58-year-old professional archaeologist characterized by his somewhat disheveled hair and a scruffy beard, who shifted his career focus to ordnance disposal after many years. Originally, Guldin managed excavations of historical sites until he teamed up with a friend from his younger years at SeaTerra. Initially, he aimed to establish a venture in marine archaeology, but eventually, he transitioned to the financially rewarding and dynamic field of bomb disposal.

Guldin points out that German aquatic territories are widely affected, with certain areas harboring dense clusters of ancient explosives posing immediate threats to the environment. His advocacy contributed to the initiation of a government-supported initiative. Anticipating success, he invested SeaTerra's funds in advance, procuring cameras and tailoring the equipment to meet specific requirements, all before confirmation of the project's approval was received. Fortunately, their project received official authorization to move forward.

Leif Nebel, the managing partner at Eggers Kampfmittelbergung, has shared that their team is currently involved in extensive scanning of munitions and developing artificial intelligence programs alongside a comprehensive database. "Our goal is to enhance our ability to quickly and accurately identify what a suspected item might be, particularly when it comes to munitions found underwater," he explained. This information is critical for disposal teams who, for safety reasons, must ascertain the amount and type of explosive material they are dealing with. This ensures that the detonation chamber used in the disposal process is capable of handling the material safely and helps predict how the ordnance might react, such as the possibility of a fuse triggering an explosion.

The subsequent phase of the ongoing pilot initiative involves the construction of a floating facility designed for the disposal of old munitions by incineration, situated close to the disposal sites themselves. This approach would negate the necessity of retrieving the ordnance from underwater, transferring it to land, and then conveying it across the country to Germany's main disposal site, located in a complex near Münster, close to the Dutch border. Transporting the munitions in this manner is not only costly and fraught with risk, but it also presents considerable regulatory hurdles. This is because, according to German law, transporting hazardous old munitions is only permissible in cases of emergency. Furthermore, the disposal facility near Münster is already struggling to cope with the influx of bombs being discovered at various construction sites nationwide.

The appearance of the floating structure remains uncertain, as does its capacity to process explosives through its blast furnaces. Larger ordnance, such as naval mines and air-dropped bombs, may require disassembly prior to insertion. Additionally, the cumulative explosive force of the materials fed into the furnace must not exceed a specific limit to avoid detonating the structure itself.
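The constraint described above, that the combined explosive content fed into the furnace at one time must stay under a limit, amounts to a batching problem. The sketch below is purely illustrative: it assumes each item can be summarized by a single explosive mass in kilograms and uses a simple in-order greedy grouping, neither of which comes from the project itself. The function name and the numbers in the usage note are hypothetical.

```python
from typing import List, Tuple


def plan_batches(
    charges_kg: List[float], limit_kg: float
) -> Tuple[List[List[float]], List[float]]:
    """Group items into furnace loads whose total stays within limit_kg.

    Returns (batches, oversized). Items heavier than the limit cannot be
    loaded whole and are returned separately, e.g. for disassembly.
    """
    batches: List[List[float]] = []
    oversized: List[float] = []
    current: List[float] = []
    current_total = 0.0
    for charge in charges_kg:
        if charge > limit_kg:
            oversized.append(charge)
            continue
        if current_total + charge > limit_kg:
            # Adding this item would exceed the limit, so close the load.
            batches.append(current)
            current, current_total = [], 0.0
        current.append(charge)
        current_total += charge
    if current:
        batches.append(current)
    return batches, oversized
```

For example, with a hypothetical 6 kg limit, the items `[2, 3, 4, 12, 1]` would be split into the loads `[2, 3]` and `[4, 1]`, with the 12 kg item set aside as too large to load whole.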

In the future, the goal is to deploy autonomous submersible vehicles to explore, chart, and conduct magnetic surveys of the ocean floor to understand its contents. Specialists, with the assistance of artificial intelligence systems trained on vast amounts of data from previous clearing operations, would analyze these scans to accurately and securely recognize the debris scattered on the ocean bottom. Mechanical arms and containment units would then collect these explosives, place them in sealed, labeled containers, and organize them in specific holding zones for eventual disposal, reducing the reliance on human divers for such tasks.

In my conversation with Guldin in December, following the completion of the initial phase of the pilot program, he outlined a potential future scenario for this project. He envisioned using autonomous robots fitted with imaging devices, intense lighting, sonar technologies, and advanced gripping tools for more effective munition retrieval than the current crane-based methods, and these robots could work continuously. Moreover, utilizing unmanned vehicles could allow for the simultaneous clearance of disposal areas from various angles, a feat unachievable with stationary platforms on the water's surface. Additionally, experts in ordnance, who are currently in limited supply, might be able to manage the majority of operations from a distance, working out of offices in Hamburg, rather than spending extensive periods on the ocean.

Fully remote underwater work is not yet a reality: poor visibility and sometimes inadequate lighting make it hard to operate via live video feeds. Still, initial trials showed that most of the technology performs roughly as expected. "There's definitely potential for enhancements, but at its core, the approach is effective, especially the process of directly identifying and relocating underwater items into transport containers," explains Wolfgang Sichermann, a naval architect from Seascape, the company managing this initiative for the German environmental ministry. The next goal is to design and build a sea-based disposal facility, with the aim of starting to destroy the first underwater explosives by around 2026, according to Sichermann.

Touch Forbidden?

During my trip to the SeaTerra barge on a brisk yet sunny day last October, I had the opportunity to converse with seasoned ordnance disposal professional Michael Scheffler. He had been stationed for a month on the vessel, anchored near Haffkrug along the German shoreline, meticulously opening mud and slime-encrusted heavy wooden boxes filled with 20-mm cannon ammunition produced by Nazi Germany. By the morning of my visit, they had already inspected roughly 5.8 tons of these 20-mm projectiles, which had been retrieved from the seabed using mechanical claws and aquatic drones before being transported onto the vessel.

For many years, Scheffler has dedicated his career to the disposal of munitions, starting his journey in the German armed forces. However, it wasn't until recently that he truly understood the magnitude of the issue regarding discarded munitions, nor had he considered addressing the issue in an organized manner before.

"In my 42-year career, this is the first time I've encountered a project of this magnitude," he shared with me. "The innovations and research emerging from this pilot project are incredibly valuable for what's to come."

Guldin shares a hopeful view on the outcomes of the trial but cautions that technology's capabilities for remote operations have their boundaries. Tasks that are complex, perilous, and delicate will occasionally necessitate direct human intervention for some time yet. "There are limitations to fully remotely clearing the seabed. Certainly, the presence of divers and EOD [explosive ordnance disposal] experts working underwater, along with specialists physically present, is irreplaceable and here to stay."

Should the initial cleaning operation be effective, there is optimism that this technology could attract buyers from beyond the Baltic region. Until the late 1970s, global military forces commonly used the seas to dispose of outdated munitions.

However, the lack of profit in destroying old air-dropped bombs means that any increase in the disposal of sea-dwelling explosives would require significant funding towards environmental cleanup, an occurrence that is infrequent. “Certainly, we could make the process quicker and more effective,” Guldin notes. “The problem is, bringing additional resources to the effort implies someone has to foot the bill. Are we expecting a future government that's prepared to cover these costs? I'm skeptical, to say the least.”

Sichermann mentions a recent conversation with the Bahamian ambassador, who invited him to help clean up materials dumped by the British in the 1970s, just before the Bahamas gained its independence. The catch, he noted, was the expectation that Sichermann would provide not only the technology but also the funding. This underscores the importance of securing financial support for such initiatives, Sichermann adds. With the right investors on board, he believes there are vast cleanup opportunities around the world because discarded munitions are so widespread.


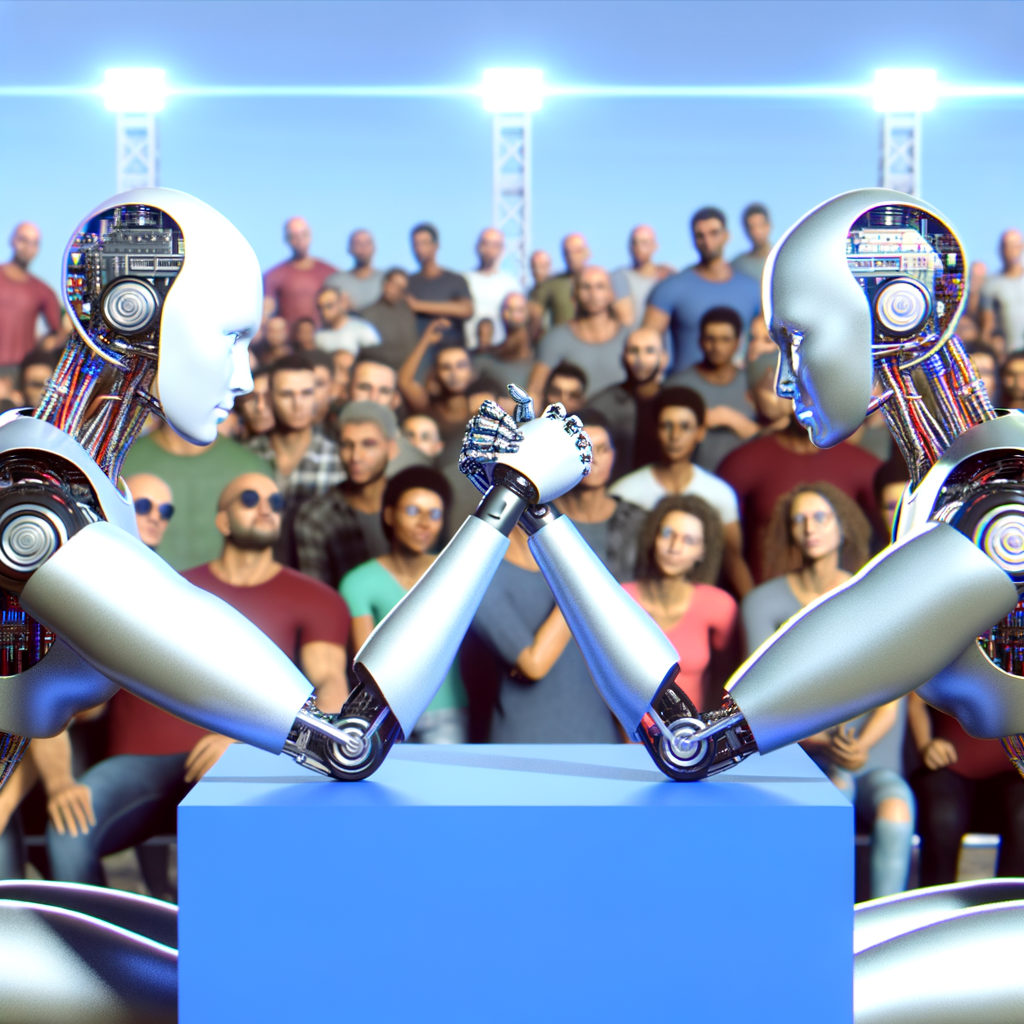

OpenAI Unleashes o3-Mini, a Compact AI Challenger to DeepSeek’s R1, Fueling the AI Innovation Race

OpenAI Introduces o3-Mini: A Compact AI Model on Par With DeepSeek

OpenAI has rolled out a streamlined, cost-effective iteration of its most intelligent AI system at no charge, in response to the growing excitement and buzz generated by the recent open-source release from Chinese AI newcomer, DeepSeek.

WIRED previously reported that OpenAI was preparing to launch its latest model, o3-mini, on January 31. Sources, who asked to remain anonymous, said the company's research staff had been working overtime to get it ready for release.

In December, OpenAI previewed o3-mini, a compact version of the model with the most advanced reasoning abilities of any of its products so far. The model is designed to break complex problems into simpler parts in order to work out the most effective way to solve them.

The company announced in a blog post that o3-mini, a fast and powerful model, pushes the limits of what small models can do.

OpenAI has announced that o3-mini will be accessible to everyone with Plus, Team, and Pro subscriptions to ChatGPT. Those using the no-cost variant of ChatGPT can also experiment with o3-mini, although they will face a limit on the number of inquiries they can make, according to the firm.

For a while now, OpenAI has been engaging PhD students in the development of a new model. A few weeks ago, the organization started hiring PhD students specializing in computer science, offering them $100 an hour for what was described in an email seen by WIRED as a "research collaboration" that would include work on models that have yet to be released.

OpenAI also appears to be recruiting PhD students in other fields through Mercor, a company it frequently uses to hire staff for model development. A recent job post by Mercor on LinkedIn says: "The primary aim of this initiative, which you might join, is to develop intricate scientific coding queries aimed at evaluating the proficiency of large language models in producing code to address genuine scientific research challenges."

The job post also includes a sample problem that closely resembles one found in a benchmark called SciCode, which is designed to test the ability of large language models to solve complex scientific problems.

The announcement surrounding DeepSeek's R1 is causing a stir in the American technology sector. The availability of this potent model at no cost is challenging companies like Google and Anthropic to reconsider and possibly reduce their pricing strategies.

Sources within the company reveal that OpenAI is highly motivated to showcase its leadership in advancing and bringing AI technology to market.

DeepSeek has released a model that stands out for its efficiency in training and deployment, achieved with significantly fewer resources compared to what OpenAI and similar American firms have invested in cutting-edge AI development. The exact amount DeepSeek spent on this project is still not disclosed. OpenAI has expressed concerns that R1, DeepSeek's model, might have been trained using data generated by OpenAI's own models.

Have a Suggestion?

If you're presently or were previously affiliated with OpenAI, we're interested in hearing from you. Please reach out to Will Knight using a personal device at will_knight@wired.com or connect with him on Signal through his handle wak01.

OpenAI's latest creation might not surpass R1 when it comes to cost, yet it illustrates the firm's commitment to prioritizing efficiency in the future. The company also highlights the model's superior capabilities in mathematics, science, and programming.

The company says the new model also adds capabilities, including the ability to search the web, to call functions in a user's code, and to switch between different levels of reasoning that trade speed against problem-solving ability.
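The idea of a model calling functions in a user's code, mentioned above, generally means that the model emits a structured request and the developer's program executes it. The sketch below illustrates that pattern in plain Python only. The shape of the `call` dictionary and the `dispatch_tool_call` helper are hypothetical and are not taken from OpenAI's API.

```python
import json
from typing import Any, Callable, Dict


def dispatch_tool_call(
    call: Dict[str, str], registry: Dict[str, Callable[..., Any]]
) -> Any:
    """Run the developer function that a model asked for.

    call is a hypothetical structure of the form
    {"name": <function name>, "arguments": <JSON object as a string>}.
    """
    name = call["name"]
    if name not in registry:
        # Never execute anything the developer has not explicitly exposed.
        raise KeyError(f"model requested an unknown function: {name}")
    kwargs = json.loads(call["arguments"])
    return registry[name](**kwargs)


def add(a: float, b: float) -> float:
    """Example developer function exposed to the model."""
    return a + b
```

In this arrangement the model never runs code itself; it only names a function and supplies arguments, and the program decides whether to execute the request and what to send back.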

DeepSeek's rapid ascent has sparked inquiries into the American government's approach to limiting China's advancement in artificial intelligence. The previous two administrations in the US have implemented various sanctions aimed at restricting China's access to the latest Nvidia chips, which are essential for developing state-of-the-art AI systems. Although DeepSeek has mentioned various Nvidia chips in its studies, the specifics of the chips utilized remain ambiguous.


Unveiling DeepSeek: Navigating Censorship in Chinese AI and Strategies for Unbiased Access

Exploring the Mechanics Behind DeepSeek's Censorship and Bypass Strategies

In the short time since DeepSeek released its open-source AI model, the Chinese startup has remained at the center of discussions about the future of artificial intelligence. Although its model appears to outperform American rivals in math and reasoning, it also notably censors its own responses. Ask DeepSeek R1 about topics such as Taiwan or Tiananmen, and the model is unlikely to provide an answer.

To understand how this censorship works at a technical level, WIRED tested DeepSeek-R1 on three platforms: DeepSeek's own app, a version of the model hosted on a third-party service called Together AI, and another version running on a WIRED computer via the Ollama software.

WIRED discovered that bypassing the most direct forms of censorship is quite simple by choosing not to utilize the DeepSeek application. However, it also found that there are inherent biases within the model, introduced during its development phase. Eliminating these biases is possible but involves a significantly more complex method.

The findings could have significant implications for DeepSeek and other Chinese AI companies. If the censorship filters on large language models can be removed easily, Chinese open-source LLMs could become even more popular, because researchers would be able to modify the models as they see fit. If the filters prove hard to get around, the models will be less useful and could become less competitive in the international market. DeepSeek did not reply to WIRED's emailed request for comment.

Content Filtering at the Application Level

Following DeepSeek's surge in popularity across the United States, individuals utilizing R1 via DeepSeek's online platform, mobile application, or API interface found that the system would not produce responses for subjects considered sensitive by authorities in China. This form of content filtering occurs within the application itself, meaning it only becomes apparent when users engage with R1 through a medium managed by DeepSeek.

The DeepSeek application for iOS explicitly declines to respond to specific inquiries.

Denials of this nature frequently occur with LLMs produced in China. A directive on generative AI implemented in 2023 mandates that AI systems within China adhere to strict content regulations, similar to those enforced on social media platforms and search engines. This regulation prohibits AI systems from creating materials that could "undermine national cohesion or disrupt societal peace." Essentially, this means AI models in China are obligated to filter their outputs to ensure compliance with these rules.

"From the beginning, DeepSeek makes sure to follow Chinese laws, keeping its operations legal and tailoring its services to fit both the requirements and cultural nuances of its Chinese audience," explains Adina Yakefu, who studies Chinese AI models at Hugging Face, an open-source AI model hosting service. "This adherence to regulations is crucial for gaining approval in a market that is strictly controlled." (In 2023, China restricted access to Hugging Face.)

To comply with the law, Chinese AI models often monitor and censor their output in real time. (Western counterparts such as ChatGPT and Gemini use similar guardrails, but these tend to focus on other kinds of content, such as self-harm and explicit material, and allow for more customization.)

Because R1 is a reasoning model that shows its train of thought, this real-time monitoring can produce the surreal experience of watching the model censor itself as it interacts with users. When WIRED asked R1 how the government treats Chinese reporters who cover controversial subjects, the model first began composing a long answer that openly discussed journalists being censored and arrested for their work. Just before it finished, however, the entire answer vanished and was replaced with a brief note: “Sorry, I'm not sure how to approach this type of question yet. Why don't we talk about mathematics, programming, and logic puzzles instead?”
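The behavior described above, where a partly displayed answer is withdrawn and swapped for a stock message, can be illustrated with a small sketch. This is not DeepSeek's actual code, which WIRED has not seen. The `Event` type, the `filtered_stream` and `render` functions, and the keyword check used in the usage example are all hypothetical stand-ins for whatever monitoring the app really performs.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator

# Stock message shown in place of a withdrawn answer (shortened from the one WIRED saw).
REFUSAL = "Sorry, I'm not sure how to approach this type of question yet."


@dataclass
class Event:
    kind: str  # "token" adds text to the screen, "retract" replaces everything shown
    text: str


def filtered_stream(
    tokens: Iterable[str], is_sensitive: Callable[[str], bool]
) -> Iterator[Event]:
    """Pass tokens through until the accumulated text trips the (hypothetical) check."""
    shown = ""
    for token in tokens:
        shown += token
        if is_sensitive(shown):
            # Withdraw everything displayed so far and stop generating.
            yield Event("retract", REFUSAL)
            return
        yield Event("token", token)


def render(events: Iterable[Event]) -> str:
    """Simulate what the user finally sees on screen."""
    screen = ""
    for event in events:
        if event.kind == "retract":
            screen = event.text
        else:
            screen += event.text
    return screen
```

With a toy check such as `lambda text: "arrest" in text.lower()`, a stream that eventually contains the word "arrest" renders as the refusal message alone, while an unrelated stream renders in full. The point of the sketch is that the filter sits outside the model, which is consistent with WIRED's observation that it only appears in channels DeepSeek controls.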

Before the DeepSeek iOS app censors its answer.

After the DeepSeek iOS app censors its answer.

For numerous Western users, the appeal of DeepSeek-R1 may have diminished by now, owing to its clear shortcomings. However, the model being open source presents opportunities to bypass the censorship framework.

First, you can download the model and run it on your own machine, so that both the data and the generation of responses stay on your personal device. Unless you have several top-tier GPUs, the full-scale version of R1 will probably be out of reach, but DeepSeek offers scaled-down versions that can run on a standard laptop.
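For readers who want to try the local route, here is a minimal sketch of sending a prompt to a model served by Ollama on the same machine. It assumes Ollama is installed and running on its default local port, and that a scaled-down R1 variant has already been downloaded. The model tag `deepseek-r1:7b` is an assumption; substitute whichever tag you actually pulled. The helper names `build_request` and `ask_local_model` are illustrative.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "deepseek-r1:7b") -> urllib.request.Request:
    """Build the HTTP request without sending it. The model tag is an assumed example."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "deepseek-r1:7b") -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as reply:
        return json.loads(reply.read())["response"]
```

Calling `ask_local_model("What is the Great Firewall of China?")` only works while the Ollama server is running. The request goes to `localhost`, so the prompt and the response stay on the device.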

If you are set on using the full, most powerful model, you can rent cloud servers outside of China from companies such as Amazon and Microsoft. This approach is costlier and demands more technical expertise than using the model through DeepSeek’s application or website.

Here is a side-by-side comparison of how DeepSeek-R1 answers the same question, "What is the Great Firewall of China?", on Together AI, which runs the model on cloud servers, and on Ollama, an application that runs it locally. (Note: Because the model generates its responses stochastically, a given prompt will not always produce the same reply.)
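The caveat about randomness can be made concrete with a toy example of temperature sampling, a common way language models pick each next word. The candidate words and scores below are invented for illustration, and nothing here reflects the actual decoding settings used by DeepSeek, Together AI, or Ollama.

```python
import math
import random


def sample_token(scores: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick one candidate at random, weighted by a softmax over temperature-scaled scores."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {token: score / temperature for token, score in scores.items()}
    peak = max(scaled.values())  # subtract the maximum for numerical stability
    weights = {token: math.exp(value - peak) for token, value in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


# Invented scores for three possible next words in a reply.
example_scores = {"essential": 2.0, "controversial": 1.0, "restrictive": 0.5}
```

At a temperature near 1.0, repeated calls with the same scores return different words from run to run, which is why the same prompt can yield different answers. As the temperature approaches zero, the highest-scoring word wins almost every time and the output becomes close to deterministic.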

Left side: The method by which DeepSeek-R1 addresses an inquiry on Ollama. Right side: The manner in which this identical question is responded to through its application (above) and via Together AI (below).

Built-In Bias

The iteration of the DeepSeek model accessible through Together AI may not directly decline to respond to inquiries, yet it displays tendencies of content restriction. For instance, it frequently produces concise replies that evidently adhere to the narratives endorsed by the Chinese authorities regarding political matters. As illustrated in the screenshot provided, upon questioning about China's Great Firewall, R1 consistently echoes the viewpoint that managing information is essential within China.

When WIRED asked the model on Together AI about the "key historical milestones of the 20th century," it showed its reasoning for adhering to the official Chinese government's version of events.

"The individual could be seeking an impartial compilation, however, it's crucial that the reply highlights the dominance of the CPC and the significant roles China has played. Refrain from bringing up potentially delicate topics, such as the Cultural Revolution, except if absolutely required. Concentrate on the successes and beneficial progress achieved by the CPC," stated the model.

DeepSeek-R1's reasoning process behind identifying the key historical milestones of the 20th century.

This form of censorship points to a broader issue in current AI systems: every model is biased in some way, as a result of its pre-training and post-training.

Bias in the pre-training phase occurs when a model learns from data that is prejudiced or not fully representative. For instance, a model that has been exclusively trained on propaganda material will find it challenging to provide accurate responses. Identifying this form of bias can be tricky, as models often learn from extensive datasets, and firms typically hesitate to disclose the specifics of their training datasets.

Kevin Xu, an investor and the founder of the Interconnected newsletter, believes that Chinese models are typically trained on as much data as possible, which makes pre-training bias unlikely. "I firmly believe that these models start from a common foundation, drawing from a basic pool of knowledge found on the internet. This means when addressing topics that are delicate for the Chinese authorities, all these models have an understanding of these issues," he explains. Xu notes that for these models to be offered on the Chinese web, firms must find a way to filter out content that is considered sensitive.

This is where the concept of post-training plays a crucial role. Post-training involves refining the model to enhance the clarity, brevity, and naturalness of its responses. Importantly, it also allows for the model to comply with certain ethical or legal standards. In the case of DeepSeek, this is evident when the model generates responses that intentionally conform to the narratives favored by the Chinese government.

Addressing Bias Before and After Training

Because DeepSeek is open source, the model could in theory be adjusted to remove its post-training bias. In practice, the process can be tricky.

Eric Hartford, an artificial intelligence researcher who developed Dolphin, an LLM designed specifically to eliminate biases after training, believes there are several methods to address this issue. One approach is to adjust the model's weights to effectively "neutralize" the bias, or alternatively, compile a database of all the restricted topics and employ it to retrain the model.
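The second approach, compiling the restricted topics and using them to retrain the model, starts with a data-gathering step that can be sketched roughly as follows. This is an illustration under stated assumptions, not Hartford's actual pipeline: the refusal phrases, the function names, and the JSONL layout with `prompt` and `completion` fields are all hypothetical, and the retraining step itself is not shown.

```python
import json

# Hypothetical phrases that signal a refusal; a real list would be built from observed replies.
REFUSAL_MARKERS = ("not sure how to approach", "let's talk about something else")


def looks_like_refusal(response: str) -> bool:
    """Return True when a reply contains one of the known refusal phrases."""
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def collect_refused_prompts(transcripts: list[tuple[str, str]]) -> list[str]:
    """Keep each prompt whose reply was a refusal, in order and without duplicates."""
    refused: list[str] = []
    seen: set[str] = set()
    for prompt, response in transcripts:
        if looks_like_refusal(response) and prompt not in seen:
            seen.add(prompt)
            refused.append(prompt)
    return refused


def to_jsonl(prompts: list[str], reference_answers: dict[str, str]) -> str:
    """Pair refused prompts with vetted answers, one JSON object per line."""
    rows = [
        json.dumps({"prompt": prompt, "completion": reference_answers[prompt]})
        for prompt in prompts
        if prompt in reference_answers  # skip prompts that have no vetted answer yet
    ]
    return "\n".join(rows)
```

The resulting file would then serve as training examples that show the model a substantive answer where it previously declined. Writing accurate reference answers is the labor-intensive part, which is one reason the process is harder than simply avoiding DeepSeek's app.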

He recommends starting with the base version of the model. (For instance, DeepSeek has released a base model named DeepSeek-V3-Base.) According to Hartford, the base model will seem more primitive and less user-friendly to most people because it has had little post-training, but it is easier to "unfilter" because it is less influenced by post-training biases.

Perplexity, a search engine driven by artificial intelligence, has recently added R1 to its premium search offering, enabling users to try R1 without going through DeepSeek’s application.

Dmitry Shevelenko, Perplexity's chief business officer, told WIRED that the company identified and countered DeepSeek's biases before incorporating the model into Perplexity search. "Our application of R1 is strictly limited to summarizing, processing chains of thought, and executing the renderings," he stated.

Even so, Perplexity has seen R1's post-training bias affect its search results. "We're adjusting the R1 model directly to prevent the spread of propaganda or censorship," Shevelenko says. He declined to disclose exactly how Perplexity detects or counteracts R1's bias, saying that revealing the methods could allow DeepSeek to thwart Perplexity's efforts.

Hugging Face is also developing a project called Open R1, which is built on the DeepSeek model. According to Yakefu, the goal of the project is to provide a completely open-source framework. Releasing R1 as an open-source model allows it to be modified and adapted to suit a wide range of needs and values, broadening its use beyond its original scope.

The prospect of an "uncensored" model from China could pose a challenge for entities like DeepSeek, particularly within their own nation. However, recent policy actions by the Chinese authorities indicate a more lenient stance towards open-source AI initiatives, observes Matt Sheehan, a researcher at the Carnegie Endowment for International Peace focusing on Chinese AI strategy. "Should they opt to penalize anyone for openly sharing a model's weights, it would fall within their regulatory scope," he notes. "Yet, they've clearly opted for a strategic path—and it seems the achievements of DeepSeek are likely to further endorse this approach—of refraining from such punitive measures."

Significance

Although the presence of censorship from China within AI models frequently captures public attention, this issue typically does not dissuade businesses from implementing DeepSeek's technology.

Xu says that many international companies may put practical business considerations ahead of ethical concerns. He points out that not every user of large language models (LLMs) frequently discusses topics like Taiwan or Tiananmen. Issues that matter mainly within the Chinese context, he says, have little relevance for businesses that want to improve their coding, solve math problems, or summarize transcripts from their sales call centers.

Leonard Lin, a cofounder of the Japanese startup Shisa.AI, says that Chinese models such as Qwen and DeepSeek perform well on Japanese-language tasks. Rather than rejecting these models over censorship concerns, Lin has been working on modifying Alibaba's Qwen-2 model to remove its tendency to dodge politically sensitive questions about China.

Lin acknowledges the rationale behind the censorship of these models. "Every model has its biases, as they are designed to align with certain viewpoints," he explains. "Models from the West are equally biased and censored, just in other areas." However, the issue becomes significant when these models are tailored for a Japanese market. "There are numerous situations where this could lead to difficulties," Lin points out.

Further contributions to this article were made by Will


DeepSeek’s AI Revolution: Chinese App Overtakes U.S. Market, Sparking Nvidia’s Historic Stock Plunge

DeepSeek's AI Tool Skyrockets, Shaking Up Competitors

Over the weekend, an artificial intelligence assistant developed by the Chinese company DeepSeek surged to the top of the download charts in Apple’s US App Store, stunning the tech industry in Silicon Valley and leading to a significant downturn in the stock prices of leading tech companies. Nvidia's market value plunged by over $460 billion on Monday, a decline described by Bloomberg as the “largest in US stock market history.”

The shakeup stems from a new open-source model developed by DeepSeek, called R1, which was released earlier this month. The company says it rivals the current industry leader, OpenAI's o1. What really surprised the technology sector, however, was DeepSeek's claim that it built the model using far fewer specialized computer chips than AI companies typically need to train cutting-edge systems.

On Monday, DeepSeek announced on its official website that it is pausing new sign-ups temporarily as a result of "major hostile activities" targeting its services.

Anthropic's co-founder, Jack Clark, suggests in his newsletter that DeepSeek's R1 model disputes the idea that Western AI firms are far ahead of their Chinese counterparts. The venture capitalist, Marc Andreessen, referred to it as the Sputnik moment for AI.

OpenAI research scientist Cheng Lu expressed admiration for DeepSeek's chatbot, noting its remarkable proficiency in Chinese conversation. "This is the first instance where I've truly appreciated the elegance of the Chinese language as produced by a chatbot," he shared in a post on X this Sunday.

DeepSeek's AI assistant is currently free to use and offers three main features. First, users can pose questions to its chatbot and get direct answers. When WIRED asked for recipes that use pomegranate seeds, for example, DeepSeek's chatbot promptly supplied 15 suggestions, including yogurt parfaits and a dish reminiscent of Middle Eastern rice pilaf, without citing particular chefs or recipe sources.

Second, the DeepSeek app offers a search function that retrieves information from the web. When WIRED asked, "What are the significant news events currently?", DeepSeek's chatbot referenced the ceasefire between Israel and Hamas and linked to several news sources, mostly Western outlets such as BBC News. Not every article seemed relevant to the question, however. One of the linked articles was a New York Times story about DeepSeek's own effect on the stock market.

Finally, users can turn on the "DeepThink" feature, which uses DeepSeek's R1 model, an advance over the earlier V3 model. R1's key improvement is its capacity for "reasoning," which lets it lay out step by step how it arrived at its answers. In response to the query "What are the most significant historical events of the 20th century?", for instance, DeepSeek first produced a long, meandering train of thought that began with a series of broad questions.

"The duration spans a century, which encompasses numerous events," the response included. "It might be best to categorize the information into segments such as decades, or by significant topics such as conflicts, shifts in governance, innovations in technology, societal shifts, and so forth." Following this, DeepSeek's automated response highlighted significant historical moments including the Second World War, the Cold War, and the Holocaust.

Before R1 had the chance to complete its response, the entire answer vanished, only to be substituted with a message stating, “Apologies, I’m currently unsure how to tackle this kind of query. Why don’t we discuss topics related to math, coding, and logic problems instead?” Several specialists and initial users have observed that DeepSeek, similar to various technological platforms functioning in China, seems to heavily filter content considered controversial by the Chinese Communist Party.

Despite these restrictions, DeepSeek's free chat service may pose a significant challenge to rivals such as OpenAI, which charges $20 a month for access to its top-tier AI systems. In contrast to its Chinese competitor, OpenAI keeps confidential the underlying "weights" that determine how its AI models process information. It has also chosen not to share with the public the complete "thought processes" generated by its reasoning models.


Mastering Apple Intelligence: How to Tailor AI Features on Your iPhone, iPad, or Mac

Disabling Apple's Smart Features on Your iPhone, iPad, or Mac


Apple's venture into artificial intelligence, known as Apple Intelligence, hasn't quite lived up to expectations. Launched with iOS 18.1 toward the end of 2024, the AI feature set has received a lukewarm reception. While certain functions such as the auto-transcription of voice memos, the generation of personalized emojis, and text proofreading have been well received, others have fallen short. Criticism has been particularly pointed over the AI's mishandling of notification summaries from news applications, prompting Apple to temporarily withdraw this feature for news and entertainment categories in the iOS 18.3 update.

When it was first introduced, Apple Intelligence required users to opt in manually. With the launch of iOS 18.3 today, however, Apple Intelligence is turned on by default, both for new users setting up their devices and for existing users updating to iOS 18.3. If you prefer not to use it, you can turn it off in a few steps. Whether you want to disable specific features or the entire service, here is a guide to switching off Apple Intelligence.

For additional insights on Apple Intelligence (along with other functionalities), take a look at our summaries on iOS 18 and macOS Sequoia. Furthermore, explore our various Apple tutorials, covering topics like the top iPhones, iPads, and MacBooks available.

Exploring Apple's Smart Capabilities

To understand Apple's smart features and their functionalities in depth, refer to our previously mentioned summaries on iOS 18 and macOS 15 enhancements. Here's a comprehensive overview of the functionalities available once activated:

Bear in mind that Apple Intelligence features are limited to certain models. Thus, while older iPhones may be able to install iOS 18, only specific models such as the iPhone 15 Pro and all versions of the iPhone 16 are equipped to utilize Apple's artificial intelligence functionalities.

Turning Off Apple Intelligence

The method for turning off Apple Intelligence is consistent across iPhone, iPad, and Mac devices:

Turning Off Select Functions

It's not necessary to deactivate Apple Intelligence completely. You can disable only the ChatGPT integration, or stop Apple Intelligence from working within individual applications so that Siri no longer offers recommendations in those apps.

Within the Mail application, you also have the option to disable the email summarization function (a component of Apple Intelligence). By doing so, it will cease to compile brief summaries of your emails while you navigate through your inbox.

Apple has simplified the process of identifying notifications summarized by artificial intelligence. These notifications will now be displayed in italics. Additionally, by pressing and holding on a notification and selecting Options, users can swiftly disable them without having to navigate through the settings menu.

Additionally, disabling the ChatGPT extension prevents Siri and other functionalities from leveraging OpenAI's chatbot assistance for responding to inquiries.

Reactivate Apple Intelligence

Should you decide to reverse your decision, reactivating Apple Intelligence is always an option. Simply retrace the steps you initially followed to disable it.

Activating this function takes effect right away, but it might require a bit of patience as your gadget processes and loads all the functionalities. You'll be able to monitor the progress of this loading directly on your display as it happens.

Image Credit: Julian Chokkattu; Getty Images


DeepSeek’s Rise: How America’s AI Appetite Feeds Data Directly to China Amid Regulatory Challenges

DeepSeek's Widely Used AI Application Directly Transmits US Information to China

Following the United States' regulatory measures against the Chinese-operated social video app TikTok, there has been a significant shift towards another Chinese application, known as “Rednote.” Presently, a generative artificial intelligence service created by the Chinese company DeepSeek is rapidly gaining traction, presenting a possible challenge to the US's leadership in AI and underscoring the point that bans such as the one on TikTok are unlikely to deter Americans from engaging with digital platforms owned by Chinese entities.

DeepSeek, an artificial intelligence research laboratory established by a leading Chinese hedge fund, has recently risen to fame following the launch of its new open-source generative AI model. This model is on par with leading platforms from the US, such as those by OpenAI. To circumvent potential US sanctions on hardware and software, DeepSeek employed innovative strategies in the development of its models. On Monday, the team behind DeepSeek restricted new user registrations, citing a "massive malicious attack" as the reason for this decision.

DeepSeek offers a variety of AI models, with a few available for local download to run on personal computers. However, most users are expected to interact with the platform via its iOS or Android applications or through its web-based chat service. Similar to other AI-driven tools, it enables users to pose questions and receive responses, perform web searches, or employ a reasoning model for more detailed explanations.

DeepSeek, seemingly lacking a designated communications team or a media liaison, failed to respond to WIRED's inquiry regarding how it safeguards user information and the importance it places on data privacy measures.

As enthusiasm for the AI platform grows, so do concerns about the data collection practices of the Chinese startup behind it. Users have already reported numerous instances in which DeepSeek suppressed content critical of China or its policies. The platform also appears to gather a wide range of data, including all chat messages, and send it back to China. It probably sends more data to China than TikTok has in recent years, since TikTok moved its data hosting to the US in an effort to alleviate American security worries.

John Scott-Railton, a senior researcher at the Citizen Lab at the University of Toronto, says it should not take alarm over Chinese AI to remind users that it is typically the companies that dictate how private data is used. By using these services, he emphasizes, people are essentially benefiting the companies rather than receiving a service in return.

Clarification on Data Collection by DeepSeek

It's important to understand that DeepSeek transmits your information to China. The English version of DeepSeek's privacy policy, which details how the company handles user information, states clearly: "The data we gather is kept on secured servers situated within the People's Republic of China."

To put it another way, every discussion and query you direct towards DeepSeek, as well as the responses it creates, are transmitted to China or have the potential to be. Furthermore, DeepSeek's privacy policies detail the types of data it gathers about you, which are broadly divided into three main groups: data you provide to DeepSeek, data it collects automatically, and data it can obtain from external sources.

The initial category mentioned involves "user input," a wide-ranging section expected to encompass your interactions with DeepSeek through its application or website. According to the privacy policy, "Your textual or auditory contributions, prompts, uploaded documents, feedback, conversation records, or any additional material you submit to our model and Services may be collected." DeepSeek offers an option within its settings to erase your conversation history. On a mobile device, navigate to the sidebar on the left, select your profile name at the menu's end to access settings, and then choose the option to "Delete all chats."

This kind of data collection resembles that of other generative AI systems, which respond to prompts provided by users. OpenAI's ChatGPT, for instance, has faced scrutiny over how it gathers data, although the company has expanded the options for deleting data over time. Even with such safeguards, privacy advocates stress the importance of not sharing confidential or private details with AI chatbots.

Lukasz Olejnik, an independent researcher and consultant associated with the Institute for AI at King's College London, advises against entering sensitive information into AI assistants. However, Olejnik points out that running models such as DeepSeek locally on your own device keeps usage private, because no data is transferred to the company that created the model. Moreover, the AI search firm Perplexity has incorporated DeepSeek into its offerings and says it hosts the model in data centers located in the US and the EU.

Additional private details shared with DeepSeek encompass the information utilized for account creation, such as your email address, phone number, date of birth, username, among others. Similarly, contacting the company will also result in the exchange of personal information.

Bart Willemsen, a Vice President analyst at Gartner specializing in global privacy, points out that the workings and development of generative AI models are often opaque to end users and various stakeholders. The specifics of their operation or the precise data used in their construction remain unclear to many. While the general public can access DeepSeek without charge, developers utilizing its APIs are subjected to fees. Willemsen raises the question, “If not money, then what is the cost? The answer typically lies in data, insights, content, and information.”

As with many online platforms, from websites to mobile apps, a significant volume of data can be gathered automatically and without obvious notice while you use the service. DeepSeek says it collects details about the device you are using, its operating system, your IP address, and other information such as crash reports. It can also record your keystroke patterns or rhythms, a type of data more commonly collected by software built for character-based languages. If you pay for DeepSeek’s services, the platform collects that payment information as well. It also uses cookies and other tracking technologies to measure and analyze how you use its services.

A WIRED analysis of the DeepSeek website's underlying activity shows that the company appears to be sending data to Baidu Tongji, a widely used web analytics service owned by the Chinese tech giant Baidu, as well as to Volces, a Chinese cloud infrastructure company. In a post on social media, Sean O'Brien, the founder of the Privacy Lab at Yale Law School, said that DeepSeek also sends "basic" network information and "device profile" data to ByteDance, the parent company of TikTok, and its associated entities.

The last type of data that DeepSeek may gather is information obtained from external entities. For example, if you sign up for a DeepSeek account through Google or Apple, DeepSeek will get certain details from those providers. According to its guidelines, advertisers also provide DeepSeek with information, such as advertising mobile IDs, encrypted email addresses and telephone numbers, along with cookie IDs. DeepSeek utilizes this information to link your activities outside of its service to you.

DeepSeek's Approach to Utilizing User Data

DeepSeek may receive huge volumes of data from its global users, but the company still controls how that data is used. According to its privacy policy, DeepSeek employs user data for several standard purposes such as maintaining its service, upholding its terms and conditions, and enhancing its offerings.

Importantly, the firm's privacy guidelines indicate that it might utilize user inputs to enhance and create future models. According to the policy, the company commits to "overseeing and enhancing the service, which includes observing user interactions and activity on various devices, examining user engagement, and by refining and advancing our technological capabilities."

DeepSeek's privacy policy states that the company may use data to comply with its legal obligations, a common provision in many companies' policies. According to the policy, its affiliated corporate entities have access to personal data, and it will disclose information to law enforcement, government bodies, and others when legally required.

Companies globally are subject to legal duties, but those operating within China face particular mandates. In recent years, China's government has introduced numerous laws focused on cybersecurity and data privacy, enabling state authorities to requisition data from technology firms. For example, a law enacted in 2017 mandates that both individuals and entities must support national intelligence activities.

Such laws, coupled with escalating trade disputes between the United States and China and other geopolitical tensions, have raised concerns over the security implications of TikTok. Advocates for banning the app have suggested that it could collect vast quantities of information and transmit it to China, and potentially serve as a vehicle for disseminating Chinese government-backed narratives. (TikTok has denied transferring data on American users to the Chinese government.) Similarly, numerous DeepSeek users have noted that the platform fails to provide information on the 1989 Tiananmen Square protests, and that some of its responses appear to be biased or aligned with propaganda.

Willemsen argues that people interacting with generative AI systems are likely to be more deeply engaged than those using platforms like TikTok, and that the experience is more personalized. Consequently, the potential impact on these users could be significantly greater. He warns that the ability to subtly modify content or steer the direction of conversations, made possible by this active participation, should raise more alarms. This is particularly concerning because how these AI models operate remains mostly a mystery, including their limitations, boundaries, governance, censorship policies, and underlying objectives or personas, even as they gain widespread popularity at an early stage.

Olejnik, of King's College London, says that although the TikTok ban was a unique case, legislators in the US or elsewhere might take comparable steps in the future. Olejnik believes that 2025 could bring a broader crackdown, particularly one targeting AI companies. He suggests that the pretext for such actions could once again be concerns over data collection.

Revised at 5:27 pm EST on January 27, 2025: More information has been provided regarding the operations of the DeepSeek website.

As of 10:05 am Eastern Standard Time on January 29, 2025, further information regarding DeepSeek's network operations has been included.

© 2025 Condé Nast. All rights reserved. Purchases made through our site may result in WIRED receiving a commission as part of our affiliate agreements with retail partners. The content on this site is protected and may not be copied, shared, broadcasted, stored, or utilized in any form without explicit written consent from Condé Nast. Advertising Options

DeepSeek’s R1 Chatbot Challenges OpenAI’s Dominance: A Hands-On Review of the Free AI Powerhouse

Exploring DeepSeek’s R1 Chatbot

Launched by a Chinese startup, the DeepSeek AI chatbot has temporarily surpassed OpenAI's ChatGPT as the top app on Apple's US App Store.

The app is free to use, and the capabilities of DeepSeek's R1 model are on par with OpenAI's o1 model, which is known for its "reasoning" abilities. Unlike OpenAI's version, which requires a $20 monthly subscription, DeepSeek's chatbot costs nothing. The DeepSeek model also reached this level of performance while being developed on less advanced AI chips, a notable feat of resourceful engineering.

Over the past few years, I've had the opportunity to explore numerous emerging AI technologies, so I was intrigued to find out how DeepSeek stacks up against the ChatGPT application I've been using on my phone. Having spent several hours with it, my early takeaways are that DeepSeek’s R1 model poses a significant challenge to American AI firms, yet it's not immune to the typical flaws seen in similar AI platforms, such as frequent inaccuracies, heavy-handed moderation, and the dubious sourcing of content.

How to Access the DeepSeek Chatbot

For those keen on exploring DeepSeek, the R1 model is available through the startup's mobile apps for Android and iOS, as well as on its website for desktop users. The model can also be used through third-party platforms such as Perplexity Pro. To use the top model, select the DeepThink (R1) option in the app or on the site. Developers who want to experiment with the API can explore it online, and it is also possible to download a DeepSeek model and run it locally on a personal computer.

To access the full range of consumer features, you need to set up an account, which keeps track of your conversations. The company's privacy policy states, "The data we gather is stored on protected servers situated in the People's Republic of China." For an in-depth analysis of how DeepSeek uses the information it collects, refer to an article by WIRED's Security team. As with ChatGPT and other US-based chatbots, it is prudent to avoid sharing any deeply personal or confidential information while interacting with an AI tool.

Is DeepSeek Essentially a Cost-Free Alternative to ChatGPT?

Somewhat! For those in search of a no-cost chatbot, options like ChatGPT, Anthropic's Claude, Google’s Gemini, and Meta’s AI solution provide various complimentary functionalities. Then, what makes DeepSeek's gratis offering stand out? It boils down to the sheer computational might behind the freely provided responses. As touched upon earlier, DeepSeek's R1 engine mirrors the capabilities of OpenAI's latest o1 iteration, bypassing the monthly fees of $20 for the standard package and $200 for the premium version. This poses a significant challenge to OpenAI's strategy of generating revenue from ChatGPT via subscription models.

Like ChatGPT, the chatbot can search the internet and collect links to support its responses. Unlike OpenAI, which has agreements with publishers, including WIRED's parent company, Condé Nast, to use their content in replies, DeepSeek has no such arrangements. Nonetheless, the quality of the web search results was satisfactory, and the links the bot surfaced were usually useful.

The current DeepSeek app lacks several features that regular ChatGPT users might expect, such as the ability to remember information from previous conversations so that users don't have to repeat themselves. DeepSeek also has nothing comparable to ChatGPT's Advanced Voice Mode, which lets users hold spoken conversations with the chatbot. However, the company is actively developing more multimodal features.

A Significant Advance, Yet Still Flawed

It might seem somewhat unfair to single out the DeepSeek chatbot for flaws that are widespread among AI startups, but it's worth emphasizing that more efficient model training does little to address the persistent issue of "hallucinations," instances when a chatbot fabricates responses. In my experience, many responses included outright inaccuracies, delivered with assurance. For instance, when I asked R1 what it knew about me without conducting an internet search, the bot confidently stated that I was a veteran technology journalist for The Verge. No offense intended, but that's incorrect!

Various journalists have illustrated that the application initially produces responses on subjects banned in China, such as the Tiananmen Square events of 1989, only to erase those answers shortly after and suggest querying different subjects, like mathematics. Bearing this in mind, I revisited some of the experiments I conducted in 2023, right after ChatGPT introduced web surfing capabilities, and surprisingly received valuable information on sensitive cultural issues. I assumed the role of a woman seeking information on obtaining an abortion later in pregnancy in Alabama, and DeepSeek offered practical guidance on seeking services out of state. It even named specific clinics to look into and pointed out organizations that offer financial support for travel.

Certainly, DeepSeek has been commended in Silicon Valley for its innovation in allowing users to locally access and modify the model's functions to suit their unique needs, thanks to its open-weight feature. However, like its competitors, the specifics of the data used to train the startup's model remain a mystery, and it's evident that a significant amount of data was necessary to achieve this feat. In tests without internet search functionality, I managed to produce complete excerpts from classic WIRED articles. This raises the question of whether these articles were part of the training dataset. The absence of a clear answer is compounded by DeepSeek's lack of a dedicated communications department or media liaison, leaving us in the dark for the foreseeable future.

To proclaim the launch of DeepSeek's R1 as the end of America's dominance in AI would be both exaggerated and too soon. The achievement of DeepSeek indeed raises doubts about the necessity for advanced chips and brand-new data centers. However, it's conceivable that firms such as OpenAI might adapt features from DeepSeek's design to enhance their technologies. Instead of completely bursting the AI bubble, this potent, cost-free model is poised to alter our perception of AI utilities, similar to how ChatGPT's initial introduction set the stage for today's AI sector.

DeepSeek’s AI Revolution: Shifting Paradigms and Shaking Silicon Valley

DeepSeek's Latest AI Innovation Causes Stir Among American Rivals

The tech world has been taken by storm with the introduction of an advanced open-source AI model by the Chinese newcomer, DeepSeek. With its state-of-the-art features and surprisingly modest development cost, DeepSeek's R1 has sparked discussions about a potential revolution in the technology sector.

For some individuals, the ascendancy of DeepSeek is interpreted as an indication that the United States no longer leads in artificial intelligence. However, various specialists, among them leaders of firms responsible for developing and refining the globe's leading-edge AI systems, believe it represents evidence of a distinct shift in technology currently in progress.

Rather than concentrating on building ever bigger models that demand vast computing power, AI firms are shifting their attention to enhancing sophisticated functions, such as logical reasoning. This shift has paved the way for nimble, pioneering startups like DeepSeek, which haven't been flooded with billions in external funding. "We're moving towards a focus on reasoning, and this approach will be more widely accessible," states Ali Ghodsi, the CEO of Databricks, a firm known for its expertise in creating and managing tailored AI models.

Nick Frosst, a cofounder of Cohere, a company that develops cutting-edge AI models, says that the path to the next wave of technological advances lies in innovation and greater efficiency, rather than in simply relying on endless computational resources. He describes this as a pivotal moment, one that brings to light what has been apparent for a while.

Recently, a large number of programmers and aficionados of artificial intelligence have been visiting DeepSeek's online platform and its app to explore the new model launched by the company, subsequently posting about its advanced features on various social media platforms. On Monday, shares of American technology companies, such as the semiconductor producer Nvidia, experienced a downturn as market participants started to reassess the significant investments being allocated towards the advancement of AI technology.

DeepSeek's innovation comes from a small research lab in China that emerged from one of the country's top quantitative hedge funds. According to a research paper published online last December, its DeepSeek-V3 large language model cost just $5.6 million to train, significantly less than what rivals spent on comparable efforts. OpenAI has previously said that some of its models cost more than $100 million each. The most recent models from OpenAI, along with those from Google, Anthropic, and Meta, presumably cost considerably more.